That would explain all the 13 year olds on Xbox Live that have had sex with my mother.

skektek's forum posts

I'm praying for zen....

Your god is dead because it's not going to be Zen.

custom chips

lower price

Pick one.

Refer to static branch.

SPU has static branch prediction and prepare-to-branch operations. https://www.research.ibm.com/cell/cell_compilation.html

You are using words you don't know.

-------------

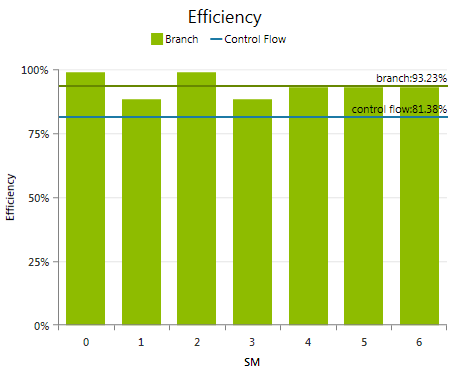

http://docs.nvidia.com/gameworks/content/developertools/desktop/analysis/report/cudaexperiments/kernellevel/branchstatistics.htm

Note why NVIDIA has PhysX CUDA.

/facepalm holyfuckingfuckfuck

Branching and predicting the branch are two separate functions.

Branching is like walking through a maze.

Branch prediction is like calculating the most efficient path from the beginning to the end of a changing maze.

Saying that SPUs have branch prediction is like saying that you have a GPS because you printed out instructions to grandma's house from Mapquest.com.

Again, IBM claims SPU has static branch prediction and prepare-to-branch operations.

Static prediction is the simplest branch prediction technique because it does not rely on information about the dynamic history of code executing. Instead it predicts the outcome of a branch based solely on the branch instruction.

Dynamic branch prediction uses information about taken or not-taken branches gathered at run time to predict the outcome of a branch.
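To make the static/dynamic distinction quoted above concrete, here is a minimal toy sketch. It assumes a "backward taken, forward not taken" rule for the static case and a single 2-bit saturating counter for the dynamic case. Both are textbook illustrations with hypothetical names, and neither models the SPU or any real CPU.

```python
# Illustrative sketch only: toy predictors, not a model of any real hardware.

def static_predict(is_backward_branch):
    """Static heuristic (assumed here): backward branches taken, forward not taken."""
    return is_backward_branch


class TwoBitCounter:
    """Dynamic predictor: one 2-bit saturating counter; state >= 2 means 'taken'."""

    def __init__(self):
        self.state = 1  # start weakly not-taken

    def predict(self):
        return self.state >= 2

    def update(self, taken):
        # Move toward 3 on taken, toward 0 on not taken, saturating at both ends.
        if taken:
            self.state = min(3, self.state + 1)
        else:
            self.state = max(0, self.state - 1)


def accuracy_static(outcomes, is_backward_branch):
    """Fraction of outcomes matched by a guess that never changes."""
    guess = static_predict(is_backward_branch)
    return sum(1 for taken in outcomes if taken == guess) / len(outcomes)


def accuracy_dynamic(outcomes):
    """Fraction of outcomes matched by a predictor that learns from history."""
    predictor = TwoBitCounter()
    hits = 0
    for taken in outcomes:
        if predictor.predict() == taken:
            hits += 1
        predictor.update(taken)
    return hits / len(outcomes)


# A forward branch that happens to be taken 90% of the time at run time.
trace = ([True] * 9 + [False]) * 10
print("static :", accuracy_static(trace, is_backward_branch=False))
print("dynamic:", accuracy_dynamic(trace))
```

The static guess is fixed by the shape of the branch, so it stays wrong on this trace no matter how often the branch is taken, while the counter adapts after the first outcome.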

A two-level adaptive predictor with globally shared history buffer and pattern history table is called a "gshare" predictor if it XORs the global history and branch PC, and "gselect" if it concatenates them. Global branch prediction is used in AMD processors, and in Intel Pentium M, Core, Core 2, and Silvermont-based Atom processors.
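A rough sketch of the gshare idea described above: the global history is XORed with the branch PC to index a table of 2-bit counters. The table size, history length, and class and function names are assumptions made for illustration, not details of any AMD or Intel design.

```python
# Toy gshare sketch; index_bits and all names are illustrative assumptions.

class GShare:
    def __init__(self, index_bits=10):
        self.mask = (1 << index_bits) - 1
        self.history = 0                      # global branch history register
        self.table = [1] * (1 << index_bits)  # 2-bit counters, weakly not-taken

    def _index(self, pc):
        # gshare: XOR the global history with the branch PC.
        return (pc ^ self.history) & self.mask

    def predict(self, pc):
        return self.table[self._index(pc)] >= 2

    def update(self, pc, taken):
        i = self._index(pc)
        if taken:
            self.table[i] = min(3, self.table[i] + 1)
        else:
            self.table[i] = max(0, self.table[i] - 1)
        # Shift the newest outcome into the history register.
        self.history = ((self.history << 1) | int(taken)) & self.mask


def run(predictor, pc, outcomes):
    """Return the fraction of correctly predicted outcomes for one branch."""
    hits = 0
    for taken in outcomes:
        if predictor.predict(pc) == taken:
            hits += 1
        predictor.update(pc, taken)
    return hits / len(outcomes)


# A strictly alternating branch: taken, not taken, taken, ...
alternating = [i % 2 == 0 for i in range(1000)]
print(run(GShare(), pc=0x40, outcomes=alternating))
```

Because the history is part of the index, the alternating pattern ends up using two different counters, one for each phase, so the predictor can learn it after a short warm-up. A lone counter with no history cannot do that.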

The Intel Core i7 has overriding branch prediction with two branch target buffers and possibly two or more branch predictors.

The AMD Ryzen processor, previewed on December 13th, 2016, revealed its newest processor architecture using a neural-network-based branch predictor to minimize prediction errors.

Read https://en.wikipedia.org/wiki/Branch_predictor

Facepalm yourself.

Wow, it took you a long time to read through the wiki article - and you still didn't learn anything :)

You claimed SPU doesn't have "branch prediction" while IBM claimed SPU has static branch prediction. Try again.

The reason why I use a wiki source is due to your failure with the basics.

You are wasting all this time and you don't even bother to read what I wrote:

"They don't have any branch prediction at all. Branch prediction has to be emulated (predicted) in the compiler"

Static branch prediction is monolithically compiled into the binary.
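One way to picture "compiled into the binary" is a hint chosen once from a training profile and never revised afterwards. The sketch below is a toy model under that assumption. `compile_hint` and `run_with_fixed_hint` are hypothetical helpers, not part of any real compiler or of the Cell toolchain.

```python
# Toy model of a compile-time branch hint; all names here are hypothetical.

def compile_hint(profile_outcomes):
    """Pick one fixed hint from a training profile: True means 'predict taken'."""
    taken = sum(profile_outcomes)
    return taken * 2 >= len(profile_outcomes)


def run_with_fixed_hint(hint, outcomes):
    """Count mispredictions when the hint cannot change at run time."""
    return sum(1 for taken in outcomes if taken != hint)


profile = [True] * 95 + [False] * 5   # training run: branch mostly taken
hint = compile_hint(profile)          # decided once, "baked into the binary"

same_behaviour = [True] * 95 + [False] * 5
changed_behaviour = [False] * 95 + [True] * 5

print("mispredictions, same data   :", run_with_fixed_hint(hint, same_behaviour))
print("mispredictions, changed data:", run_with_fixed_hint(hint, changed_behaviour))
```

In this model the hint works well while run-time behaviour matches the profile and poorly once it flips, because nothing updates it.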

RSX's branch was very bad.

SPU's branch was better than very bad.

Neither of those devices even supports branch prediction.

You are using words you don't know.

Refer to static branch.

SPU has static branch prediction and prepare-to-branch operations. https://www.research.ibm.com/cell/cell_compilation.html

You are using words you don't know.

-------------

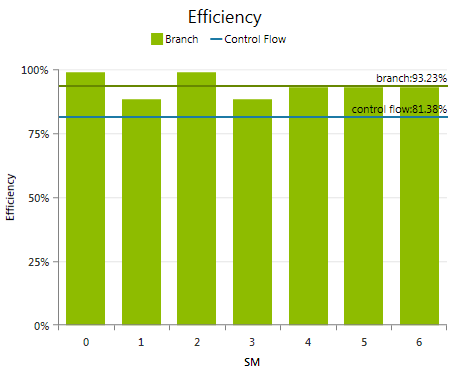

http://docs.nvidia.com/gameworks/content/developertools/desktop/analysis/report/cudaexperiments/kernellevel/branchstatistics.htm

Note why NVIDIA has PhysX CUDA.

/facepalm holyfuckingfuckfuck

Branching and predicting the branch are two separate functions.

Branching is like walking through a maze.

Branch prediction is like calculating the most efficient path from the beginning to the end of a changing maze.

Saying that SPUs have branch prediction is like saying that you have a GPS because you printed out instructions to grandma's house from Mapquest.com.

Again, IBM claims SPU has static branch prediction and prepare-to-branch operations.

Static prediction is the simplest branch prediction technique because it does not rely on information about the dynamic history of code executing. Instead it predicts the outcome of a branch based solely on the branch instruction.

Dynamic branch prediction[2] uses information about taken or not taken branches gathered at run-time to predict the outcome of a branch

A two-level adaptive predictor with globally shared history buffer and pattern history table is called a "gshare" predictor if it xors the global history and branch PC, and "gselect" if it concatenates them. Global branch prediction is used in AMD processors, and in Intel Pentium M, Core, Core 2, and Silvermont-based Atom processors

The Intel Core i7 has overriding branch prediction with two branch target buffers and possibly two or more branch predictors.

The AMDRyzen processor, previewed on December, 13th, 2016, revealed its newest processor architecture using a neural network based branch predictor to minimize prediction errors.

Read https://en.wikipedia.org/wiki/Branch_predictor

Facepalm yourself.

Wow, it took you a long time to read through the wiki article - and you still didn't learn anything :)

Motion blur and Depth of field should both be killed with fire.

Does that include film as well? Why or why not?

♫ ♪ Everybody was kung fu fighting ♪ ♫

DSPs are bad at branch prediction, which is funny because Ronvalencia quotes a Sony hater from Beyond3D claiming you have to move branching to the SPEs, which is a joke.. lol

The Cell doesn't have any DSPs (although DSP functions can be emulated).

The SPE cores aren't "bad" at branch prediction. They don't have any branch prediction at all. Branch prediction has to be emulated (predicted) in the compiler, which isn't nearly as efficient as hardware branch prediction.

RSX's branch was very bad.

SPU's branch was a better than very bad.

Neither of those devices even support branch prediction.

You are using words you don't know.

Refer to static branch.

SPU has static branch prediction and prepare-to-branch operations. https://www.research.ibm.com/cell/cell_compilation.html

You are using words you don't know.

-------------

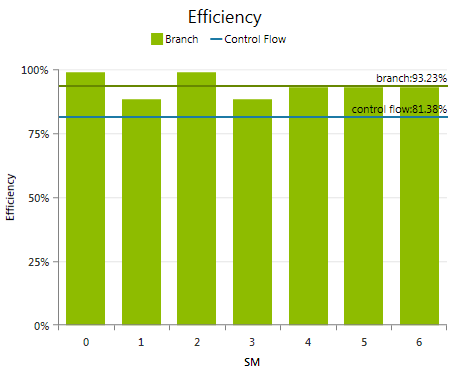

http://docs.nvidia.com/gameworks/content/developertools/desktop/analysis/report/cudaexperiments/kernellevel/branchstatistics.htm

Note why NVIDIA has PhysX CUDA.

/facepalm holyfuckingfuckfuck

Branching and predicting the branch are two separate functions.

Branching is like walking through a maze.

Branch prediction is like calculating the most efficient path from the beginning to the end of a changing maze.

Saying that SPUs have branch prediction is like saying that you have a GPS because you printed out instructions to grandma's house from Mapquest.com.

Dark Souls games aren't exactly known for their excellent graphics (although, somewhat paradoxically, they have some of the best atmospheres in gaming) but Dark Souls 2 is especially ugly. The world is bright, way too bright for a series that is noted for its oppressive darkness. There is a half-baked torch mechanic in the game that only really makes sense for a darker world. It almost feels like the brightness was jacked up near the very end of the game's development. If you look closely enough the textures are bad, sometimes N64 bad (look around the corner from the Dragon Aerie bonfire). The world itself is often disjointed; different areas feel haphazardly tacked together in ways that make no logical sense. Many areas are simply empty and devoid of detail (e.g. Drangleic Castle).

Zelda: Breath of the Wild. Most of the game is inexplicably washed out. Lots of pop-in and aliasing issues. It looks more like a 2007 game than a 2017 one.

The Cell presented two difficulties: most of the cores lacked branch prediction, and most of its performance relied on parallelization at a time when parallelization was not a solved problem.

I fell for that BS last gen and I'm glad I didn't this gen. PC gaming means a hell of a lot of hassle and at the end of the day you're still stuck playing Xbox ports. All the hoops you gotta jump through just to get the PC to do what a console does right out of the box. Ordering parts that work together, assembling the shit. Buying all sorts of peripherals trying to get some clunky desktop to fit in your living room. Not to mention the kind of dough you have to drop to make a system that would have an actual discernible difference from consoles. For the price you pay you can get a PS4 Pro, PSVR, Switch and even an Xbone and still have money left over for a 4K TV. You'll have access to all the hot titles that either come late to PC or will never come at all, like Bloodborne, Nioh, Horizon, Halo, Zelda, The Last Guardian, Uncharted, Resident Evil 7 in VR, Final Fantasy 15. I mean that's not even half of it. Not to mention that with multiplats you don't have to worry about hackers, cheaters and dead communities. What's PC got? Counter-Strike? A couple rinky-dink strategy games? PC is twice the hassle for half the fun. If you don't see why people don't want to deal with that then I've got nothing to tell ya.

Well said. PC just doesn't have the same utility as a console. It can be a PITA to set up and maintain a PC. Sometimes I just want to game and not fuck around with Windows.

That being said, some people actually like to build PCs. And there are mods, which add a ton of value (though that can be a double-edged sword: sometimes I find myself modding more than gaming).
