See Pedro and you say lemmings are happy with the series X.🤣🤣

I am always on your mind it seems. 🤣

It is funny seeing you trying to deflect because you don't like what you are reading.

Lol, the only thing the so-called laptop demo would prove is that UE5 is cross-compatible and scalable across multiple hardware, provided one tunes the settings. After all, the settings and resolution used on the laptop weren't confirmed (it could have been run with downgraded settings/assets). Boasting about the laptop running UE5 as a win is like bragging that running Crysis at PS360 settings (or Witcher 3 on the Switch) is superior while the other platform is running at max settings.

https://twitter.com/TimSweeneyEpic/status/1261800971585404930?s=20

I had a good laugh at people equating a laptop playing a 1080p video of the PS5 UE5 demo with running the engine demo itself....

UE5 tech demo on a RTX 2080 & 970 EVO Plus notebook is 1440p40

Epic China engineers’ interview, if you know Chinese you can confirm it by yourself.

https://www.bilibili.com/video/BV1kK411W7fK

53:00, he said his notebook could run at 1440p 40fps+, optimization target is 60fps for next-gen.

2:08:00, SSD bandwidth (for the flying part) isn’t as high as people said; a strict-spec SSD isn’t needed (a decent SSD is OK).

Someone at TGFCer forum said, the engineer just confirmed to him: RTX 2080 GPU notebook but forgot the exact SSD model, maybe 970 EVO Plus. (http://club.tgfcer.com/thread-8307013-7-1.html 94th post)

Shocking I tell you!

RTX 2080 mobile (150W, 1590 MHz boost) is gimped when compared to desktop RTX 2080 (215W).

https://www.notebookcheck.net/NVIDIA-GeForce-RTX-2080-Laptop-Graphics-Card.384931.0.html

RTX 2080 mobile is like desktop RTX 2070.

For laptops, there's RTX 2080 mobile (~150 watts) and RTX 2080 Max Q (~80 watts).

Source

The very DF video about it.

Source

"For the benchmark we just ran at full Ultra mode." "With none of those extra settings like I said".

People just read the article about the SX and missed this clear difference in the full DF breakdown. It performed a bit worse than a 2080 at Ultra. Not at Ultra+.

From https://www.eurogamer.net/articles/digitalfoundry-2020-inside-xbox-series-x-full-specs

but there was one startling takeaway - we were shown benchmark results that, on this two-week-old, unoptimised port, already deliver very, very similar performance to an RTX 2080.

https://youtu.be/oNZibJazWTo?t=726

Jensen Huang wasn't wrong when he said these notebooks are more powerful than next-gen consoles.

Console*

Don't lump the Series X in with the PlayStation 5 as if they have the same power envelope, they don't.

We haven't seen the RT performance of XSX yet. Hell we haven't yet seen the traditional raster performance. He might still be right.

We already know he's not right: that two-week-old Gears 5 port, unoptimized and with settings pushed beyond even the PC's maximum, was running at the same performance profile as a DESKTOP RTX 2080.

Gears 5 wasn't even the most demanding game on PC. It's the same with Forza. Just because they had the same maximum ceiling doesn't mean PCs can't be pushed further, only that they held it back. Also, that was a controlled slice for PR. We don't have any third-party benchmarks.

On being friendly to AMD GPUs, Gears 5 is not Forza Motorsport or Battlefield V.

PC gamers on this forum are not very bright.

A 2080 max q will not touch a PS5.

https://gpu.userbenchmark.com/Compare/Nvidia-RTX-2080-Mobile-Max-Q-vs-AMD-RX-5700-XT/m704710vs4045

Heavy geometry dooms AMD GPUs and PS5 is not going to escape it.

https://mobile.twitter.com/TimSweeneyEpic/status/1261800971585404930?s=20

End thread!

The guys in the video are Devs from EPIC CHINA they 150% have access to URV

— Mike from Philly (@Mik3Rosario) May 17, 2020

@femtog: Epic deleted the video and Tim Sweeney is damage controlling.

No one was claiming they saw a video running on a laptop. Which would still be weird if the video was outperforming the original source in both resolution and frame rate.

@phbz: The guy never showed a video, and even if he did, what were the settings? The engine scales everything dynamically, so we don't even know if the asset quality was the same. What we do know is that the 2080 Max-Q in this laptop is in fact weaker than an AMD 5700 and a 5700 XT, which are both weaker than a PS5.

@femtog: Epic deleted the video and Tim Sweeney is damage controlling.

No one was claiming they saw a video running on a laptop. Which would still be weird if the video was outperforming the original source in both resolution and frame rate.

That's exactly what is happening, he even said in a tweet that he didn't even know what they were saying. He's in a bind to be mindful of Sony because this revelation completely evaporates the marketing illusion.

From https://www.eurogamer.net/articles/digitalfoundry-2020-inside-xbox-series-x-full-specs about Gears 5's unreal engine 4 build

The developers worked with Epic Games in getting UE4 operating on Series X, then simply upped all of the internal quality presets to the equivalent of PC's ultra, adding improved contact shadows and UE4's brand-new (software-based) ray traced screen-space global illumination.

VS

https://www.eurogamer.net/articles/digitalfoundry-2020-this-is-next-gen-unreal-engine-running-on-playstation-5

backed up by real-time global illumination that's fully dynamic

@kazhirai: https://amp.reddit.com/r/PS5/comments/gl4rx4/ue5_demo_on_a_rtx_2080_970_evo_plus_laptop_runs/

The TC didn't post the entire translation. Apparently it dropped to 1080p on the laptop. It only ran at 1440p and 40fps at the beginning. That's to say this isn't all complete bullshit.

@femtog: Exactly, he never showed a video, which makes Tim's justification even weirder: that people are confusing what the Epic engineer said with something that didn't happen at all, after they deleted the original source. But even if it did happen, how does a video end up running 10fps faster?

We don't even know how optimized the demo was for the PS5, or whether they limited it to 30fps for the sake of consistency and it was actually outperforming the laptop's 40fps.

It doesn't need to be when it very clearly is...

Then you are simply making an assumption. You can't state your assumptions as facts.

There's no assumptions, it looks nothing like Unreal Engine 4, there's no polygonal edges, the scaling and geometric intricacy is the same as was seen in this UE5 demonstration.

It's Unreal Engine 5, you're just being obtuse.

You are making an assumption. Unreal 5 was revealed a few days ago. Hellblade 2 last year. The demo shown on Thursday was the FIRST showing of Unreal 5. Until you can provide evidence to the contrary you are making a baseless assumption.

I'm going to screenshot this, you're just being dumb at this point in contrast to the obvious.

"But you're just making assumptions!"

The way it looks is a rapid departure from anything Unreal Engine 4, and everything seen from it identically mirrors the tech on display in that Unreal Engine 5 reveal. There's a difference between a game being shown with something ambiguous and you don't know what it is and the engine being officially revealed at a later date.

Hellblade II being shown doesn't contradict the official reveal of their engine. Seems pretty obvious bud.

https://twitter.com/XboxP3/status/1260681227071131648

2020 and people still blindly believe what a guy who has been found talking shit n+1 times says.

He's like Todd Howard's lost twin

@kazhirai: lmfao they're in the same ballpark, you're gonna have to deal with that bro. Oh and as always, Sony will have the better games as they've proven generation after generation. Xbox fanboys, enjoy the unnoticeable difference in pixels, is about all you'll get from a 18 percent difference that's mitigated by SSD differences.

Your "that's mitigated by SSD differences" is bullshit.

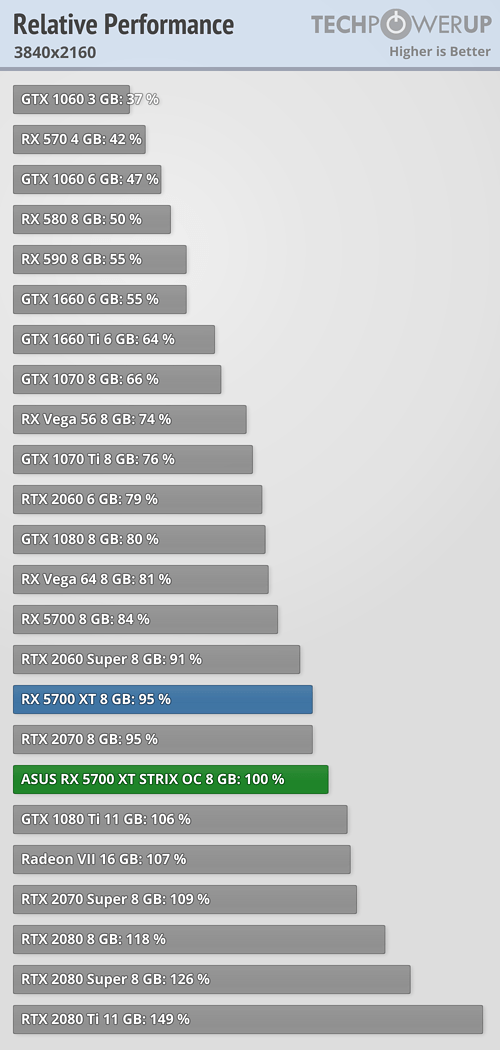

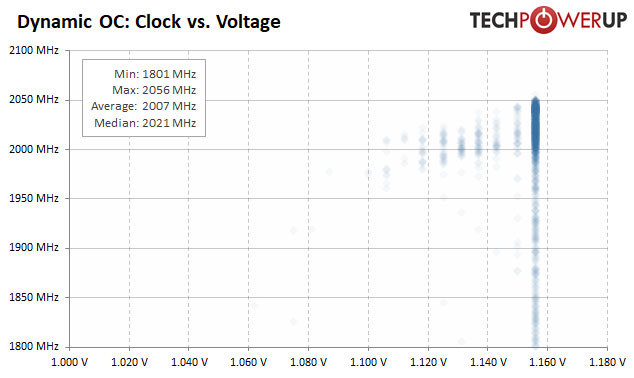

On PC, the ASUS ROG RX 5700 XT Strix OC, at its 2007 MHz average clock, already delivers 10.275 TFLOPS, similar to the PS5.

Math: 2 x 40 x 64 x 2.007 GHz = 10,275.84 GFLOPS, or 10.275 TFLOPS
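The arithmetic above can be checked with a short Python sketch. The function name `fp32_tflops` is made up for illustration. The 40 CUs, 64 lanes per CU, 2 FLOPs per lane per clock and 2.007 GHz come from the post; the PS5 line assumes Sony's published 36 CUs at up to 2.23 GHz, which the post does not state.

```python
def fp32_tflops(compute_units: int, clock_ghz: float,
                lanes_per_cu: int = 64, flops_per_lane_per_clock: int = 2) -> float:
    """Peak FP32 throughput in TFLOPS (theoretical ceiling, not measured performance)."""
    gflops = flops_per_lane_per_clock * compute_units * lanes_per_cu * clock_ghz
    return gflops / 1000.0


# Figures from the post: 40 CUs at a 2.007 GHz average clock.
strix_5700xt = fp32_tflops(compute_units=40, clock_ghz=2.007)

# Assumption (not in the post): PS5 at 36 CUs and its stated peak clock of 2.23 GHz.
ps5_peak = fp32_tflops(compute_units=36, clock_ghz=2.23)

print(f"RX 5700 XT Strix OC: {strix_5700xt:.3f} TFLOPS")
print(f"PS5 (peak clock):    {ps5_peak:.3f} TFLOPS")
```

This is only peak shader math. It says nothing about memory bandwidth, feature set, or how long either chip holds that clock.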

The ASUS ROG RX 5700 XT Strix OC is missing the DX12U feature set. NAVI 10's "primitive shader" is similar to DX12U's "mesh shader".

Hellblade 2's demo run has 4K resolution

Sony complained to Epic. And Epic got the video taken down according to b3yondgaming.

Sony: "We paid you money to spread misinformation and not talk about Xbox. Do your job"

@kazhirai: You are fighting a losing battle, even with your Phil Spencer tweet. First look at UE 5 and it is NOT Hellblade 2. The fact is that Hellblade 2 is NOT confirmed to be UE5 and the only known reveal of UE5 was the tech demo. Everything else you are claiming is unsubstantiated. Don't hate me for sticking to the facts.

@ronvalencia: Hellblade 2 was not native 4k and it only ran at 24fps.

https://www.eurogamer.net/articles/digitalfoundry-2019-senuas-saga-hellblade-2-trailer-analysis

But what if it were real-time? The raw specs of the trailer file itself are intriguing. If you look at the metadata of the video - it is mastered at just 24 frames per second and the actual resolution of the rendered frame between the cinematic black bars is 3840x1608: so a little more than 74 per cent of a 'real' 4K in spatial resolution and at 80 per cent of 30fps. All together, that is 60 per cent of the amount of pixels pushed per second compared to 4K30 - and something that's decidedly easier to render than you may expect.

The image is always 3840 wide, so the vertical resolution is a function of the aspect ratio. If the aspect ratio is 2.39:1, the vertical resolution is approximately 3840/2.39, which is about 1608.

3840 x 1608 = 6,174,720 pixels at 24 fps = 148,193,280 pixels per second

2,560 x 1,440 = 3,686,400 pixels at 30 fps = 110,592,000 pixels per second

3840x1608 is the 2.39:1 aspect ratio form of 4K, which is used in native 4K Ultra HD Blu-ray movies.

148,193,280 / 110,592,000 = 1.34, or 34% more pixels per second.
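The pixel-rate comparison above is easy to reproduce. Here is a minimal Python sketch; `pixels_per_second` is a made-up helper name, and the 4K30 baseline (3840x2160 at 30 fps) is added only to check the "60 per cent" figure from the Eurogamer quote.

```python
def pixels_per_second(width: int, height: int, fps: int) -> int:
    """Pixels rendered per second at a fixed resolution and frame rate."""
    return width * height * fps


hellblade_trailer = pixels_per_second(3840, 1608, 24)  # letterboxed 4K at 24 fps
qhd_30 = pixels_per_second(2560, 1440, 30)             # 1440p at 30 fps
uhd_30 = pixels_per_second(3840, 2160, 30)             # full 4K at 30 fps

print(f"Trailer:  {hellblade_trailer:,} px/s")
print(f"1440p30:  {qhd_30:,} px/s")
print(f"Trailer vs 1440p30: {hellblade_trailer / qhd_30:.2f}x")
print(f"Trailer vs 4K30:    {hellblade_trailer / uhd_30:.0%}")
```

Raw pixel rate only compares output resolution and frame rate. It doesn't account for per-pixel shading cost, which can differ a lot between the two demos.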

@pc_rocks:

Didn't see you cry when the 2080 Ti dropped to 1080p to run 60FPS in certain games with ray tracing..

$1,200 GPU drops to 1080p... Damn, the PS5 will be fine.

My point is simple: you downplaying this is lol-worthy when PC is going through the same with ray tracing. Hard-hitting effects have an impact, that is all.

Dropped in an actual 'game' with RT - the most demanding graphics technique in existence - at 60 FPS. This 'demo' didn't even use RT at 30 FPS. ROFLMAO!

Oh, and it got beaten by an almost year-old laptop.

Does the 5700 XT do RT and accelerate ML workloads?

Blackbargate part 2!!! How exciting. I like how Lems attacked Cows for The Order using black bars, but they’re defending them here. This place is amazing.

For real though. A GPU has an easier time rendering graphics with those black bars because there are far fewer pixels involved. Only fanboys would try to sell that as “native” resolution.

@pc_rocks: Except if you read the translation it wasn't beat by a year old laptop. The laptop dropped to 1080p.

Not surprising, since we have benchmarks showing a 2080 Max-Q is weaker than GPUs that are weaker than what's in the PS5.

Where's the actual quote? And even if it's true, what's the lowest resolution the PS5 demo dropped to? It was variable up to 1440p, so?

Yeah, I'll take the actual engineer's word over your DC and stupid unrelated assumptions.

No, but the PS5's GPU does, and the UE5 demo was also not using RT. So how is a 2080 Max-Q beating a PS5 when it can't even beat a 5700 XT?

If you read the entire translation though on reddit apparently the flying scene had to be reworked and it dropped to 1080p. So I don't think the original guy was even saying it was outperforming the PS5. It ran at 1440p 40fps at the beginning of the demo.

Jensen Huang wasn't wrong when he said these notebooks are more powerful than next-gen consoles.

https://gpu.userbenchmark.com/Compare/Nvidia-RTX-2080-Mobile-Max-Q-vs-AMD-RX-5700-XT/m704710vs4045

I would say he was wrong.

SO, the laptop is indeed more powerful than 5700XT and as per the Epic engineer also PS5, nice. I believe the engineer over your hopeful assumptions. See ya!
