Lol, the 20xx cards are becoming a bigger and bigger joke at this point!

It doesn't matter because the alternative is more like a dead jester buried in a septic tank.


@pc_rocks: Well, a German company run by Turks.

Yerli was born in Germany to Turkish immigrants from Giresun. His brothers, Faruk and Avni, joined Crytek in 2000 and 2001.

@Jag85: It seems like you just can't beat German engineering. :D

https://wccftech.com/gran-turismo-polyphony-raytracing/

Sony is also working on real-time ray-tracing tech for GT.

http://raytracey.blogspot.com/2008/08/ruby-voxels-and-ray-tracing.html

This example is a voxel-based ray-tracing/raster hybrid running on Radeon HD 4870 CrossFire (combined 2.4 TFLOPS FP32, two geometry-raster engines, 32 ROPs at 750 MHz, 230 GB/s video memory bandwidth, 1 GB VRAM) in a system with 16 GB of memory. The demo's 3D engine was designed around voxel-based ray tracing from the ground up.

For comparison, a stock Vega 56 has 10.56 TFLOPS FP32, 21 TFLOPS FP16, four geometry-raster engines and 64 ROPs at up to 1474 MHz, 409 GB/s video memory bandwidth, 4 MB L2 cache and 8 GB VRAM.
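The TFLOPS figures above follow from the usual peak-throughput formula (shaders x 2 FLOPs per fused multiply-add x clock). A minimal sketch, assuming the public spec-sheet shader counts of 800 stream processors per HD 4870 and 3584 for Vega 56; those counts and the function name `peak_fp32_tflops` are not from the thread:

```python
def peak_fp32_tflops(shader_count: int, clock_mhz: float) -> float:
    """Peak FP32 throughput, assuming one FMA (2 FLOPs) per shader per clock."""
    return 2 * shader_count * clock_mhz * 1e6 / 1e12


# Shader counts are spec-sheet values assumed for illustration.
hd4870_crossfire = 2 * peak_fp32_tflops(800, 750)   # two cards in CrossFire
vega56 = peak_fp32_tflops(3584, 1474)               # at the 1474 MHz boost clock

print(f"HD 4870 CrossFire: {hd4870_crossfire:.2f} TFLOPS FP32")
print(f"Vega 56:           {vega56:.2f} TFLOPS FP32")
```

These are theoretical peaks only; sustained throughput depends on memory bandwidth and how well the workload fits the hardware.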

Ray tracing on GPUs usually goes through the compute shader/TMU read-write path, which minimizes AMD's raster ROPs weakness and so exposes the AMD GPU's raw TFLOPS power to memory bandwidth.

NVIDIA Turing GPUs also have raw TFLOPS power.

Nobody ever said you can't do ray tracing without RTX tho

Yes, but Nvidia was hyping up the RT cores and saying that regular GPUs struggle with RT. Their RTX implementation runs like shit and the RT cores are a bottleneck. Now we have regular GPUs running real-time ray tracing well. RTX is a joke. Overpriced garbage.

For the record, I bought my RTX 2080 Ti for the extra rasterization performance and not for ray tracing. I knew that RTX was shit and I wasn't happy having to pay a premium because of it.

RTX 2080 Ti also has raw workstation TFLOPS power on top of industry-leading rasterization performance. TU102's compute is based on Titan V's workstation compute strength with a revised cache design.

RTX 2080 Ti is effectively the superior near-GCN compute architecture, i.e. the TU102 SM's ratio of register storage to CUDA cores is similar to the GCN CU's ratio of register storage to stream processors. TU102's workstation compute SM design is different from GP102's end-consumer SM design.

NVIDIA's GCN compute clone started with GP100 and evolved into GV100 and TU102.

Turing has compute features comparable to the Vega series GPUs.

Stock RTX 2080 has raw TFLOPS power and compute features similar to Vega 56, with rasterization power similar to Vega II. These are near-workstation GPGPUs, hence the higher asking price. An overclocked RTX 2080 has higher TFLOPS than a stock RTX 2080.

So basically, whatever happens, you're good to go with Nvidia. Got it.

Just kidding, I'm not in any rush. Will wait and see.

@xantufrog:

@ronvalencia:

>Working on ray tracing

>2008 article

Piss off ron.

Please ban this recalcitrant.

https://www.eurogamer.net/articles/digitalfoundry-the-making-of-killzone-shadow-fall

The ray-traced reflection system

"What we do on-screen for every pixel we run a proper ray-tracing - or ray-marching - step. We find a reflection vector, we look at the surface roughness and if you have a very rough surface, that means that your reflection is very fuzzy in that case," he explains.

"So what we do is find a reflection for every pixel on screen, we find a reflection vector that goes into the screen and then basically start stepping every second pixel until we find something that's a hit. It's a 2.5D ray-trace... We can compute a rough approximation of where the vector would go and we can find pixels on-screen that represent that surface. This is all integrated into our lighting model."

"It's difficult to see where one system stops and another begins. We have pre-baked cube maps and we have real-time ray-traced reflections and then we have reflecting light sources and they all blend together in the same scene," adds Michiel van der Leeuw.

Guerrilla Games is using screen space to limit its ray-tracing budget. Sony is already working on ray tracing with low compute power hardware. Now give Sony's programmer teams Vega 56-level compute hardware.
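To make the quoted "2.5D ray-trace" concrete, here is a minimal sketch of screen-space ray marching against a depth buffer. It is not Guerrilla's implementation; the function name `march_screen_space`, the `depth_step` parameter and the simple "ray depth passes scene depth" hit rule are assumptions for illustration. The default `stride=2` mirrors the "stepping every second pixel" in the quote.

```python
def march_screen_space(depth, start, direction, start_depth, depth_step,
                       stride=2, max_steps=64):
    """March a ray across a depth buffer; return the (x, y) pixel hit, or None.

    depth       -- 2D list, depth[y][x] is the visible surface depth per pixel
    start       -- (x, y) pixel the reflection ray starts from
    direction   -- (dx, dy) screen-space step per pixel of travel
    depth_step  -- how much the ray's own depth grows per pixel of travel
    """
    height, width = len(depth), len(depth[0])
    x, y = start
    dx, dy = direction
    ray_depth = start_depth
    for _ in range(max_steps):
        x += dx * stride
        y += dy * stride
        ray_depth += depth_step * stride
        px, py = int(round(x)), int(round(y))
        if not (0 <= px < width and 0 <= py < height):
            return None  # ray left the screen: no on-screen data to reflect
        if ray_depth >= depth[py][px]:
            return (px, py)  # ray went behind visible geometry: count as a hit
    return None


# Toy depth buffer: far background (10.0) with a nearer wall (3.0) from x = 8.
depth_buffer = [[3.0 if x >= 8 else 10.0 for x in range(16)] for _ in range(4)]
hit = march_screen_space(depth_buffer, (0, 1), (1, 0), 1.0, 0.25)
miss = march_screen_space(depth_buffer, (0, 1), (-1, 0), 1.0, 0.25)
```

The `None` case is where a renderer would fall back to something else, such as the pre-baked cube maps mentioned in the quote, because anything off-screen simply is not in the depth buffer.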

Crytek disabled SSR (Screen Space Reflection) to demo its ray-traced reflections technology running on Vega 56.

Try again.

>2013

"is working"

Using progressive tense for articles from 5-10 years ago.

Are you even trying?

Piss off, you're wrong.

I'd rather be wrong than be ron.

You're seriously using articles from a decade ago to prove a point. Know what was happening 10 years ago? Probably not, you were programmed in 2008 according to your join date.

Your argument is bull$hit without factoring in the technical side. The workstations involved had 16 GB of memory with RAID hard drives feeding the voxel-based ray-tracing 3D engine and GPU card.

http://raytracey.blogspot.com/2008/08/ruby-demo.html

In this video, Urbach says that the Ruby demo is rendered with voxel ray tracing. Otoy can also dynamically relight the scene.

One of the things that's very exciting about the latest generation of hardware, coming from AMD, is that we can now write general purpose code, using CAL, that does wavelet compression

AMD's CAL = Compute Abstraction Layer, which is a low-level access layer. It is the precursor to OpenCL and to Compute Shader 5.0 in Microsoft's DirectX 11.

CryEngine 5.5 uses a Sparse Voxel Octree (SVO) based acceleration structure.

https://www.dsogaming.com/news/cryengine-5-5-is-now-available-featuring-svogi-improvements-and-more-than-1000-fixes/

SVOGI Improvements: SVOGI, the feature which allows developers to create scenes with realistic ambient tonality, now includes a major advancement with SVO Ray-traced Shadows offering an alternative to using cached shadow maps in scenes.

Turing RT cores are designed for a BVH search-tree acceleration structure. Turing RT cores follow PowerVR's BVH search-tree accelerator model.

Crytek evolved CryEngine 5.5 with Sparse Voxel Octree Total Illumination and demoed ray-traced reflections without SSR (Screen Space Reflection) enabled.

Neon Noir was developed on a bespoke version of CRYENGINE 5.5, and the experimental ray tracing feature based on CRYENGINE’s Total Illumination used to create the demo is both API and hardware agnostic

Radeon Rays 2.0 uses a BVH search-tree acceleration structure running on compute shaders.
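Both BVHs and octrees speed up ray tracing the same basic way: test the ray against a box, and skip everything inside the box if it misses. A minimal sketch of that idea in plain Python, using the standard slab test for axis-aligned boxes; the dict-based node layout and the names `ray_hits_box` and `traverse` are hypothetical and are not how Radeon Rays, CryEngine or the RT cores represent their trees:

```python
import math


def ray_hits_box(origin, direction, box_min, box_max):
    """Slab test: does the ray origin + t * direction (t >= 0) cross the box?"""
    t_near, t_far = 0.0, math.inf
    for o, d, lo, hi in zip(origin, direction, box_min, box_max):
        if d == 0.0:
            if o < lo or o > hi:
                return False  # parallel to this pair of planes and outside them
            continue
        t0, t1 = (lo - o) / d, (hi - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        t_near, t_far = max(t_near, t0), min(t_far, t1)
        if t_near > t_far:
            return False  # the per-axis intervals do not overlap: a miss
    return True


def traverse(root, origin, direction):
    """Return names of leaves whose boxes the ray crosses, pruning missed subtrees."""
    hits, stack = [], [root]
    while stack:
        node = stack.pop()
        if not ray_hits_box(origin, direction, node["min"], node["max"]):
            continue  # whole subtree skipped with a single box test
        if "children" in node:
            stack.extend(node["children"])
        else:
            hits.append(node["name"])
    return hits


scene = {
    "min": (0.0, 0.0, 0.0), "max": (10.0, 10.0, 10.0),
    "children": [
        {"name": "A", "min": (0.0, 0.0, 0.0), "max": (1.0, 1.0, 1.0)},
        {"name": "B", "min": (4.0, 0.0, 0.0), "max": (5.0, 1.0, 1.0)},
        {"name": "C", "min": (4.0, 4.0, 4.0), "max": (5.0, 5.0, 5.0)},
    ],
}
crossed = sorted(traverse(scene, (-1.0, 0.5, 0.5), (1.0, 0.0, 0.0)))
```

Dedicated hardware like the RT cores runs this kind of box-and-triangle testing in fixed-function units, while a compute-shader path does it with ordinary shader instructions.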

Screen Space Reflections or Realtime Local Reflections aren't ray-tracing. It's right there in the name 'Screen Space' and Crysis 2 was the first to implement that.

Screen Space Reflections have been disabled in Crytek's latest demo.

http://cgicoffee.com/blog/2018/03/what-is-nvidia-rtx-directx-dxr

Reality check. What is NVIDIA RTX Technology? What is DirectX DXR? Here's what they can and cannot do

...

Need proof? No problem, just render any scene with 1 (one) sample per pixel with your favorite renderer and awe at the amazing render speed! Now process a series of renders with a temporal denoiser and you've got your RTX/DXR.

To limit samples per pixel, Nvidia cheats its real-time ray tracing with denoised pixel reconstruction.
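The "1 sample per pixel plus temporal denoiser" idea can be sketched in a few lines. This is a toy model, not Nvidia's denoiser: each "frame" is a random 0-or-1 sample per pixel, and the history buffer is a plain exponential moving average. The names `accumulate` and `noisy_frame` and the `alpha` blend weight are assumptions for illustration; real denoisers also reproject for motion and filter spatially.

```python
import random


def accumulate(history, sample, alpha=0.1):
    """Blend a new noisy frame into the running history (exponential moving average)."""
    return [h + alpha * (s - h) for h, s in zip(history, sample)]


def noisy_frame(true_value, pixels, rng):
    """Stand-in for a 1-sample-per-pixel render: each pixel is fully lit or fully dark."""
    return [1.0 if rng.random() < true_value else 0.0 for _ in range(pixels)]


rng = random.Random(0)          # fixed seed so the run is repeatable
true_value, pixels = 0.3, 256   # every pixel should converge towards 0.3

history = noisy_frame(true_value, pixels, rng)
for _ in range(200):
    history = accumulate(history, noisy_frame(true_value, pixels, rng))

mean_error = sum(abs(h - true_value) for h in history) / pixels
print(f"mean per-pixel error after accumulation: {mean_error:.3f}")
```

A single 1 spp frame here is off by 0.3 or 0.7 at every pixel, so the accumulated result is far closer to the true value. The cost is lag: a lower `alpha` gives a cleaner image but takes longer to react when the scene changes.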

I'd rather wait for live gameplay benchmarks before making any conclusions. If it turns out to be this effective then that's great news!

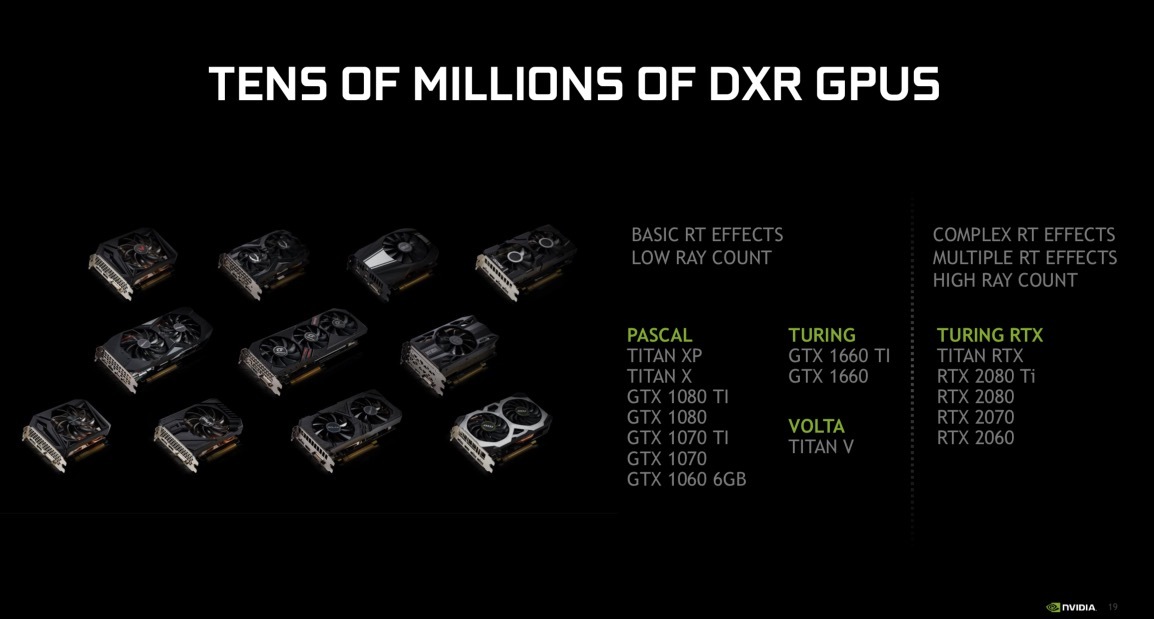

https://www.anandtech.com/show/14100/nvidia-april-driver-to-support-ray-tracing-on-pascal-gpus-dxr-support-in-unity-and-unreal

Nvidia's April driver to support DXR (DirectX ray-tracing) on Pascal GPUs.

@BassMan:

https://www.gizmodo.com.au/2019/03/ray-tracing-is-coming-to-a-whole-lot-of-gpus/

How good is the performance?

Tracing rays of light for reflections is a lot less taxing on a GPU than tracing every ray of light to create both shadows and reflections. Which means your GTX 1060 will have a lot less of a struggle handling ray tracing on Battlefield V than Metro Exodus. “It’s going to be very game dependent,” Nvidia told a group of reporters, including Gizmodo.

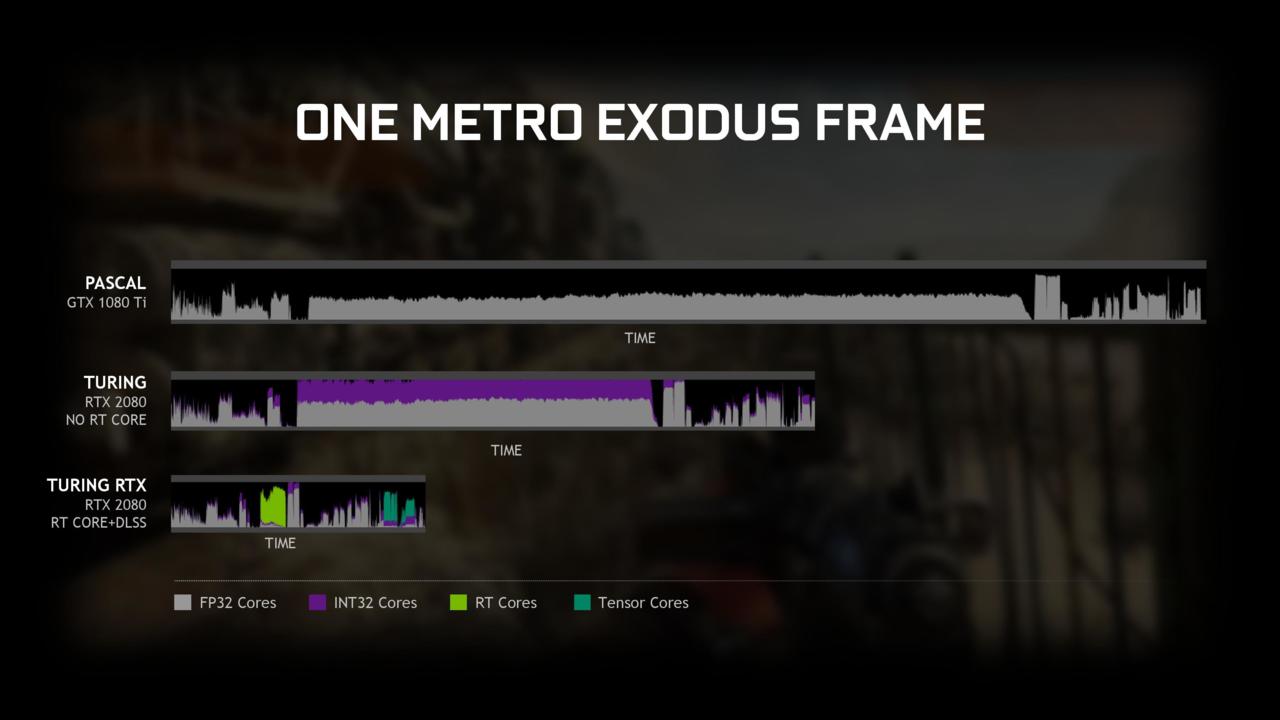

But how much of a struggle? That remains to be seen for some of the less powerful GPUs Nvidia ships. Nvidia only divulged numbers for the 1080Ti. In Battlefield V, a 1080Ti at 1,440p resolution supported ray tracing while churning out 50fps. In Metro Exodus it plummeted to under 20fps. Nvidia claims a 2080 at the same resolution did over 70fps in Battlefield V and over 60fps in Metro Exodus—a far sight better than the 1080Ti. So expect even less stellar performance from the 1070 or 1060.

@NoodleFighter: Reminds me of an episode from an old '70s British comedy show called Mind Your Language, where a German woman says something like, "German technology is za best!" And then a Japanese guy stands up and says something like, "No, Japan technology is the besto!"

Battlefield V DXR's BVH search-tree ray-traced reflections have the GTX 1080 Ti running at 50 fps at 2560x1440, which yields 184,320,000 pixels per second. The GTX 1080 Ti has 12.9 TFLOPS FP32 peak at 1800 MHz (max boost) or 12.18 TFLOPS FP32 at 1700 MHz.

Neon Noir's SVO ray-traced reflections have Vega 56 running at 30 fps at 3840x2160, which yields 248,832,000 pixels per second. Vega 56 has 10.56 TFLOPS FP32 peak.
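The pixel-rate figures above are just width x height x fps. A short sketch that reproduces them and divides the quoted peak TFLOPS by the pixel rate; the helper names are made up for illustration, and the FLOPs-per-pixel number is only a ceiling on the available budget, not a measurement of what either demo actually uses:

```python
def pixels_per_second(width: int, height: int, fps: int) -> int:
    """Pixels shaded per second at a given resolution and frame rate."""
    return width * height * fps


def peak_flops_per_pixel(peak_tflops: float, pixel_rate: int) -> float:
    """Upper bound on FP32 operations available per shaded pixel."""
    return peak_tflops * 1e12 / pixel_rate


bfv_1080ti = pixels_per_second(2560, 1440, 50)        # Battlefield V, GTX 1080 Ti
neon_noir_vega56 = pixels_per_second(3840, 2160, 30)  # Neon Noir, Vega 56

budget_1080ti = peak_flops_per_pixel(12.9, bfv_1080ti)
budget_vega56 = peak_flops_per_pixel(10.56, neon_noir_vega56)

print(f"BFV / 1080 Ti:       {bfv_1080ti:,} px/s, {budget_1080ti:,.0f} FLOPs/px peak")
print(f"Neon Noir / Vega 56: {neon_noir_vega56:,} px/s, {budget_vega56:,.0f} FLOPs/px peak")
```

Keep in mind the two numbers come from different scenes, different effects and different acceleration structures, so this is a rough comparison and not a like-for-like benchmark.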

From https://www.anandtech.com/show/14100/nvidia-april-driver-to-support-ray-tracing-on-pascal-gpus-dxr-support-in-unity-and-unreal

The Pascal architecture has problems with concurrent integer and floating-point operations.

Under the hood, NVIDIA is implementing support for DXR via compute shaders run on the CUDA cores. In this area the recent GeForce GTX 16 series cards, which are based on the Turing architecture sans RTX hardware, have a small leg up. Turing includes separate INT32 cores (rather than tying them to the FP32 cores), so like other compute shader workloads on these cards, it's possible to pick up some performance by simultaneously executing FP32 and INT32 instructions. It won't make up for the lack of RTX hardware, but it at least gives the recent cards an extra push. Otherwise, Pascal cards will be the slowest in this respect, as their compute shader-based path has the highest overhead of all of these solutions.

DXR results vary with different CUDA and RT core behaviors.

The Vega architecture has no problems with mixed FP32 and INT32 workloads.

On compute workloads:

Vega 56's compute has 4 MB L2 cache

GTX 1080 Ti's compute has ~3 MB L2 cache

Compute workloads may disable NVIDIA's delta color compression advantage.

It would be interesting to see Vega 56/64's fine-wine in action.

So if RT and RTX 20XX cards are so shit, don't work and are overpriced, why are they still selling in droves? Even some of the people posting here trashing them have paid big money for them, even though they could have bought a GTX or even an AMD card. It does not make sense. If what is being claimed here is true, why have these cards not seriously dropped in price? Again, it does not make sense. I like to partly decide things on how the market reacts, and as of now there is no evidence that RTX cards are substandard, do not work or are bogus.
