@ronvalencia:

...And how did the Xbox One X, a premium-priced mid-generation console, achieve 6 TFLOPS at a 150w TDP?... They used an older GCN 2.0 architecture for price and yields, and ran slower clocks than an RX 480, which can boost to 1250-1300MHz and get close to 6 TFLOPS at a 150w TDP with 4 fewer CUs.

The X1X does nothing special, there is no magic behind it at all. Its TDP is in line with its CU count and core clocks for GCN. If an RX 580 were clocked at 1.17GHz like the X1X and had the same CU count, it would more than likely land in the same 150-175w TDP range.

You act like the X1X turned a 250w GCN chip into a 150w magic powerhouse. It didn't... Its TDP is a direct result of clocks and CU count on GCN. Move the CU count up and the clock speed has to come down, and vice versa, to hit the same TDP.
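To put rough numbers on that CU-vs-clock trade-off, here is a quick Python sketch. It assumes the usual GCN peak-rate formula (64 lanes per CU, 2 FLOPs per lane per clock from a fused multiply-add); the function name is made up for illustration. It only covers throughput and says nothing about actual power draw.

```python
# Peak FP32 rate on GCN (assumed formula): CUs * 64 lanes * 2 FLOPs per FMA * clock.
LANES_PER_CU = 64
FLOPS_PER_LANE_PER_CLOCK = 2  # one fused multiply-add counts as 2 FLOPs


def clock_mhz_for_tflops(target_tflops: float, cu_count: int) -> float:
    """Clock in MHz that cu_count GCN CUs need to reach target_tflops (hypothetical helper)."""
    flops_per_clock = cu_count * LANES_PER_CU * FLOPS_PER_LANE_PER_CLOCK
    return target_tflops * 1e12 / flops_per_clock / 1e6


for cus in (36, 40, 44):
    mhz = clock_mhz_for_tflops(6.0, cus)
    print(f"{cus} CUs need about {mhz:.0f} MHz for 6 TFLOPS")
# 36 CUs need about 1302 MHz for 6 TFLOPS
# 40 CUs need about 1172 MHz for 6 TFLOPS
# 44 CUs need about 1065 MHz for 6 TFLOPS
```

So the same 6 TFLOPS target needs roughly 130MHz less clock with 40 CUs than with 36, which is the trade-off being described.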

NOW:

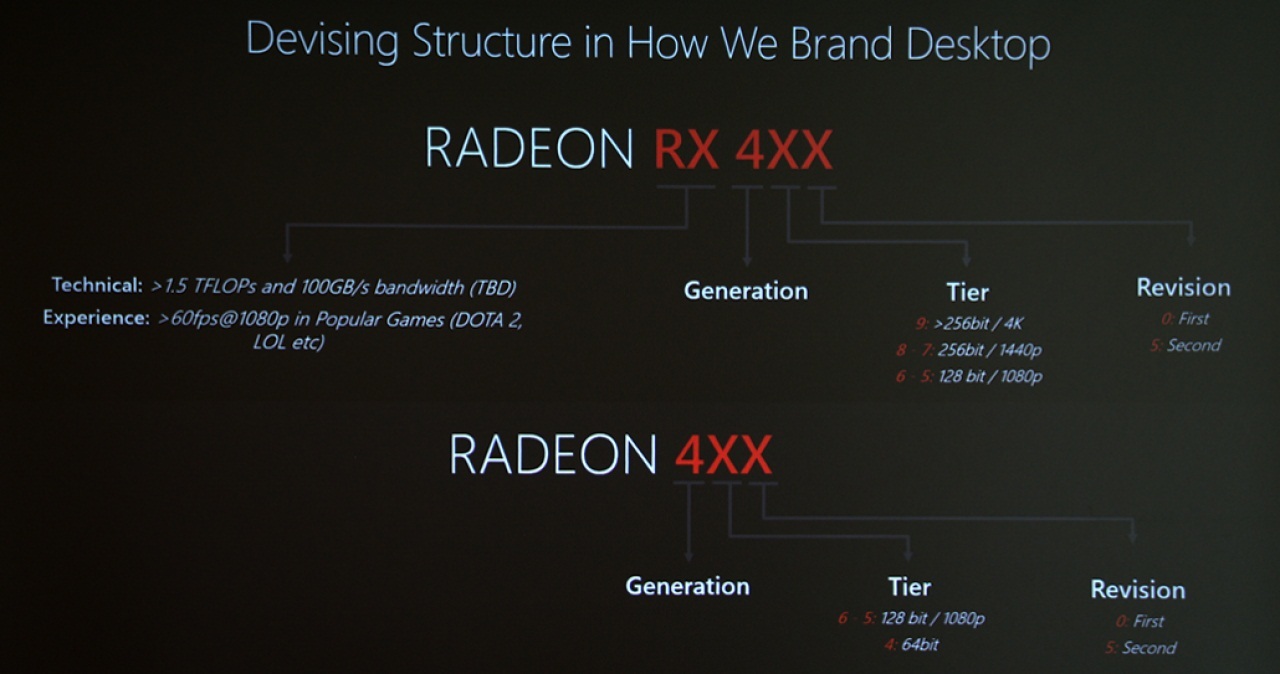

Rumours/leaks PS5:

- Navi 10 Lite

- 1.8GHz clock speeds

Rumours/leaks Navi:

- Navi 10 48CU 160w

- Navi 10 52CU 175w

- Navi 10 56CU 190w

Now... Which one will be the Navi 10 Lite?

If you think AMD will be releasing a 190w 56 CU GPU on PC and then a 56 CU GPU in a console at 150w with clock speeds at 1.8GHz, then you're even more of a gullible fool than I thought.

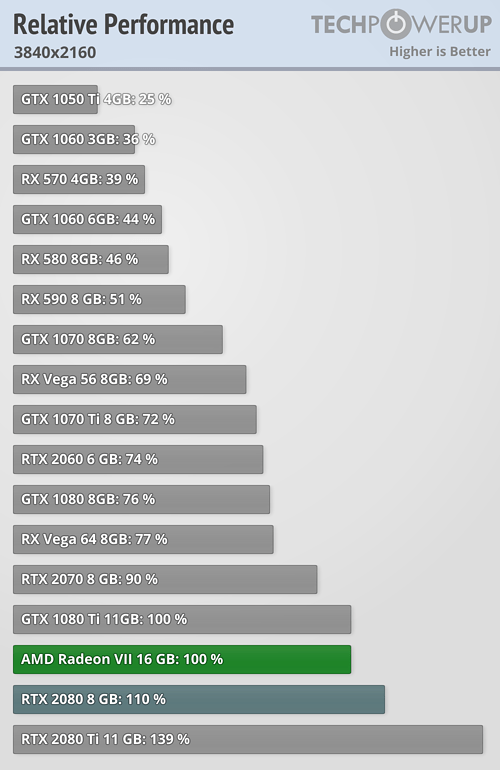

Another leak/rumour is that Navi won't hit Vega 20 clocks... A standard Radeon VII boosts to 1750MHz and can hit 1.9-2GHz with overclocking.

Again, the rumours/leaks for Navi HEAVILY conflict with the bullshit fanboy leaks of PS5 specs when it comes to the GPU.

56 CU at 1.8GHz on GCN at 150w?...

Sh**, man, based on the rumoured TDP of Navi 10 and the 1.8GHz PS5 rumour, they would need to take a 48 CU Navi 10 and cut 4 CUs to get to 150w, because there is no way that 48 CU 160w chip even hits 1.8GHz on desktop without dual fans, and even then it won't go under 70°C.
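That CU cut can be sanity-checked with a naive straight-line fit through the three rumoured Navi 10 points listed earlier. This is only a sketch: the inputs are rumours, the linear model is an assumption, and the helper names are invented for illustration. It ignores clocks, voltage and binning entirely.

```python
# Rumoured Navi 10 figures quoted in this thread: (CU count, watts).
RUMOURED = [(48, 160), (52, 175), (56, 190)]


def watts_per_cu(points):
    """Slope of the straight line through the first and last rumoured points."""
    (cu_lo, w_lo), (cu_hi, w_hi) = points[0], points[-1]
    return (w_hi - w_lo) / (cu_hi - cu_lo)


def extrapolate_watts(cu_count, points=RUMOURED):
    """Naive linear extrapolation (assumption: power scales linearly with CU count)."""
    cu_ref, w_ref = points[0]
    return w_ref + watts_per_cu(points) * (cu_count - cu_ref)


print(watts_per_cu(RUMOURED))   # 3.75
print(extrapolate_watts(44))    # 145.0
```

On those rumoured numbers each CU is worth about 3.75w, so a 44 CU cut of the 48 CU part would land around 145w at the same clocks, which is in the 150w ballpark.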

Xbox One X's 44 CU GPU has all the Polaris gfx8 compute updates plus extras, e.g.

1. A variable shader rate-like feature, which doesn't exist in Polaris/Hawaii/Tonga/Fury

2. ROPS with a 2 MB render cache design, which doesn't exist in Polaris/Hawaii/Tonga/Fury

https://gpucuriosity.wordpress.com/2017/09/10/xbox-one-xs-render-backend-2mb-render-cache-size-advantage-over-the-older-gcns/

The X1X GPU has Polaris's 2 MB L2 cache for TMUs plus a 2 MB render cache for ROPS, hence the X1X's GPU sits between Polaris and Vega when it comes to cache design. The X1X GPU's total L2 + render cache storage is 4 MB.

Vega 56/64/VII has a unified 4 MB L2 cache for both ROPS and TMUs.

Hawaii (GCN 2.0) has a 1 MB L2 cache for TMUs with a tiny render cache (kilobytes) for ROPS.

Polaris 10/20/30 has a 2 MB L2 cache with a tiny render cache (kilobytes) for ROPS.

@Grey_Eyed_Elf said:

The X1X does nothing special, there is no magic behind it at all. Its TDP is in line with its CU count and core clocks for GCN.

Bullshit, Polaris doesn't have X1X's 2MB render cache!

The Xbox One X dev kit has the full Scorpio GPU with 44 active CUs, giving 6.6 TFLOPS at 1172MHz (base clock, not boost mode). The retail game console market doesn't allow for the PC-style "XT" and "Pro" SKU split.
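For reference, the 6.6 TFLOPS figure follows from the standard GCN peak-rate arithmetic. A small sketch, assuming 64 lanes per CU and 2 FLOPs per lane per clock; the 40 CU retail configuration is the commonly cited retail figure rather than something stated in this post, and the function name is illustrative.

```python
def gcn_peak_tflops(cu_count: int, clock_mhz: float) -> float:
    """Peak FP32 TFLOPS under the assumed GCN formula: CUs * 64 lanes * 2 FLOPs * clock."""
    return cu_count * 64 * 2 * clock_mhz * 1e6 / 1e12


dev_kit = gcn_peak_tflops(44, 1172)  # full Scorpio die, as in the dev kit
retail = gcn_peak_tflops(40, 1172)   # assumed retail config with 4 CUs disabled

print(f"dev kit: {dev_kit:.2f} TFLOPS")  # dev kit: 6.60 TFLOPS
print(f"retail:  {retail:.2f} TFLOPS")   # retail:  6.00 TFLOPS
```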

If a game console were built around the Polaris 10/20/30 (RX 480/RX 580/RX 590 respectively) design, the console would have 32 active CUs with 4 CUs disabled for yield.

A Polaris GPU with a wider than 256-bit bus was hinted at, i.e. RX 490. This GPU configuration wasn't released for the PC market, but it was used for Xbox One X.

Try again.

For RTX 2080 Ti (not factoring in Tensor and RT cores) vs GTX 1080 Ti, NVIDIA did the following:

- doubled the unified L2 cache from 3 MB (full GP102) to 6 MB (full TU102); as shipped, GTX 1080 Ti has 2.75 MB and RTX 2080 Ti has 5.5 MB

- added rapid packed math features (from Volta)

- added discrete integer CUDA units

- added a variable rate shading feature

- added async compute scheduler with multiple concurrent context support (from Volta)

- added higher clock speed

- added more CUDA FP cores

- improved register storage vs CUDA core count ratio (GCN-like, from Volta)

- improved memory compression

- etc.

RTX 2080 Ti is an upgraded GTX 1080 Ti, which is itself an upgraded GTX 980 Ti. Six GPC units with 88 to 96 ROPS (96 on GTX 980 Ti, 88 on GTX 1080 Ti and RTX 2080 Ti) are the common design from GTX 980 Ti to RTX 2080 Ti, and it's a good foundation for higher TFLOPS scaling.

The main difference between AMD and NVIDIA is a foundation of six geometry-raster engines with 96 ROPS (NVIDIA) vs four geometry-raster engines with 64 ROPS (AMD).

@Grey_Eyed_Elf said:

If you think AMD will be releasing a 190w 56 CU GPU on PC and then a 56 CU GPU in a console at 150w with clock speeds at 1.8GHz, then you're even more of a gullible fool than I thought.

Don't put words into my mouth. I purposely left out the final clock speed for 7nm-era game consoles.
