If AAA devs focused on 720p, how much better might the "graphics" be?

Wow, that looks great. Luke looks great; the woman still has a more "gamey" face though. If everyone wears a helmet, it really helps out the visuals. I'm fooled by the Darth Maul model: looks good!

Just a few more polygons on the ferns on the ground and a bit more on the fire effects. But still, WOW.

Is this running in real time on Scorpio?

The advert is for Playstation 4.

http://www.windowscentral.com/forza-motorsport-7-4k-project-scorpio-xbox

Scorpio has 4K Battlefront 2. Scorpio's extra memory may enable it to run with the PC version's higher-quality textures.

EA still has problems with "eye candy" women e.g. refer to Mass Effect Andromeda. In real life, an enemy operative could be a Russian hot chick or some "China dolls"..

Ah. We'll see when it comes out. Looking forward to some tech analysis, even though most of the jargon goes over my head.

Hmm.. I meant more that the girl's eyes look off, like weird for some reason, not that she's ugly. Perhaps it's just the eyelashes? IDK

Even though it's for the PS4/PS4 channel, IDK if they can still use the high-end PC version since it's just a commercial. I have a feeling the retail PS4 version will have way more aliasing than in that trailer. Good if not.

... this sounds familiar... ... Mass Effect Andromeda.

For single player story plot, Battlefront 2's Corvette size Imperial hero ship sounds like Mass Effect's Normandy with Star Wars skin.

Battlefront 2's Imperial story line sounds like Star Wars TOR MMO's Imperial story line...

@j2zon2591: You need to educate yourself on what a rendering pipeline is. What shaders are. How geometry is rendered in a 3D space, lit, and shaded. You're talking about how to power this rendering, not the actual rendering done.

Literally everything you just said was in complete ignorance as to how graphics rendering is actually computed on the hardware you speak of. You simply do not understand how you take a 3D world and render it. That's why this doesn't work. You can't just throw hardware and resolutions out like you are. That's not how the actual code runs.

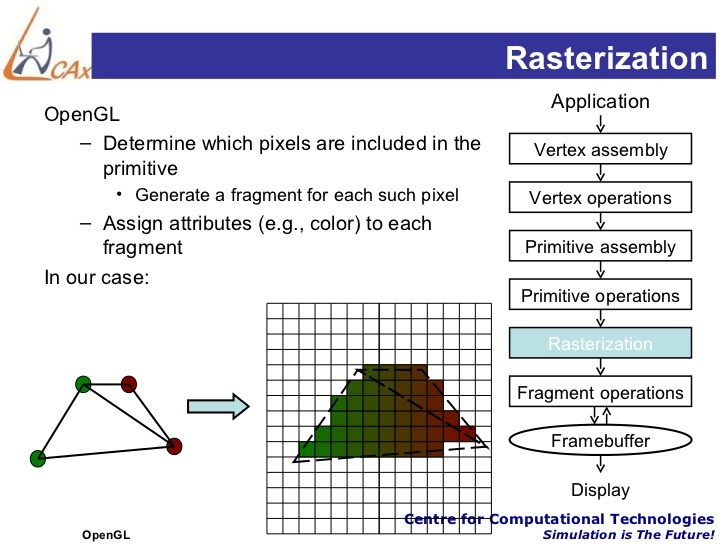

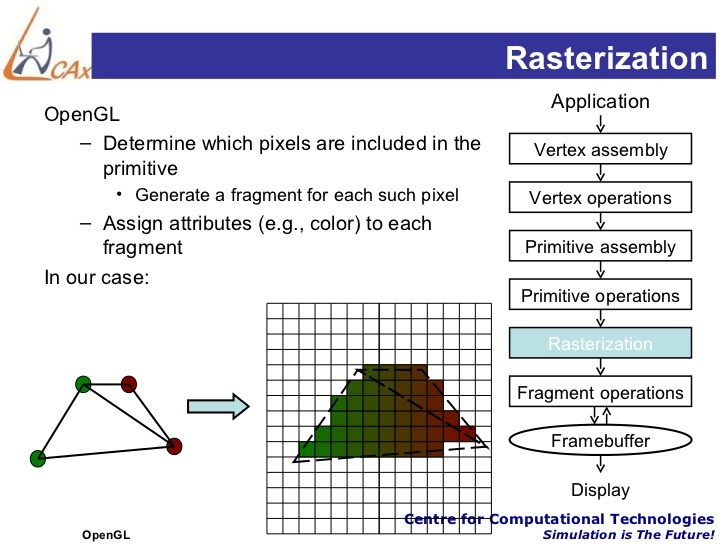

For example, do you know what rasterization means? And I don't just mean googling the definition.

I took a single graphics programming class in college as part of my computer science major. I'm not an expert by any means. However, I do know enough to tell you that you can't just "focus" on a lower resolution. Graphics do not work that way. It would take me much longer than Gamespot posts allow to convey that idea.

Give me the gist of it and I'd really appreciate it.

So far this only makes me think dropping resolution allows more effects, as shown by how PC works, at least at face value, and especially by what I experienced messing with Crysis way back when.

I'll try looking up rasterization later, at least the concept in 3D.

On a sidenote, I am immensely envious of people who take Computer Science and Engineering as I am horrid at math and logic ever since. Congrats on the degree btw!

I should really post in beyond3D soon but I don't feel like making another account xD

Have my babies, Ron! JK

Thanks. I'll watch the vid when I get back home.

YT eats our mobile plan and I'm gonna get in trouble if I eat too much of that :D

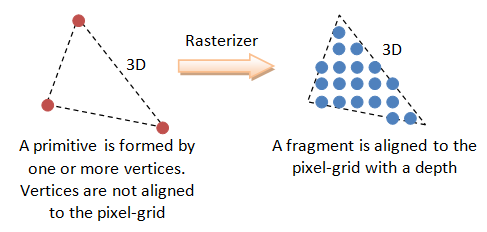

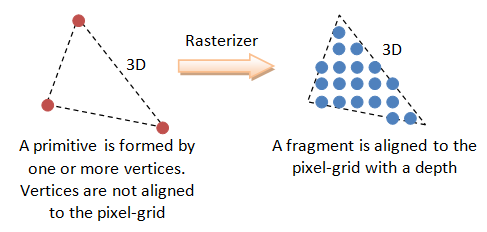

Rasterization step...

Geometry is aligned to the frame buffer's pixel grid, with depth. After this step, shading and texturing occur.

More advanced 3D engines use texture data + tessellation to boost geometry data prior to the rasterization step.

Rasterization is done by the GPU's fixed-function hardware, i.e. one of the "heart and soul" parts that separates a GPU from a DSP (e.g. CELL's SPUs).
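To make the rasterization step a bit more concrete, here is a minimal software sketch in Python. It is only an illustration of the idea (snap a triangle to the pixel grid, keep the nearest depth per pixel), not how any particular GPU or engine does it. The function names `edge` and `rasterize`, the pixel-centre sampling, and the "smaller z is closer" convention are all assumptions made for this example.

```python
def edge(ax, ay, bx, by, px, py):
    """Signed area test: the sign tells which side of edge a->b the point is on."""
    return (bx - ax) * (py - ay) - (by - ay) * (px - ax)


def rasterize(tri, width, height, depth, color, shade):
    """Snap one triangle onto the pixel grid, keeping the nearest depth per pixel.

    tri is three (x, y, z) vertices already in screen space. depth and color
    are height x width lists of lists and are modified in place.
    Returns the number of pixels this triangle ended up writing.
    """
    (x0, y0, z0), (x1, y1, z1), (x2, y2, z2) = tri
    area = edge(x0, y0, x1, y1, x2, y2)
    if area == 0:
        return 0  # degenerate triangle covers no pixels
    covered = 0
    for y in range(height):
        for x in range(width):
            px, py = x + 0.5, y + 0.5  # sample at the pixel centre
            # Barycentric weights; dividing by area makes winding irrelevant.
            w0 = edge(x1, y1, x2, y2, px, py) / area
            w1 = edge(x2, y2, x0, y0, px, py) / area
            w2 = edge(x0, y0, x1, y1, px, py) / area
            if w0 < 0 or w1 < 0 or w2 < 0:
                continue  # pixel centre is outside the triangle
            z = w0 * z0 + w1 * z1 + w2 * z2  # interpolated depth
            if z < depth[y][x]:  # depth test (assumed: smaller z is closer)
                depth[y][x] = z
                color[y][x] = shade  # shading/texturing would happen here
                covered += 1
    return covered
```

Note that the loop runs once per pixel, so the work done after this step scales with the number of pixels in the frame buffer, whatever the triangle count was going in.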

Having played Shadow Warrior 2 on the Alienware Alpha R2 (R9 M470X model), I would say that the graphical gain might not amount to much, but you'll definitely get a sufficient gain in frames per second, which is especially important for platformers, fighting games and first person shooters.

AMD Radeon R9 M470X is based on the older 28 nm Bonaire chip. Read https://www.notebookcheck.net/AMD-Radeon-R9-M470X.166342.0.html

AMD Radeon R9 M470X has 1.97 TFLOPS.

Thanks again, Ron! I watched the vid once and read a few bits about rasterization. Your slides were very helpful.

Now I have a much better understanding about Rasterization which my uncle mentioned almost a decade ago.

Anyway, I am still not 100% sure, but I think I do somewhat understand that compute power really can be shifted from resolution to other things, at least at the most basic conceptual level (dropping res = more performance or better effects, etc.):

http://www.eurogamer.net/articles/digitalfoundry-2016-are-4k-visuals-really-the-best-use-for-playstation-neo-and-project-scorpio

Instead of 4K..

..Alternatively, in-game worlds could become deeper, with a much higher degree of simulation - more NPCs, better physics, real-time global illumination - you name it. More time can be invested by developers in GPGPU, the process of utilising graphics hardware for tasks more traditionally suited to the CPU.

-Leadbetter

http://wccftech.com/doom-lead-4k-xbox-scorpio-waste/

Using the 6TFLOPS of computing power within the Xbox Scorpio for 4k gaming is a waste of resources says DOOM’s lead renderer programmer. The available power should better be used for higher fidelity 1080p gaming.

-this one seems implied though.

I'd also assume lowered res + higher fidelity resulted in the early touted graphics of 1886 and Ryse.

Sadly, I watched some 3D environment building in YT and it seems too daunting atm. Maybe in a couple of years xD

I'll try to get back to P5 and Andromeda 1st. :P

@j2zon2591: Start a game. Pause it. If you're displaying in native 1080, that picture takes up 8mb, including all the texture data...for that screen.

So how do you fit a 100MB texture onto the screen? A little at a time per frame.
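For reference, the size of one finished 1080p image can be worked out directly. The sketch below assumes a single colour buffer at 4 bytes per pixel (32-bit RGBA); that format and the helper name `buffer_bytes` are assumptions for illustration. Real renderers usually keep several render targets (depth, intermediate passes), so this is only the size of the final picture, not of everything in memory.

```python
def buffer_bytes(width, height, bytes_per_pixel=4):
    """Size in bytes of one uncompressed colour buffer (assumed 32-bit RGBA)."""
    return width * height * bytes_per_pixel


size = buffer_bytes(1920, 1080)
print(size)                    # 8294400 bytes
print(round(size / 2**20, 2))  # 7.91 MiB
```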

I don't think you understand how rendering works at all. They can easily have 4+ GB of visual data in memory that gets processed once every 16 milliseconds for 60 fps. That creates a final image made of multiple layers, which get post-processed into a frame buffer. You don't do a little bit of texture per frame; you take tons of different textures and apply them to all the surfaces in the game. What you end up with is an image with tons of textures that are scaled to fit on the picture, and the higher quality the input, the more memory bandwidth you need.

I'm not entirely sure what point you're making with the frame buffer size. It is of little consequence when considering how that image actually gets made and how much memory is actually needed. For instance, you can use higher quality assets and apply them to a 720p image; you still need the memory capacity/bandwidth to store/process those textures.
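The 16 ms figure and the bandwidth point can be put into rough numbers. This is a back-of-the-envelope sketch only: it takes the scenario above literally (all 4 GB read once per frame at 60 fps), which is an upper bound and an assumption, since in practice only part of the resident data is typically touched each frame. The helper names are made up for this example.

```python
def frame_time_ms(fps):
    """Time budget for one frame, in milliseconds."""
    return 1000.0 / fps


def bandwidth_gb_per_s(gb_read_per_frame, fps):
    """Memory bandwidth needed if that much data is read every frame."""
    return gb_read_per_frame * fps


print(round(frame_time_ms(60), 2))  # 16.67 ms per frame
print(bandwidth_gb_per_s(4, 60))    # 240 GB/s if all 4 GB were read each frame
```

Neither number depends on the output resolution, which is the point being made: texture capacity and bandwidth needs are driven by the assets, not by the size of the final frame buffer.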

First, I am right and I don't give a shit what you write, but you've basically just re-written what I did, for the most part.

Data (image) is processed and stacked into the framebuffer(s), then output to the display a little at a time per frame.

The file size and memory occupied by the texture in vram is irrelevant, as long as it fits.

So how do you get 100mb or even 4gb of data to your screen? A little at a time per frame.

Don't see with what you have a problem, and don't particularly care.

First..... you are wrong.

And a 1080p buffer is 8mb? Lmao.........

The frame buffer (geometry edges align to the frame buffer resolution) can be set to 4K resolution while other shader passes run at a different resolution or at a cheaper cost, e.g. cheaper SSAO vs higher-cost HBAO+ (NVIDIA) or HDAO/AOFX (AMD).

The problem with PC's "Ultra settings" is the diminishing visual quality gain vs exponential GPU resource BS usage, i.e. Gameworks "higher fidelity" BS.

Maxing graphics settings with average-looking art assets would still give average-looking graphics, e.g. compare id Software's Doom 2016 vs EA DICE's SW Battlefront.

I prefer SW Battlefront over Doom 2016.

The following screenshot is Scorpio's Forza 6 techdemo at 4K resolution with XBO graphics settings and PC 4K art assets.

Compare Forza 6's artwork against Doom 2016's artwork. I prefer Forza 6's artwork over Doom 2016's.

If I include Forza Horizon 3's artwork.... Doom 2016's artwork is another class lower than Forza Horizon 3.

Hmmmm.

Personally I'd sacrifice graphical fidelity and resolution for stable performance. I'd rather have higher FPS first. :)

OP: of course the visuals in movies are vastly superior to games, even if you see the CG in 720p vs a game at native 4K / 2160p.

I too wonder what games could look like if resolution was limited to 720p and the visual complexity (re: geometry) and lighting was pushed way, way up.

Especially on the most powerful hardware consumers can get today (a very high-end PC build).

Or at the very least, Scorpio, which will be equivalent to a mid-range gaming PC by the time it launches.

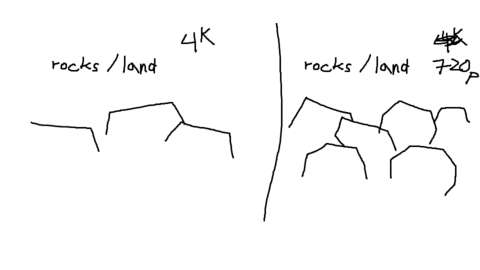

Geometry edges ultimately align to the frame buffer's pixel grid. There's very little point in sub-pixel geometry or geometry overdraw.

To expose additional geometry detail, the resolution has to be higher, i.e. more pixels to show more geometry detail.

1280x720 has 921,600 pixels, and that is as much as you can show even if you fill the entire screen with a geometry cloud.
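The pixel counts are easy to check, and they also give a rough feel for how the per-pixel workload changes with resolution. A small sketch, assuming the common 16:9 resolutions below (the table and the `pixels` helper are just for illustration); it says nothing about costs that do not scale with pixel count, such as vertex processing or CPU work.

```python
RESOLUTIONS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "4K": (3840, 2160),
}


def pixels(width, height):
    """Number of pixels in a frame buffer of the given size."""
    return width * height


base = pixels(*RESOLUTIONS["720p"])
for name, (w, h) in RESOLUTIONS.items():
    count = pixels(w, h)
    print(name, count, count / base)
# 720p 921600 1.0
# 1080p 2073600 2.25
# 4K 8294400 9.0
```

So a 4K frame has nine times as many pixels to shade as a 720p frame, which is the budget this thread is arguing about how to spend.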

Movie CG's advantage comes down to ray-traced lights/reflections and very nice artwork assets.

Okay I see. In your opinion, can real-time game graphics make some decent strides toward approximating things like this in the next 3-4 years?

I mean, given the known technology roadmaps for GPU architectures from Nvidia & AMD, and also stacked DRAM like HBM3 and HMC (Hybrid Memory Cube) plus another chip manufacturing shrink to 7nm, etc.

Running on GeForce Titan X Maxwell (Year 2015)

A game's appearance is based on the skill of the artists and the game engine's programmers. Hardware power helps with creative freedom.

technology needs to move forward not backwards

720p is pathetic for large TV

4k should be the standard

Yeah. Doom does look cartoony and seems to have heavier colorization. I prefer the more neutral, realistic colors of the racer and BFront though.

Yep, I mentioned it earlier too: high to ultra settings have diminishing returns, but I think this is more due to the fact that the focus was on medium-high settings and console hardware, and that's probably as realistic as it gets for budget reasons. Developers probably aren't gonna make much better lighting and different, redesigned assets built for 900-1080p of the same game. Rather, they add relatively small eye candy that is computationally expensive but cheap in manpower, like going 4K and/or increasing shadow resolution, AA, AF, AO.

Anyway, later down the line, I need to learn more about lighting too. I know raytracing is too expensive, but I think we're really gonna get better results with just very good approximations that are way cheaper.

@27:57 of this UCA4 Epilogue

https://youtu.be/1I6EY-0dJZ4

Lighting looks fantastic to me. I really love the neutral, noon-ish lighting of the house. Please check the tour and don't mind much the human model and animal.

Just a little bit more work on the assets, shadows, lighting and hopefully some more interactivity might do it for me (Remote, blanket, dog fur).

I hope that next gen would look much closer to something like that.

Yeah definitely.

I think the only way to see this is for us to actually do a deep investigation and ask top-class artists and engineers what they can come up with under just a 720p/30fps limit for pixels, but with massive compute left over for much better shading, lighting, object count, object quality, physics, etc.

Sounds ridiculous, but perhaps the lower resolution plus some AA might even help a bit, like squinting at a 3D graphics asset so it looks realistic at first glance because the focus and sharpness aren't enough to see that it's fake. Obviously there'd have to be a bit more tweaking, because I bet you could fool a lot of people with bf1 graphics at youtube 240p quality if you designed the camera, movement and animation to be more realistic and less "gamey".. and that's not really what I wanted to see.

Regarding Scorpio, I hope so. I would love to snag a 2017 GPU for $249 that performs much better than a Scorpio.

ATM, I am excited to pick either a New TV/Monitor, Scorpio or new GPU for 2017. I can probably only have one by year's end though.

I can't imagine there would be much to gain from lowering the resolution to increase the graphical fidelity. All the extra details in there would be pointless since the presentation would be so blurry. Unlocking the framerate would be more useful with that extra processing power.

Even at 720p we can still see sharp edges due to some low polygon counts, so that can be an obvious boost IMO. I just saw some 4k/8k vid from Digital Foundry and the landscapes are full of those low-poly slabs. Better focus on physics, lighting and shaders might be more obvious too, even at lower resolution, but games would probably have to be designed differently, unlike the current focus on mid-high image quality + high resolution for current gen consoles.

That's a PS3/360 game though, so look at Horizon. Extra GPU power put towards much better physics could look more obvious even at 720p, like if foliage had better physics/interaction and/or some better terrain deformation during combat and/or water physics and quality. Granted, these may not be as easily seen in screenshots but would be during actual gameplay. All that should still be pretty obvious even at 720p.

There'd likely be fewer LOD/pop-in issues as well (assets changing into more or higher quality ones when the camera gets nearer), not just because of the lower screen res but because there'd be more compute left to render farther.

Don't get me wrong though. I think HZD has the best vanilla foliage quality currently in terms of overall looks, retail available.. at least glancing at the gaf screenshot thread: http://www.neogaf.com/forum/showthread.php?t=1348006&page=44

EDIT:

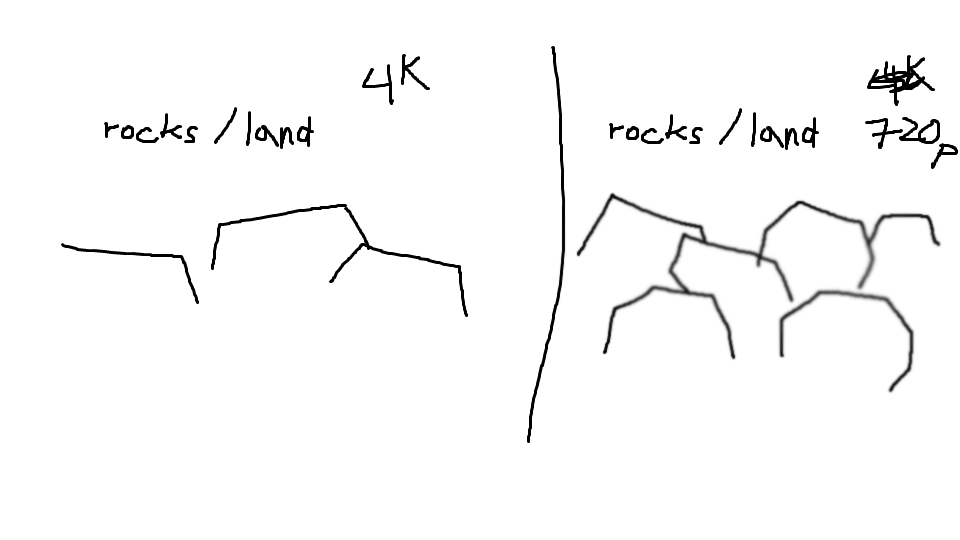

Just blurred out the rocks lol. Here:
