I was watching comparison videos with ray tracing on vs. off and didn’t notice a game-changing difference. I feel like it’s an unnecessary waste of power. Not sure if I’m the only one who feels this way. What about you, SW? Do you think next-gen consoles should use their power for something other than ray tracing?

Why should next-gen consoles bother with ray tracing?

@R4gn4r0k: Yeah, I understand your point. I just don’t see it being a game-changing aspect compared to this gen. As an example, Gears 4 has incredible lighting; will ray tracing really show that much of an improvement compared to a game like that?

Lighting is important, and good lighting sells a game far better than bad lighting. But I have never seen a good implementation of ray tracing; the results are usually as hilariously bad as the old angular faces used to be in video games, with the added detriment of lowering overall lighting quality and introducing new graphical glitches.

Realistic might be good, but badly-implemented realistic is worse.

What I want most out of next gen, graphically, is for characters' faces to stop looking like plastic. Ray tracing is cool and all, but I'm sick of the uncanny-valley faces.

I'm in favor of ray tracing, but I'm also not limited by console hardware.

Devs will be using it because it makes it easier to create realistic lighting. It's a good idea for consoles to have support for it. Not only because devs will be using it, but because if consoles support it there will be more incentive for devs to use it.

Plus it looks great and I'm sure as more people use it, they will figure out ways to get the performance where it should be.

At some point ray tracing will be as important as textures. Realistic lighting really means everything. Is it the most important thing ever, and should next-gen consoles have it?

Well, personally, and when given a choice, I'd much rather have stable high resolutions and stable high framerates.

This, right here, is why there are so many guides on how to turn off and permanently disable things like godrays.

@warmblur: I feel that is more of an engine problem.

Unreal Engine is the worst for realism. Everything looks like plastic, like you described. It was very obvious which games used Unreal over the last couple of gens because of the particular look those games had.

The environments in Unreal games aren’t too bad, but the faces and character bodies could use an overhaul.

One of the big complaints about RTX is the performance hit it has on games. On consoles, where many games aim for 30 FPS, I don't think that's going to be as big an issue.

RTX, like different shader types, tessellation, baked shadows, etc., is just a tool at the end of the day. For some games it makes sense to use it; for others it may not. Some developers may prefer RTX lighting in a realistic game, whereas it may not make much sense in something that looks like Wind Waker.

So it would be good for consoles to have that tool.

The big question is AMD's approach to implementing it. Will it be like Nvidia, with specialised RT cores to deal with it? Will they modify their shader cores so that they can handle ray tracing work and also be effective at rasterisation (ideal on paper, but unlikely)? Or have AMD, Sony and MS developed a software-based cheat that gets most of the benefit of ray tracing without needing specialised cores (the CryEngine demo suggests this is possible)? Not "true" ray tracing, if you will, but good enough (most of games development is about cheating, smoke and mirrors).

I like the freedom of PC options menus (ok, sometimes the port is really bad and it doesn't have those). I turn off:

-Motion blur

-Depth of field

-Chromatic aberration

-Lens flares

-Bloom

-FXAA or any other type of AA that causes blur

Some of that stuff can look good in promotional material like screenshots or trailers, but it does not look good at all when playing a game.

@warmblur: I feel that is more of an engine problem.

Unreal Engine is the worst for realism. Everything looks like plastic, like you described. It was very obvious which games used Unreal over the last couple of gens because of the particular look those games had.

The environments in Unreal games aren’t too bad, but the faces and character bodies could use an overhaul.

They look pretty good to me....

@R4gn4r0k: Yeah, definitely the first four. Bloom and flares can be OK if not overdone, which sadly they usually are, so you can't see shit.

To reduce the pre-bake rendering workload that currently has to be done by people.

MS already confirmed hardware-accelerated ray tracing for Scarlett.

AMD already confirmed hardware-accelerated ray tracing for next year's RDNA 2 GPU family.

RDNA 2 is to be made on TSMC's second-generation 7nm+ process, with 20 percent improved transistor density and 15 percent lower power consumption.

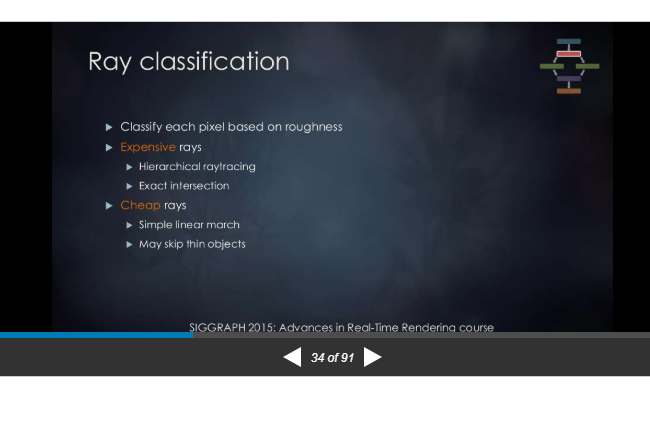

Cheap rays vs. expensive rays, according to EA DICE.

NVIDIA's RTX hardware and Microsoft's DXR API cover hierarchical ray tracing and exact intersection.

The Unreal Engine 4 main branch is usually unfriendly to AMD GCN hardware. I'm waiting for RX 5700 results with UE4.

It's more a benefit to developers than a matter of providing groundbreaking visuals for the end user.

Ray tracing can still be bad for consoles: to compensate for its performance hit, games will have to sacrifice resolution and even other areas of graphics. In fact, 4K gaming may not even be the norm on next-gen consoles if ray tracing is prioritized, since resolution has to be sacrificed for a game to even run at 30fps, unless it is a minimal implementation of ray tracing and the game's overall graphics are undemanding.
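To put rough numbers on the resolution trade-off, here is a minimal back-of-the-envelope sketch. It assumes one primary ray per pixel per frame and ignores bounces, denoising and hybrid rasterisation, none of which the thread specifies; `RESOLUTIONS` and `rays_per_second` are hypothetical names used only for illustration.

```python
# Assumption: every pixel casts exactly one primary ray per frame.
# Real games mix rasterisation and ray tracing, so actual counts differ.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def rays_per_second(width: int, height: int, rays_per_pixel: int, fps: int) -> int:
    """Rays needed per second for a given resolution, ray count and frame rate."""
    return width * height * rays_per_pixel * fps


for name, (w, h) in RESOLUTIONS.items():
    total = rays_per_second(w, h, rays_per_pixel=1, fps=30)
    print(f"{name}: {total / 1e6:.1f} million primary rays per second")
```

Under these assumptions 4K needs exactly four times as many rays as 1080p at the same frame rate, which is why resolution is the obvious thing to cut.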

Crytek's demo actually argues against software-based cheats, as it is really just brute forcing and, as you say, smoke and mirrors. Crytek themselves said that their Neon Noir demo is inferior to the RTX titles, and that it can run not only significantly better on RTX cards but with more features and at higher resolutions. Hybrid RT/shader cores sound good, but they'd have to compensate by greatly increasing the number of them, since ray tracing is very demanding on shader cores when they have to do it on top of their other tasks. So even then, those hybrid cards would likely not perform nearly as well as RTX cards, which have hardware completely dedicated to ray tracing so the rest of the GPU isn't burdened with the task. It's just like how people argued that direct compute can make up for the garbage CPUs in current consoles as if it were no big deal, not realizing that when the GPU has to pick up the CPU's slack, there is less graphical power left for increasing graphical fidelity.

You can only get so far with smoke and mirrors, especially with how high-end graphics are becoming. At some point it will not be worth investing so much time and money in prebaked, more static methods that try to look like their real-time counterparts, which are accurate, dynamic and easier to implement.

Because dynamic photorealism isn't possible without it, and because it simplifies developer workflows, therefore making games easier to make.

@R4gn4r0k: Yeah, definitely the first four. Bloom and flares can be OK if not overdone, which sadly they usually are, so you can't see shit.

Yeah I sometimes leave bloom on, but most of these effects cause insane blurring (depth of field, motion blur, FXAA). Why would you render a sharp game, only to blur the image in post really badly?

What?... You don't notice a difference worth the performance hit?... I hope you didn't look at Tomb Raider or BFV; Metro Exodus is an example of why ray tracing is the future.

Lighting is one of the most important aspects of graphics, ray tracing is that generational leap forward, and this is just one aspect of ray tracing.

Ray tracing is more than a generational leap forward in lighting, it's the final frontier... And if you can't see a difference between those two images that is worth the performance hit, then I don't know what to tell you. Also, clowns like you are always around; I have seen them for the last 4 generations saying "graphics" are good enough. With that mentality we would be stuck with PS1 graphics.

@Grey_Eyed_Elf: I believe ray tracing is getting most of its hate because of the cost of RTX cards. With it being expensive and RT cores currently only in Nvidia cards, people see it as some sort of gimmick, similar to other Nvidia GameWorks effects. People do want it but don't want to pay the price of RTX cards. You could see how hyped people were when that Crytek ray tracing demo came out, only to go back to pretending not to care once it was pointed out that it did not run at 4K 30fps on the Vega 56 but at 1080p 30fps, with visuals inferior to the RTX titles and other tricks that made it look like ray tracing while still being inaccurate and using low ray counts. Not to mention the demo would run and look a lot better on RTX cards thanks to their dedicated hardware.

Tessellation got similar treatment in its early stages, though not as bad, since the tech was on both AMD and Nvidia cards and the performance hit wasn't as huge as ray tracing's. People were calling tessellation a gimmick, and here we are years later with tessellation a standard feature in games and GPU hardware.

@NoodleFighter:

People are a joke.

I also find it hilarious that Nvidia's RTX cards got a lot of flak for being overpriced, but AMD came out with Navi, with a smaller die size, higher TDP and no dedicated ray tracing, for basically the same price.

People want high-end graphics but don't want to pay for them, and they want them to run on low-end hardware. It's a joke... And GPU prices have been shit for a while, and AMD was part of that problem when they launched the HD 5870 at a higher price than any flagship card around at the time, and then did the same thing with the HD 7970.

AMD fanboys need to understand that, aside from the CPU department now and the Phenom era, AMD has been just as bad as Nvidia, if not worse, when it comes to GPUs.

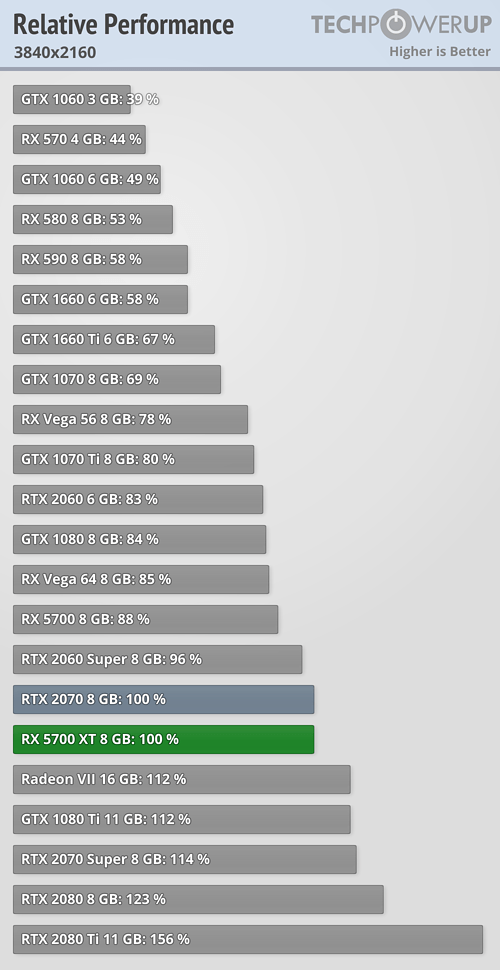

@Grey_Eyed_Elf: AMD GPUs can hardly be recommended as a go-to for budget builds anymore, as they're no longer weaker GPUs at a lower price but weaker GPUs at the same price with a higher TDP. If Nvidia is rivaling and beating AMD in non-ray-tracing performance in the same price ranges, what makes people think they'll sell ray tracing cards at normal card prices? Especially when some of these RTX cards are in the same price and performance range as their AMD counterparts, and the gap gets even bigger once you start using ray tracing.

Pascal went without any refreshes during its run because it was that much better than what AMD had to offer. I'm glad Intel is getting into the discrete graphics card market, because AMD are just losing it and don't seem to be getting better anytime soon, and the extra competition is good for pricing even if Intel's cards don't beat Nvidia's.

Interesting that people say it's mostly about simplifying development. I wasn't really aware of that, but it makes sense. As I understand it, they move the calculation out to dedicated units made just for RT? So it shouldn't affect performance as much?

The biggest wake-up call for Navi is that AMD's prices are ONLY for their blower cards... You will be looking at $450-500 for an XT when the AIB cards come out.

https://www.techpowerup.com/review/amd-radeon-rx-5700-xt/

https://www.techpowerup.com/review/amd-radeon-rx-5700-xt/29.html

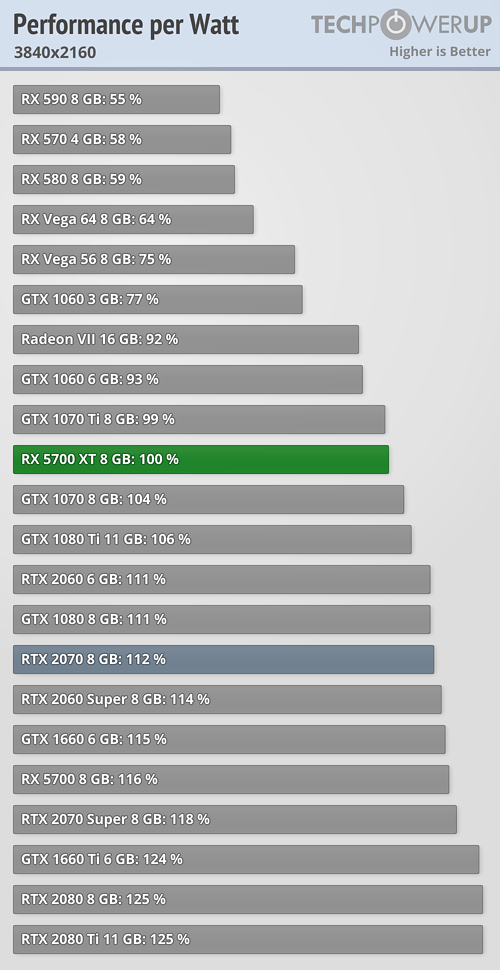

"RX 5700" performance per watt rivals Turing!.

https://www.techpowerup.com/review/amd-radeon-rx-5700/35.html

- Very energy efficient, matches NVIDIA Turing architecture - techpowerup.

RX-5700 XT's performance per watt declines due to AMD's factory overclock but it's still within Pascal's performance per watt range.

RDNA v1 is a good foundation for the future RDNA 2 GPUs. Bring on RX 5800 XT and RX 5900 XT...

PS: NAVI ~= RDNA v1

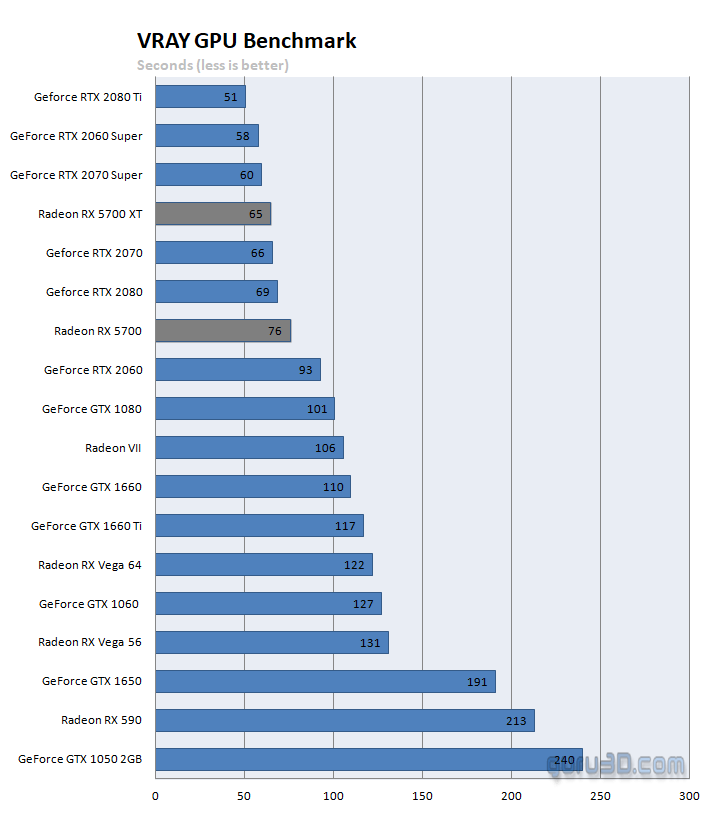

https://www.guru3d.com/articles_pages/amd_radeon_rx_5700_and_5700_xt_review,27.html

RX 5700 XT murdered RX Vega II on non-DXR raytracing! LOL...

NAVI is a good foundation to attach hardware-accelerated ray tracing to, due to its Turing CUDA-like behavior. GCN is dead.

RX Vega II is useless for AMD's workstation raytracing solution when RX-5700 XT just murdered it. AMD must quickly release RX 5800/RX 5900 to replace RX Vega II.

@Grey_Eyed_Elf: Don’t get me wrong, I see the difference, but is it worth using all that power for? Ray tracing hits PC quite a bit in terms of performance. Next-gen consoles will most likely be a little weaker than modern PCs. Should a console really use a considerable amount of its power to implement a feature that will decrease its performance? That was my question. Not trying to downplay the importance of ray tracing, just not sure if it’s the best way to use the hardware.

There is no best way to use the hardware; it's just preference, which is why developers now offer modes on consoles. I would assume the same would apply to ray tracing: a ray tracing mode, and then a performance mode for 60.

It's why people game on PC: you have more options and the ability to choose your own way to play any game, and if you have the money you don't really need to sacrifice much, if anything at all.

Remember, consoles are not targeting 60FPS unless they have to for the genre, and they rarely run games at settings that match PC on High, let alone Ultra; even the X1X struggles. So aside from offering modes, the ray tracing implementation will not be close to the PC versions, and the framerate target is half.

Consoles and PC have different standards, as many console gamers don't care about frame rate as long as it's consistent and the game doesn't require it. Take that into account along with lower settings, and ray tracing won't be any different from any other demanding setting that consoles half-ass today.

It's not a necessity, but we do need to push the tech forward. Fully ray-traced scenes are leaps-and-bounds over current-gen, but we're severely limited by hardware. I have an RTX 2080, and I won't bother on PC until a few more generational leaps over my GPU. Personally, I prefer trying to maximize fps followed by resolution ATM.

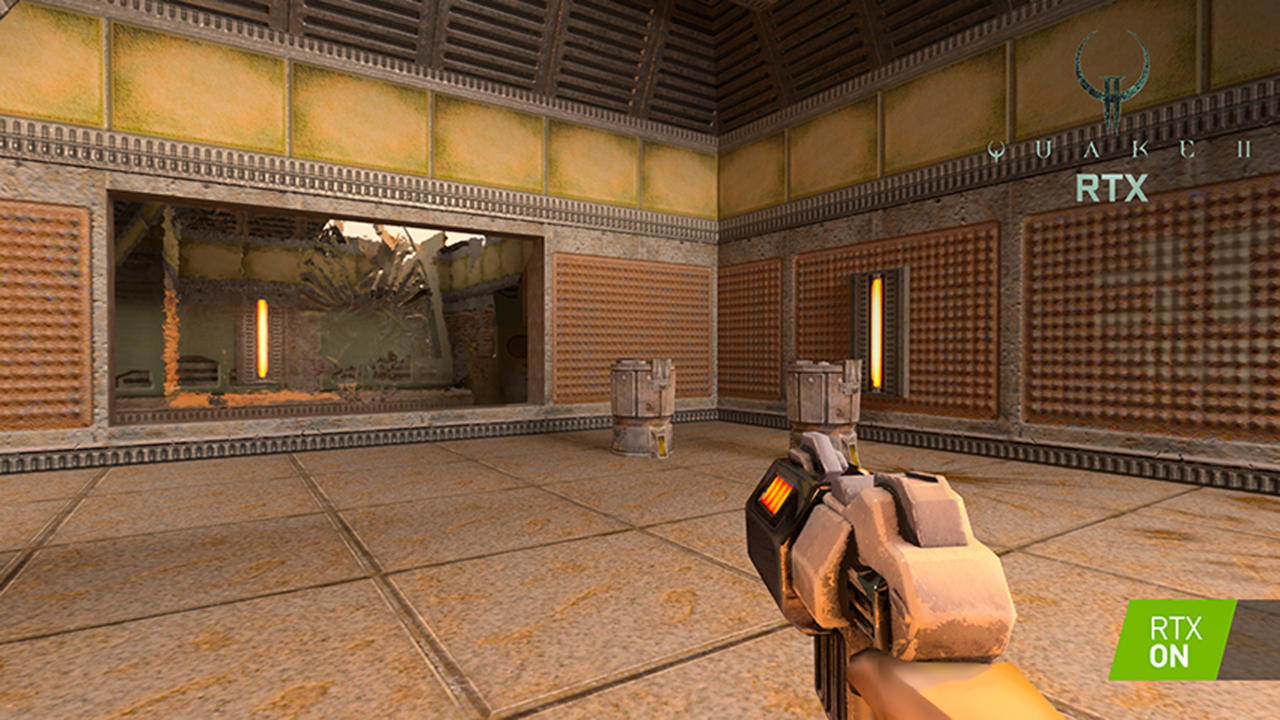

I'm questioning the logic of making the efforts for ray tracing, considering what graphics level Nvidia themselves considered an acceptable demonstration. Maybe those rays can work on high-end machines, but I'm not sold on the concept when they had to be applied to a game from the 1990s.

If some miracle happens and RTX ends up being a functional thing on console I would appreciate the option between 60fps without it and 30 with. Some games I'm perfectly fine with a 30fps target, others not so much.
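The 30 vs. 60 fps choice comes down to frame-time budget. A minimal sketch of the arithmetic, assuming a fixed per-frame ray tracing cost; the 8 ms figure is purely hypothetical and not a measurement from any game or GPU, and the function names are made up for illustration.

```python
def frame_budget_ms(target_fps: float) -> float:
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / target_fps


def budget_left_ms(target_fps: float, ray_tracing_cost_ms: float) -> float:
    """Frame time left for everything else once ray tracing has taken its share."""
    return frame_budget_ms(target_fps) - ray_tracing_cost_ms


# Hypothetical per-frame ray tracing cost, chosen only to illustrate the trade-off.
ASSUMED_RT_COST_MS = 8.0

for fps in (30, 60):
    print(
        f"{fps} fps: {frame_budget_ms(fps):.1f} ms per frame, "
        f"{budget_left_ms(fps, ASSUMED_RT_COST_MS):.1f} ms left after ray tracing"
    )
```

A 30 fps target gives about 33.3 ms per frame and a 60 fps target about 16.7 ms, so the same ray tracing cost eats a much larger share of the 60 fps budget.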

Of course it's a waste of power. They won't have good ray tracing on consoles without making the consoles more expensive. But it's a great buzzword to sell your console with. Like 4K. The consoles suck at playing games in 4K, but that didn't stop MS and Sony from using it as a selling point.

They need a gimmick to cling to, even if it's not a gimmick. What I mean is that games in general have reached the mass majority (it's now a casual medium), and because of this the console manufacturers are unsure whether or not people will see and/or want the next-gen consoles. So there must be some "wow" factor in the form of a before and after.

"Cheap rays" can still use intersect triangle test hardware.

The expensive operation with "expensive rays" is the hierarchical tree search, which has a large memory bandwidth component.

Geometry data is not a delta color compression (DCC) workload.
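For readers unfamiliar with the two operations mentioned above, here is a rough sketch in plain Python. It assumes the exact intersection is a Möller-Trumbore ray-triangle test and that the hierarchy is built from axis-aligned bounding boxes checked with a slab test; these are common textbook techniques, not necessarily what NVIDIA's or AMD's hardware does internally, and all function names are illustrative.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_triangle(origin: Vec3, direction: Vec3,
                 v0: Vec3, v1: Vec3, v2: Vec3,
                 eps: float = 1e-9) -> Optional[float]:
    """Exact test (Möller-Trumbore): distance along the ray to the hit, or None."""
    e1, e2 = sub(v1, v0), sub(v2, v0)
    p = cross(direction, e2)
    det = dot(e1, p)
    if abs(det) < eps:          # ray is parallel to the triangle's plane
        return None
    inv = 1.0 / det
    s = sub(origin, v0)
    u = dot(s, p) * inv         # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, e1)
    v = dot(direction, q) * inv  # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(e2, q) * inv
    return t if t > eps else None


def ray_box(origin: Vec3, direction: Vec3, box_min: Vec3, box_max: Vec3) -> bool:
    """Slab test: does the ray touch the box? Used to skip whole tree branches."""
    t_near, t_far = 0.0, float("inf")
    for o, d, lo, hi in zip(origin, direction, box_min, box_max):
        if d == 0.0:
            if o < lo or o > hi:  # parallel to this slab and outside it
                return False
            continue
        t0, t1 = (lo - o) / d, (hi - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        t_near, t_far = max(t_near, t0), min(t_far, t1)
        if t_near > t_far:
            return False
    return True
```

In a tree search, `ray_box` would run at every node visited and `ray_triangle` only at the leaves, so a ray that wanders through a large scene touches many nodes scattered in memory; that is one way to read the point about memory bandwidth.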

Graphics are already more than good so it is just a gimmick. Is ray tracing gonna make a game better? No. Just give me good games.

I'm sorry but have you not seen the reception PS4 games get for being pretty or AAA games in general?...

All graphics are a gimmick by that loose definition you label it with; graphics will keep on improving whether you neanderthals like it or not.
