Maxing out a game means the highest settings you can enable in-game, irrespective of resolution. There's really no grounds for debate here. You're wrong on all counts.

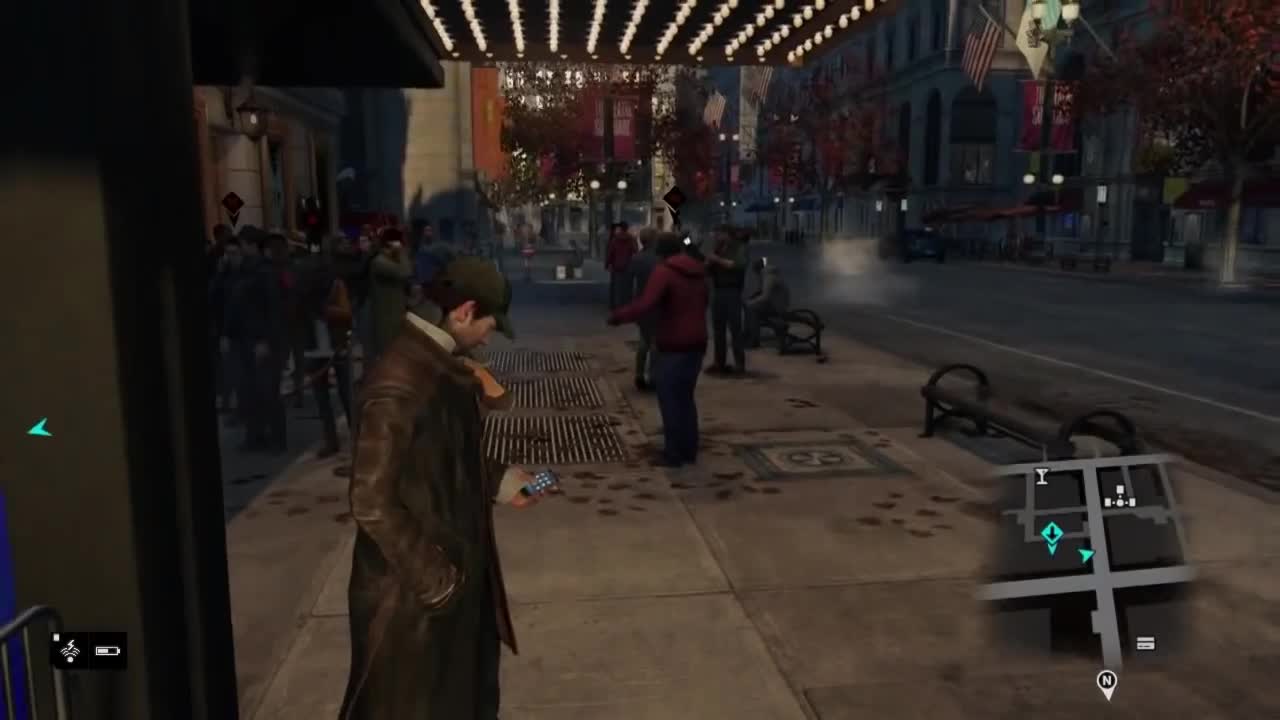

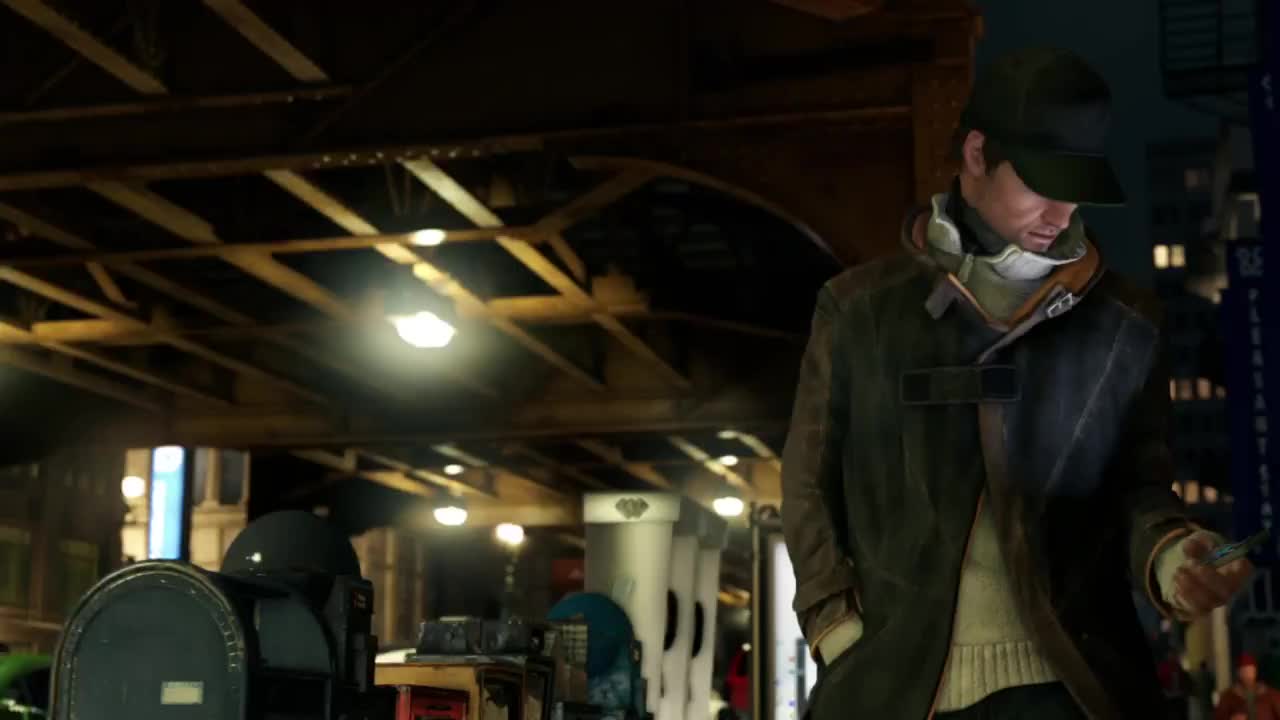

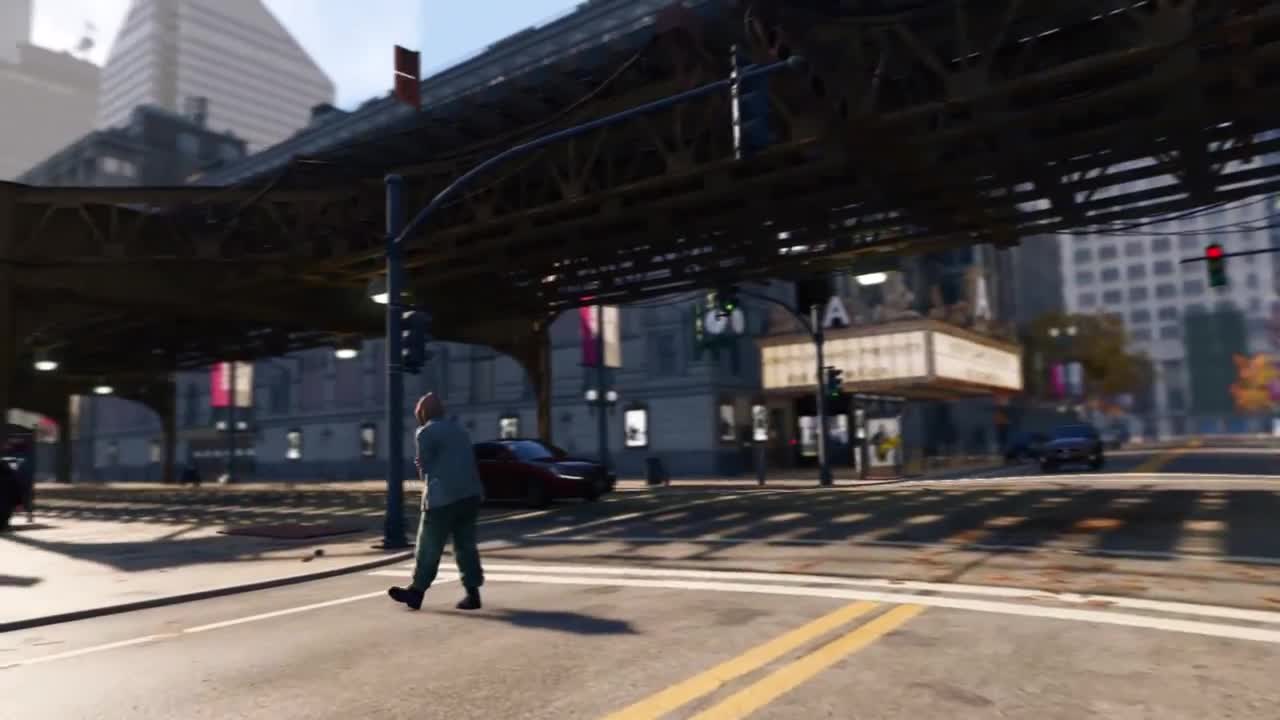

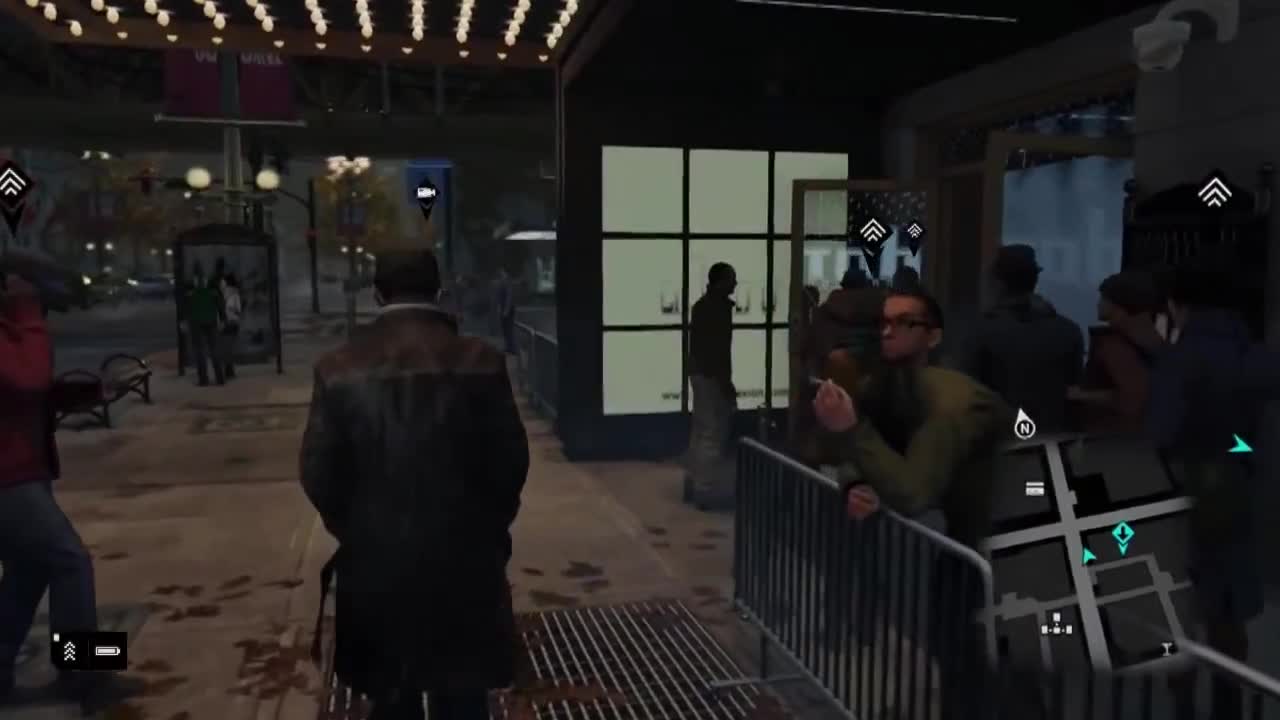

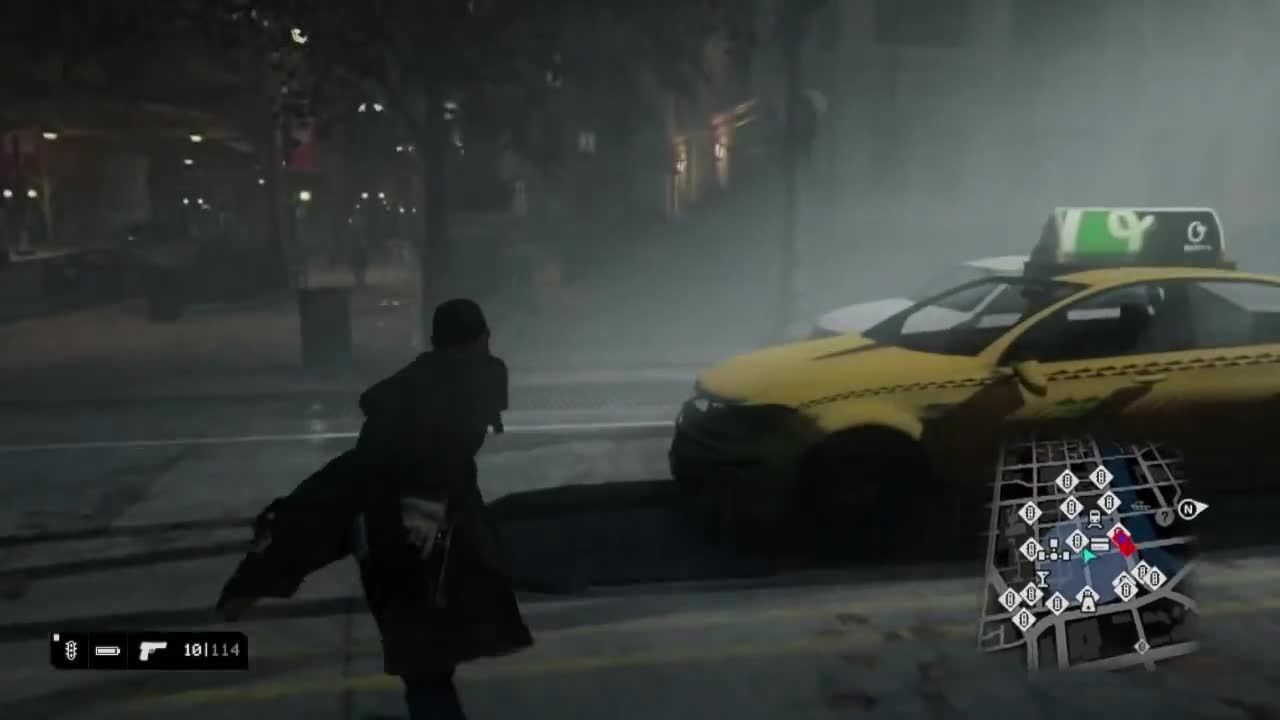

Watchdogs PC version trailer looks


hopefully it's not just a bunch of horribly optimized crap that i'll just turn off

AC4 was riddled with that. I tried to run the game using the recommended setting for 780Ti and it still ran like ass.

What? I ran AC4 on an HD 7950 and maxed it out at vsynced 30fps. It might not be the most well optimized game ever (especially on processor side), but it looks better than the PS4 version and runs at a solid 30fps on a two year old card. Don't blow it out of proportion. It runs even better on my GTX 770. Hardly can be described as running "like ass".

Yes, I would love to max it out and run it at a solid 60fps, but it wasn't nearly as bad as some people make it sound. I hope Watch Dogs will have better utilization of multiple cores. It does seem to have more advanced simulations though so it might still be a little difficult to achieve a solid 60fps or higher with single graphics card setups.

I have the most powerful single GPU on the planet and it still can't run that game. The opening scene was horrific with the FPS bouncing all over the place.

Like I said, I've been able to get a solid 30fps with an HD 7950.

If people are talking like "oh I'll just get the PS4 version" then what's wrong with playing a superior-looking version on PC at a solid 30fps? I mean, obviously 60fps is much better than 30fps, but a solid 30fps on PC with better graphics and cleaner image quality is still better than a somewhat inferior looking version on console with worse image quality and more framerate drops.

I think the problem is that a lot of PC gamers don't know how to get a steady vsynced 30fps. You can't simply use a typical framerate limiter because the frame delivery will be out of sync with the refresh rate of the display and you will still get some judder (although a lot less than if you let it fluctuate up and down). In order to get a steady 30fps without judder you need to use "double vsync" if you are on an AMD card or "half refresh rate vsync" if you are on Nvidia. These features can be found in RadeonPro and Nvidia Inspector respectively.
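To put rough numbers on the judder point, here's a toy sketch in Python. It's my own simplified model, not anything taken from RadeonPro or Nvidia Inspector: it assumes a 60 Hz display, vsync on, frames that become ready at a perfectly constant interval, and an arbitrary tick unit where one refresh is 1000 ticks. The function name `hold_pattern` and the 1990-tick "slightly off" limiter are made up for illustration.

```python
from collections import Counter

REFRESH_TICKS = 1000  # one 60 Hz refresh in arbitrary integer ticks (assumed unit)


def hold_pattern(frame_interval_ticks, n_frames):
    """Return how many refreshes each frame stays on screen.

    Assumption: frame i is ready at i * frame_interval_ticks and is shown at
    the first refresh at or after that moment (idealized vsync).
    """
    # Integer ceiling division gives the index of the refresh showing frame i.
    shown_at = [
        -(-(i * frame_interval_ticks) // REFRESH_TICKS)
        for i in range(n_frames + 1)
    ]
    return [shown_at[i + 1] - shown_at[i] for i in range(n_frames)]


if __name__ == "__main__":
    # Locked to exactly half the refresh rate: every frame held for 2 refreshes.
    print("half refresh vsync:", Counter(hold_pattern(2000, 300)))
    # Limiter running about 0.5% fast relative to the display clock: most
    # frames are held for 2 refreshes, but now and then one only gets 1.
    print("free-running limiter:", Counter(hold_pattern(1990, 300)))
```

In this model the locked case holds every frame for exactly two refreshes, while the slightly-off limiter occasionally shows a frame for only one refresh. That odd frame out is the hitch you notice as judder, even though the average framerate is still about 30fps.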

I don't know if that applies to you, but if not I can see why you would be frustrated not being able to achieve a solid 60fps with a high end card. I just don't think it is the end of the world. It still looks amazing running at a solid 30fps and offers better overall visuals than the console versions.

Is it really that much better looking on PC? Does it really affect your experience that much? It wins in the sense of having better resolution and AA maybe. But I'd say at this point it's a matter of which you prefer. Btw, did you set half refresh rate vsync in Nvidia control panel? Because I actually like 30fps in games like this. Gives a more cinematic feel.

*facepalm*

That's loosingends level of reasoning right there.

loosingends? is that you?

Looks as good as the E3 2013 PS4 demo.

It's still downgraded from the 2012 demo though.

It definitely looks better than the PS4 footage from 2013. Especially in terms of things like shadow quality and overall image quality.

And as far as the initial E3 2012 demo, I don't understand what some people think looks better about it. Aside from the cinematic BS at the beginning of the video, the actual gameplay looks no better than this PC footage.

Let's look at some screen captures from the 2012 demo and the recent PS4 footage:

(Not to mention that the PC footage looks better than the PS4 footage)

Those images from 2014 are taken from a PS4 dev kit, which means it was an enhanced version of the actual PS4 version. The real PS4 gameplay looks like this in 2014 (starts at 0:29). The video also contains dev kit footage.

And the PC version looks like this.

The difference is huge (even if you compare it with the PS4 dev kit footage, which has awful shadows; taking the best shots from the video doesn't help either).

The difference between PC and Console is already this big. It's been less than a year. This is what happens when consoles are launched with ancient hardware by tech standards. Imagine how different PC vs Console will look in two years?

Consolites will go from "the PS4 is king" to "graphics and mods don't matter" to "Steam sucks" lmfao

There are games today a 780 Ti can't max at 1080p, even overclocked to 1.2 GHz.

name them

Crysis 3, Metro, Tomb Raider

And the glorious super charged PC that the 900pStation is runs all of them at 3980x2160 @ 120 FPS in 4D.

@jhonMalcovich:

Thanks. I was actually a bit worried, because PC advances so quickly and my graphics card was released two years ago. How many more years do you think my PC will remain a high end machine? I fear that the clock is ticking, with DDR4 and GDDR6 around the corner.

You shouldn't look at the fast advancement as something negative. Your PC will be able to play games in a beautiful way. The faster it goes it will just mean that your next PC will be that much better than this already great PC. The developers are not going to cut support for your PC just because it's a couple years old no matter how fast it goes, because they like money. The worst thing that could happen is for it to slow down because Sony and MS can't sell decent configurations to their shareholders. I sincerely hope that people abandon the PS4 and X1 and that PC hardware skyrockets instead of slowing down.

I can see people are still damage controlling. I don't get it. I really want to meet one of these corporate slaves and see how they look. What is the point of defending a piece of plastic from a corporation that doesn't care about you? You can believe whatever you want. You can (try to) misguide people by saying that you'll need a $2,000+ PC to run the game at high settings, but it doesn't change the fact that Watch Dogs looks a lot better on PC than on consoles and you don't need more than $700-800 to build a high end PC that can last for years to come. Deal with it and get a life.

I believe this gen's consoles will face annihilation; it has already started to happen at the beginning of the gen. Kind of inevitable with the low-end hardware they pack in.

Now, those games you listed have documented performance problems and are also some of the most demanding PC games on the market.

Metro being a prime example of said performance issues, released around the time of the "lol housefires" GTX480 and even now a GTX780Ti has trouble running it with all the bells and whistles. That is not the problem of the card, that is a game related problem.

That's not going to change going forward though. My point was that people act like having a 780 Ti means instant max settings in all games at 4K.

"cant wait to play this in 4k on my 780"

If you were playing games at 4K, I'd assume you'd have the hardware to back that claim up considering the cost of a 4K panel.

That wasn't my quote. I have a single 780 Ti and would never purchase a 4K monitor until multi-GPU is completely redesigned.

FYI, it would help if you quoted the person you are responding to. Makes things easier.

Multiple GPUs are always going to have problems. It's gotten better but it's nowhere near where it should be.
