https://gamerant.com/ps5-specs-rumors/

also

Sorry Pedro :'(

The PS5 is rumored to be between 12 and 14 TF.

Don't be sorry if you just bought a $500 X or an $800 PC that will be less powerful than the PS5. Be better.

Kratos

Xbox launches the X1X, which pounds the PSfaux Pro for power: "Power is irrelevant."

An article speculates that both the next Xbox and the PS5 will be more powerful than Stadia...

"Witness the power of the PS5."

Welcome to moo-moo land.

Kratos

A new console in ~1.5 years will be better than the current one for the same or a similar price? Wow, much pwned.

You must feel called out. Too bad about your buyer's remorse purchase, bud.

People who were smart didn't waste their $500 on a half-step, mid-gen system like the X.

If you're in the PC camp, you should have just waited until the next-gen systems released before upgrading to current, expensive PC components.

If you've done either from last year until now, then yes, you are much pwned.

So a console that will be 3 years old when the rumored new console is released is less powerful?! More news at eleven...

Seriously, boxrekt/recloud or whoever you are currently, don't you ever get tired of making these embarrassing threads that always backfire horribly on you? If I didn't know better, I would think you were a lemming/hermit alt who just makes these threads to take the piss out of cows and make them look silly....

The NAVI GPU has wider delta memory compression coverage across memory operations, plus additional GCN compute features such as "wavebreak" with different grid sizes.

The compute differences are enough to distinguish NAVI (gfx10) compute behavior from Vega (gfx9).

"Wavebreak" with different grid sizes relates to GCN's wavefront compute instruction payload. NAVI may include features like variable rate shading, which relates to grid size. NVIDIA's Turing GPUs already include variable rate shading and rapid packed math down to INT8 at quad rate.

GCN with 56 CUs at 1680 MHz has 12.0 TFLOPS.

GCN with 56 CUs at 1800 MHz has 12.9 TFLOPS.

That looks like roughly RTX 2070 to near-RTX 2080 level GPU performance.
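The 12.0 and 12.9 TFLOPS figures follow from the usual peak-throughput formula: CUs × 64 shader lanes per CU × 2 FLOPs per clock (one fused multiply-add) × clock speed. A minimal Python sketch, assuming the standard GCN layout of 64 lanes per CU; it gives peak paper numbers, not game performance:

```python
# Peak FP32 throughput of a GCN-style GPU.
# Assumption: each compute unit (CU) has 64 shader lanes, and each lane
# retires one fused multiply-add (counted as 2 FLOPs) per clock.
LANES_PER_CU = 64
FLOPS_PER_LANE_PER_CLOCK = 2  # one FMA = one multiply + one add


def gcn_fp32_tflops(compute_units: int, clock_mhz: float) -> float:
    """Return peak FP32 TFLOPS for a GCN GPU with the given CU count and clock."""
    flops_per_clock = compute_units * LANES_PER_CU * FLOPS_PER_LANE_PER_CLOCK
    return flops_per_clock * clock_mhz * 1e6 / 1e12


for clock in (1680, 1800):
    # Prints 12.0 for 1680 MHz and 12.9 for 1800 MHz.
    print(f"56 CUs @ {clock} MHz: {gcn_fp32_tflops(56, clock):.1f} TFLOPS")
```

The same formula gives about 1.84 TFLOPS for the base PS4's 18 CUs at 800 MHz.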

Yeah, I have major buyer's remorse about my 4-10 year old PC components.

Let me know what kind of games the PS5 will have, because specs aren't something I'm going to care about. I just want new IP exclusives!

@MonsieurX: I think a few here have said that sadly.

I can't recall anyone making that damn claim, and if they did, they were idiots.

That's because no one ever made that claim; who in their right mind thinks the Xbox One X will be more powerful than the PS5? This is next-gen we're talking about, so it should be PS5 vs. Xbox Scarlett. Why bring in the Xbox One X?

oh shiiiiit

Eat that, Google, choke on it! PS5 > STADIA THEN 10 STADIAS

The PS5 looks to have RTX 2080 performance in console form next gen, which means far greater actual game performance thanks to standardized optimization for console-specific hardware.

The power=graphics narrative for PC gamers is going to effectively die next gen.

For all the rants about how great PC is for being more powerful than consoles, the only thing PC gamers had to show for it this gen was screaming native 4K and 60fps (even though most PCs don't get that now in every game) in console multiplats.

The PS5 will be doing 4K60 as standard, plus it will have exclusives that blow every multiplat on PC out of the water, no matter how powerful the PC.

The only difference a more powerful Xbox could deliver is a few more frames per second. But if both are doing 60fps, will it make a difference, unless the next Xbox is doing 120fps at 4K (it won't)? No, it won't.

Next gen, PC gamers would need to spend $2K to get any kind of advantage over consoles, even in multiplats, just to say "look, I'm doing 8K." But the actual graphics? They simply won't have any argument ammo for that claim.

And how much power would a 56 CU GCN GPU at 1800 MHz on GDDR6 draw? Certainly sounds like console specs.

1800 MHz at 12.9 TF are the numbers I've been seeing suggested.

An RTX 2080 costs £600-£700 in the UK, and you're telling me you'll get equivalent performance in a sub-£500 console? I find that hard to believe.

Either 2080 will come down a lot, or sacrifices will be made.

Specs for the RTX 2080 vs. a 56 CU GCN GPU with the Radeon VII feature set:

| Spec | RTX 2080 | GCN, 56 CUs (VII feature set) |
|---|---|---|
| FP32 | 11.8 TFLOPS at ~2000 MHz (CUDA FP cores) | 12 TFLOPS at 1680 MHz (shared with INT32) |
| INT32 | 11.8 TIOPS at ~2000 MHz (CUDA INT cores) | 12 TIOPS at 1680 MHz (shared with FP32) |
| Combined programmable compute rate | 23.6 TOPS | 12 TOPS |
| Rapid packed math | Double-rate FP16, quad-rate INT8 | Double-rate FP16, quad-rate INT8 |
| Geometry-raster engines | 6 | 4 |
| L2 cache | 4 MB | 4 MB |
| ROPs | 64 at ~2000 MHz | 64 at 1680 MHz |

The RTX 2080 figures don't factor in the Tensor cores, which add 80.5 TFLOPS FP16 (capable of being re-mapped through DirectML). It's unlikely that NAVI on consoles will exceed 4 geometry-raster engines.
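The combined-rate figures in the RTX 2080 and GCN specs above can be reproduced with a few lines of Python. This is a rough sketch under stated assumptions: the RTX 2080's 2944 CUDA cores (a published figure, not given in the post), one FMA (2 FLOPs) per lane per clock, INT32 quoted at the same rate as FP32 (the convention the specs above use), and fixed x2/x4 packed-math multipliers for FP16/INT8. It ignores Tensor cores and real-world scheduling, so treat the output as peak paper specs only.

```python
from dataclasses import dataclass

LANES_PER_GCN_CU = 64  # assumption: standard GCN layout


@dataclass
class Gpu:
    name: str
    fp32_lanes: int         # FP32 ALUs (CUDA cores or GCN stream processors)
    clock_mhz: float
    concurrent_int32: bool  # True if INT32 has its own lanes running alongside FP32

    def fp32_tflops(self) -> float:
        # One FMA (2 FLOPs) per lane per clock.
        return self.fp32_lanes * 2 * self.clock_mhz * 1e6 / 1e12

    def combined_tops(self) -> float:
        # Separate INT32 lanes add a second stream of ops at the same rate;
        # shared lanes split a single budget between FP32 and INT32.
        fp32 = self.fp32_tflops()
        return 2 * fp32 if self.concurrent_int32 else fp32

    def packed_rates(self) -> dict:
        # Assumed packed-math multipliers: FP16 at double rate, INT8 at quad rate.
        fp32 = self.fp32_tflops()
        return {"FP16": 2 * fp32, "INT8": 4 * fp32}


rtx_2080 = Gpu("RTX 2080", fp32_lanes=2944, clock_mhz=2000, concurrent_int32=True)
gcn_56 = Gpu("GCN 56 CU", fp32_lanes=56 * LANES_PER_GCN_CU, clock_mhz=1680,
             concurrent_int32=False)

for gpu in (rtx_2080, gcn_56):
    # RTX 2080: 11.8 FP32, 23.6 combined; GCN 56 CU: 12.0 FP32, 12.0 combined.
    print(f"{gpu.name}: {gpu.fp32_tflops():.1f} TFLOPS FP32, "
          f"{gpu.combined_tops():.1f} TOPS combined")
```

The design choice that matters is the `concurrent_int32` flag: it is what doubles the RTX 2080's combined rate while leaving the GCN part at its FP32 figure.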

Vega 56 at 1710 MHz rivals, or sits just above, the RTX 2070.

Vega 56 at 1710 MHz didn't beat the RTX 2080.

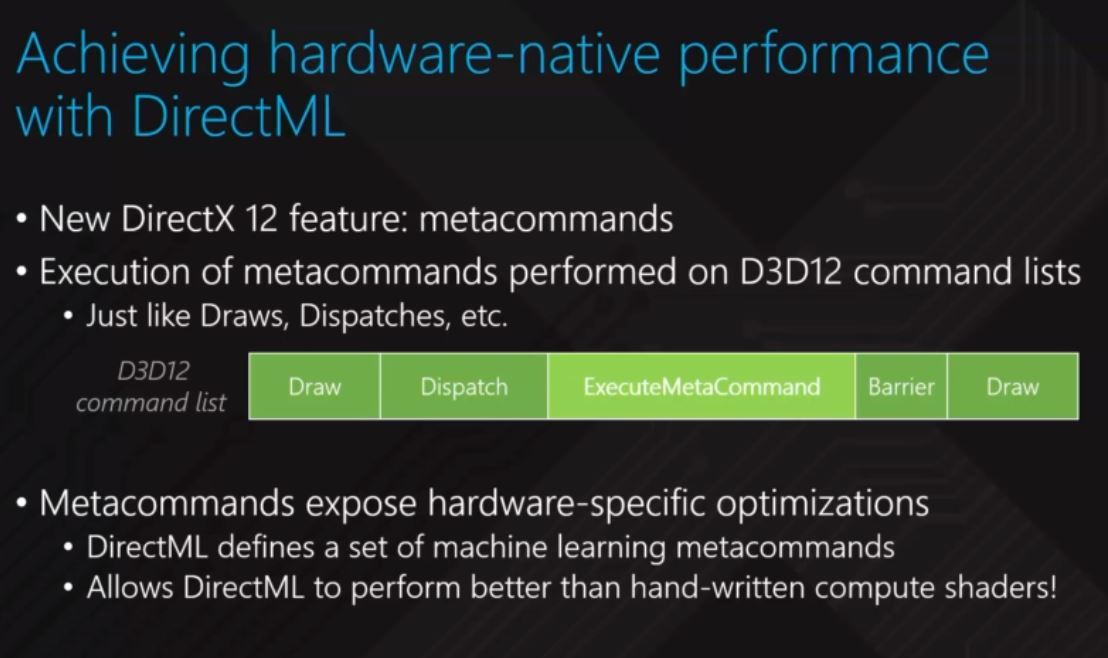

Microsoft plans to introduce DirectML, which would let newer GPUs use rapid packed math and Tensor cores through consistent API access.

"Standardized optimization for console-specific hardware" is nearly a non-issue on PC, since NVIDIA GPUs have NVAPI.

RTX's full potential (rapid packed math on both the CUDA cores and the Tensor cores) is coming with DirectX 12's DirectML access.

AMD's Vega GPUs also use DirectX 12's DirectML access for rapid packed math.

Microsoft is building the Windows PC as the "open Xbox", with Xbox One-style official Direct3D to-the-metal access.

AMD is not the only company throwing TFLOPS at the problem.

The PS5 looks to have RTX 2080 performance in console form next gen, which means far greater actual game performance thanks to standardized optimization for console-specific hardware.

An RTX 2080 costs £600-£700 in the UK, and you're telling me you'll get equivalent performance in a sub-£500 console? I find that hard to believe.

Either 2080 will come down a lot, or sacrifices will be made.

Sure, I think Nvidia will rebrand the 2080 before or around the PS5's release to bring the price down. Nvidia are all about rebranding out of the blue.

We already know the PS5 will be 11-13 TF and that Sony won't go over $500 retail, so maybe that's why investors were predicting those 20 million PC gamers would cross over to consoles?

I'm drooling thinking about what Sony's first party can do with all that power. Power is meaningless without the talent to harness it, and Sony's devs have shown themselves to be masters of their craft over the years. What they did with the base PS4's 1.8 TFLOPS is beyond outstanding, and I shudder to think what they can do with 10 times that or more. HZD2 and GOW2 will melt eyeballs.

And how much power would a 56 CU GCN GPU at 1800 MHz on GDDR6 draw? Certainly sounds like console specs.

How do we know it won't be HBM?

An RTX 2080 costs £600-£700 in the UK, and you're telling me you'll get equivalent performance in a sub-£500 console? I find that hard to believe.

Either 2080 will come down a lot, or sacrifices will be made.

The 20 series cards are a scam IMO, so I expect Nvidia to lower their prices in time for next gen. Right now we are paying the Nvidia tax to get access to ray tracing from them.

It's why I chose to wait until the next-gen consoles are out before upgrading my card (I'm still rocking a 1080). Once the new consoles are out, Nvidia will finally have competition again, and they will lower prices and release new cards to stay competitive. That's when I'll bite and upgrade my PC.

Got my X for $220. Go play your hot stack of pancakes that plays games worse than the base version.

Who said the X1X would be more powerful than next gen consoles?

I don't know about that, but I certainly remember people saying it would be stronger than high-end PCs.

And how much power would a 56 CU GCN GPU at 1800 MHz on GDDR6 draw? Certainly sounds like console specs.

How do we know it won't be HBM?

HBM v2 is garbage on AMD hardware.

The Xbox One X has 6 TFLOPS now, and console generational jumps have always more than doubled power.

So it should be expected that Sony and MS will aim higher than 10 TFLOPS.

However, Stadia might be just an app with minimal hardware, a controller and a Chromecast.

For me, I am lucky to have super fast internet and Stadia looks quite attractive. I could spend that $400-500 on games instead of the console.

The next-gen leap will be the least noticeable yet. With the X1X already running native 4K at 30fps, the most we're looking at is 30fps more, which is hardly a giant leap, and probably upscaled 4K at that. So pricing, launch dates, policies, and games will have much more of an effect on console sales than power.

4K sets still aren't in anywhere near the majority of homes, so anyone claiming we're looking at 8K gaming next gen is delusional.

Scaling CPU power by 4x at a 1.6 GHz clock speed enables PC-style NPC counts like those in Ashes of the Singularity.

CPU power dictates the quality and scale of the gameplay simulation.

The GPU only renders the CPU's viewport.

And how much power would a 56 CU GCN GPU at 1800 MHz on GDDR6 draw? Certainly sounds like console specs.

How do we know it won't be HBM?

HBM v2 is garbage on AMD hardware.

Is that something to do with GCN or something?

And how much power would a 56 CU GCN GPU at 1800 MHz on GDDR6 draw? Certainly sounds like console specs.

Includes a real-time power consumption comparison:

https://www.reddit.com/r/Amd/comments/ao43xl/radeon_vii_insanely_overvolted_undervolting/

The VII is insanely overvolted.

AMD has to solve the >170 W power spikes.

Note that this is why MS created its own power curve profile for the X1X.

And how much power would a 56 CU GCN GPU at 1800 MHz on GDDR6 draw? Certainly sounds like console specs.

How do we know it won't be HBM?

HBM v2 is garbage on AMD hardware.

Is that something to do with GCN or something?

Diminishing returns with the VII.

Maybe there's bandwidth being wasted; reduced power draw is one of the obvious factors as well, though.

Quad HBM v2 stacks...

NVIDIA: added 128 ROPs with GV100.

AMD: recycled 64 ROPs from Vega 64. LOL. WTF is AMD doing?
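For a back-of-envelope sense of the bandwidth-versus-ROPs point, peak memory bandwidth is bus width × per-pin data rate / 8, and peak pixel fill rate is ROPs × clock. The figures below are assumptions, not from the thread: the Radeon VII's four 1024-bit HBM2 stacks at 2.0 Gbps per pin, its 64 ROPs at a 1750 MHz boost clock, and, for comparison, a hypothetical 256-bit GDDR6 bus at 14 Gbps. This is a peak-spec illustration, not a measurement of real efficiency.

```python
def peak_bandwidth_gbs(bus_width_bits: int, gbps_per_pin: float) -> float:
    """Peak memory bandwidth in GB/s: total bus width x per-pin rate / 8 bits per byte."""
    return bus_width_bits * gbps_per_pin / 8


def pixel_fill_rate_gpix(rops: int, clock_mhz: float) -> float:
    """Peak pixel fill rate in Gpixels/s, assuming one pixel per ROP per clock."""
    return rops * clock_mhz / 1000


# Assumed Radeon VII figures: four HBM2 stacks, 1024 bits each, 2.0 Gbps per pin.
hbm2_quad = peak_bandwidth_gbs(4 * 1024, 2.0)  # 1024.0 GB/s
# Hypothetical 256-bit GDDR6 bus at 14 Gbps per pin, for comparison.
gddr6_256 = peak_bandwidth_gbs(256, 14.0)      # 448.0 GB/s
# Assumed 64 ROPs at a 1750 MHz boost clock.
fill = pixel_fill_rate_gpix(64, 1750)          # 112.0 Gpixels/s

# Bytes of bandwidth available per pixel when the ROPs run at peak fill rate.
print(f"HBM2 x4: {hbm2_quad:.0f} GB/s, {hbm2_quad / fill:.1f} B/pixel")
print(f"GDDR6 256-bit: {gddr6_256:.0f} GB/s, {gddr6_256 / fill:.1f} B/pixel")
```

With the same 64 ROPs, the quad-stack HBM2 setup offers about 9.1 bytes per pixel at peak fill rate versus 4.0 for the GDDR6 bus, which is one way to read the "bandwidth wasted" question: ROP-bound work may not use all of it.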

@ronvalencia: More NPCs at 30fps higher than now.

Hardly awe inspiring is it?

A city-scale example on PC.

I find it strange that Google isn't just catering to the casual gaming crowd. Who else do I see bothering with Stadia when streaming isn't even mainstream?

Includes a real-time power consumption comparison:

https://www.reddit.com/r/Amd/comments/ao43xl/radeon_vii_insanely_overvolted_undervolting/

The VII is insanely overvolted.

AMD has to solve the >170 W power spikes.

Note that this is why MS created its own power curve profile for the X1X.

AMD is obviously shipping them with a higher base voltage to get better yields, so while you can easily undervolt most of the cards on the market, the weaker chips would just crash.

For the X1X, Microsoft created circuitry that works out the ideal power curve for each individual APU die without end-user intervention, i.e. superior craftsmanship, while AMD is being lazy.
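The per-chip tuning idea can be illustrated with a simple search for each chip's lowest stable voltage. This is a hypothetical sketch, not Microsoft's actual circuitry or AMD's process, neither of which the posts describe: `find_min_stable_mv` and its `is_stable` callback are made-up names (the callback stands in for a real stress test), the voltages are invented numbers, and it assumes a chip that is stable at some voltage is also stable at any higher one.

```python
from typing import Callable


def find_min_stable_mv(
    is_stable: Callable[[int], bool],  # hypothetical stand-in for a stress test
    low_mv: int,
    high_mv: int,
    step_mv: int = 5,
    margin_mv: int = 10,
) -> int:
    """Binary-search the lowest voltage on a step_mv grid at which is_stable()
    passes, then add a safety margin (capped at high_mv).

    Assumes stability is monotonic in voltage and that high_mv is stable.
    """
    if (high_mv - low_mv) % step_mv:
        raise ValueError("voltage range must be a whole number of steps")
    if not is_stable(high_mv):
        raise ValueError("chip is not stable even at high_mv")
    lo, hi = 0, (high_mv - low_mv) // step_mv  # indices into the voltage grid
    while lo < hi:
        mid = (lo + hi) // 2
        if is_stable(low_mv + mid * step_mv):
            hi = mid  # stable here, so try lower voltages
        else:
            lo = mid + 1  # unstable, so the answer is higher
    return min(low_mv + hi * step_mv + margin_mv, high_mv)


# Two simulated chips with different (made-up) minimum stable voltages.
print(find_min_stable_mv(lambda mv: mv >= 1012, 900, 1200))  # 1025
print(find_min_stable_mv(lambda mv: mv >= 1100, 900, 1200))  # 1110
```

A single one-size-fits-all voltage of 1200 mV would keep both simulated chips stable, which is the yield argument, but a per-chip search lands each one just above its own threshold.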

For me, I am lucky to have super fast internet and Stadia looks quite attractive. I could spend that $400-500 on games instead of the console.

Lmao, you won't own any games on Stadia, fool.

I find that hard to believe, considering AMD's most powerful GPU on the market right now is the 14 TF Radeon VII, which at 14 TF still can't beat the 11 TF 1080 Ti and barely beats the non-Ti 1080. Everybody knows that if you want a high-end GPU, you go with Nvidia, because they have no competition in the high-end GPU market. I'm concerned about how they (MS and Sony) are going to go much higher than what the X has to offer, because based on rumors we're to expect 2080 Ti levels of performance. Yet AMD has no GPU to compete with the 2080, let alone the 2080 Ti/1080 Ti.

How can you put that much power in a small box while maintaining safe operating temps? I also find it weird that AMD would not release a high-end PC GPU and would keep letting Nvidia dominate that space, while instead building a high-end GPU for consoles that they won't make as much money from. Realistically, I expect both consoles to use something along the lines of a Ryzen 5 1600 paired with an RX 590, with GPU and CPU clocks tuned down to help manage heat.

People are setting their sights too high. Now that consoles basically use off-the-shelf PC parts, the ceiling for consoles is a little easier to guesstimate. As long as consoles continue to use AMD, they will never be able to compete with high-end GPUs, because that space belongs to Nvidia. When it comes to gaming, it's always been Intel+Nvidia > AMD. Remember, Ryzen has not changed that narrative; Ryzen is just the "bang for your buck" king. If you're going for the best FPS/resolution, Nvidia+Intel is the definitive answer. #Undisputed

An RTX 2080 costs £600-£700 in the UK, and you're telling me you'll get equivalent performance in a sub-£500 console? I find that hard to believe.

Either 2080 will come down a lot, or sacrifices will be made.

The Xbox 360 in 2005 had performance equivalent to a $550 video card, yet it sold for $300 to $400. MS was eating a $125 loss per unit, as I remember well.

Those chips won't cost Sony and MS anything close to what you pay for a card. They buy in the millions, those are chips that otherwise would never have been sold, and the deal instantly makes that GPU the best seller.

For example, AMD's 7870 is the best-selling single GPU model in the GCN line, thanks to the PS4.

Now, I'm not saying it will come with 2080-level performance at all, just that they simply won't pay what you would on the market.
