A turd in dynamic 4K is still a turd.

Changing the resolution isn't gonna save that turd of a game. LOL @ butthurt lems still comparing the piece of shit to Uncharted.

You've never even played it, silence.

@kuu2:

It was announced during Gamescom that QB would get the 4K treatment.

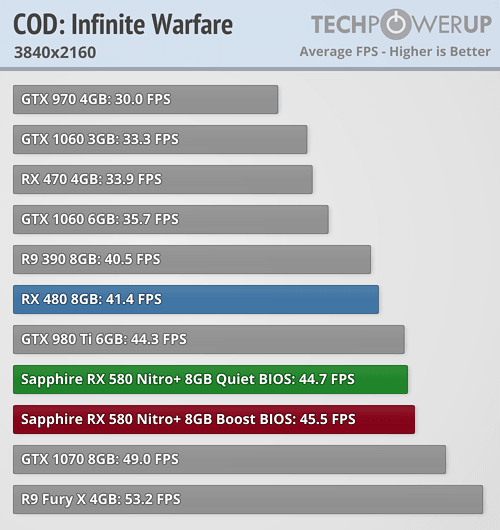

4K with a 30 fps target at medium settings on a GTX 980 Ti (~6 TFLOPS).

Nvidia TFlops =/= AMD TFlops and you know that.

It could still end up being 4K but your video isn't proof.

AMD's TFLOPS are not the problem, i.e. AMD's graphics pipeline and the effective memory bandwidth surrounding the CUs' ALUs are the real problem. LOL.

You can compare AMD and NV FLOPS only under specific conditions.

Pure GPGPU workloads don't involve the graphics pipeline. AMD GPUs have issues converting the above TFLOPS into graphics performance.

In certain situations, AMD's TFLOPS rival their NVIDIA counterparts.

X1X's GPU modifications were aimed at certain 3D engines, e.g. Unreal Engine 4. COD IW wouldn't need X1X's GPU modifications since it has avoided GCN's well-known performance landmines.

My posted video is only meant as an indication for a 6 TFLOPS class GPU with higher memory bandwidth.

On TFLOPS, AMD's DSP is about on par with NVIDIA's Paxwell DSP.
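For anyone wondering where the "~6 TFLOPS" numbers come from: they are theoretical peaks, usually worked out as 2 FLOPs (one fused multiply-add) per shader per clock. A rough sketch of that arithmetic follows. The shader counts and clocks are assumptions taken from publicly listed specs, not from this thread, and `peak_tflops` is just a made-up helper name.

```python
def peak_tflops(shaders: int, clock_mhz: float, flops_per_clock: int = 2) -> float:
    """Theoretical FP32 peak: shaders x clock x 2 (one FMA counts as 2 FLOPs)."""
    return shaders * clock_mhz * 1e6 * flops_per_clock / 1e12


# Assumed public specs (shader count, clock in MHz); not taken from this thread.
gpus = {
    "GTX 980 Ti (boost clock)": (2816, 1075.0),
    "Xbox One X": (2560, 1172.0),
}

for name, (shaders, clock_mhz) in gpus.items():
    print(f"{name}: {peak_tflops(shaders, clock_mhz):.2f} TFLOPS")
```

This is peak math only. It says nothing about how much of that peak a game actually gets out of the graphics pipeline, which is the whole argument here.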

@i_p_daily: lol and then you went on to claim that he was one of those people who think UC4 is greatness, but of course you left that out. Those people far outnumber you and your butthurt.

The clown is so upset. Uncharted 4 is universally acclaimed and considered a masterpiece by the industry and millions of gamers while Quantum Flop is a forgotten flop only brought up by angry neckbeard lems like him in forums. The industry has moved on and pretty much forgotten Quantum Fail and it makes him very angry and upset lol.

@ronvalencia: Congrats, you just wasted your time posting a bunch of shitty charts that still don't prove your point.

You don't need to eat shit to know it probably tastes bad.

Well maybe in your case you do, I'm not judging you lol.

Your "Nvidia TFlops =/= AMD TFlops and you know that." is a load of BS when the real problem is NOT AMD's TFLOPS.

AMD's TFLOPS are good for Ethereum mining and similar to their Pascal counterparts. That's pure math operations and memory bandwidth, with no graphics pipeline involvement (e.g. Delta Color Compression).

You're joking if you actually think I read your posts. Don't bother replying to me, Wrongvalencia. You're always wrong and you type paragraph essays that are full of shit to back up your wrong statements. You never admit when you fail in an argument.

AMD TFlop =/= Nvidia TFlop and that's that.

You don't have the Xbone GPU benchmarks to prove the rubbish you're posting. The game may or may not be native 4K when it releases on Xbone (I doubt it), and until we get official confirmation from DF, your posts are horseshit.

You assign blame to things that are not the real problem.

DF overclocked Vega 56's memory to Vega 64's 484 GB/s, and the result puts Vega 56 very close to the GTX 1080 in game and TDP results.

If AMD configured Vega with 44 CUs, a 1.7 GHz clock (important for non-CU hardware) and 484 GB/s memory bandwidth, then it might have a GP104 clone.

Memory bandwidth potential, ignoring latency factors:

Vega's 484 GB/s x Polaris 1.36 DCC boost = 658 GB/s

GTX 1080's 320 GB/s x Pascal 2.00 DCC boost = 640 GB/s

Similar memory bandwidth potential with similar results.
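The bandwidth arithmetic in the post above is easy to check. A minimal sketch, taking the raw bandwidth figures and DCC multipliers exactly as the poster quoted them (the multipliers are the poster's claims, not measured values); `effective_bandwidth` is a made-up helper name.

```python
def effective_bandwidth(raw_gb_per_s: float, dcc_factor: float) -> float:
    """Raw memory bandwidth scaled by a claimed DCC multiplier (latency ignored)."""
    return raw_gb_per_s * dcc_factor


# Figures as quoted in the post; the DCC factors are the poster's assumptions.
vega = effective_bandwidth(484, 1.36)
gtx_1080 = effective_bandwidth(320, 2.00)

print(f"Vega:     {vega:.0f} GB/s")
print(f"GTX 1080: {gtx_1080:.0f} GB/s")
print(f"Ratio:    {vega / gtx_1080:.2f}")
```

Under those assumptions the two land within about 3% of each other, which is all the post is claiming. Whether the DCC factors hold up in real games is a separate question.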

You're full of shit, cow dung.

Quantum Break = Xbox version of The Order 1886 - Monumental FLOP

I would say Quantum Break is a much bigger flop given that it was made by the creators of Max Payne and Alan Wake. The Order was made by a dev that previously only made handheld games.

There were a lot of people calling Remedy the "Naughty Dog of Xbox" before Quantum Break released. The Order never had much hype. It released near Bloodborne and that game was getting all the hype.

Hope this exposes this great game to more people. Most of the bad reviews had 2 things in common:

1. Reviewers tried to play this game as a third-person cover shooter, when it was never meant to be played that way, given all the awesome time-bending powers.

2. The game was checkerboarded from 720p to 1080p. Funny how that works, right, PS4 Pro fans?

I give the game a solid 8. The drawbacks were that the game is a little short and the last battle is confusing and just dumb. They could have made a horde mode multiplayer and added DLC to give the game some longevity. I would love a sequel. The actors were great. Way better gameplay than Alan Wake.

LOL the game couldn't even do 1080p.

So I'm sure the 4k will be an upscaled blur just like the 1080p was an upscaled blur.

Don't wanna join the hatewagon here in this thread, as I think QB was a good game, but its upscaling was a blurry mess.

yeah and native 1440p was way too much for my old 980 Ti to handle. Haven't tried the DX11 version tho, which is apparently much better, especially for Nvidia cards

You're wrong wrongvalencia

Try again

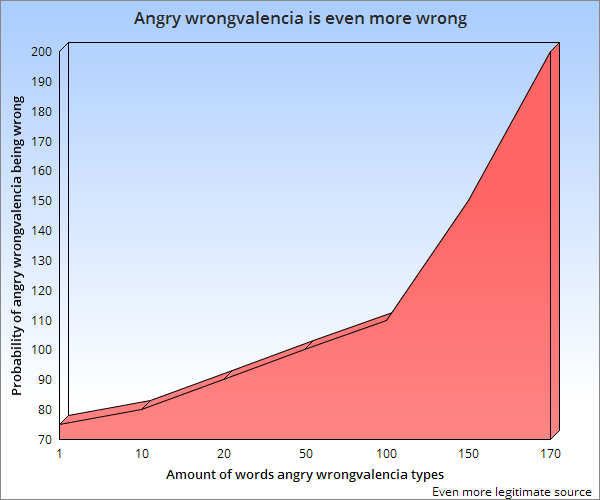

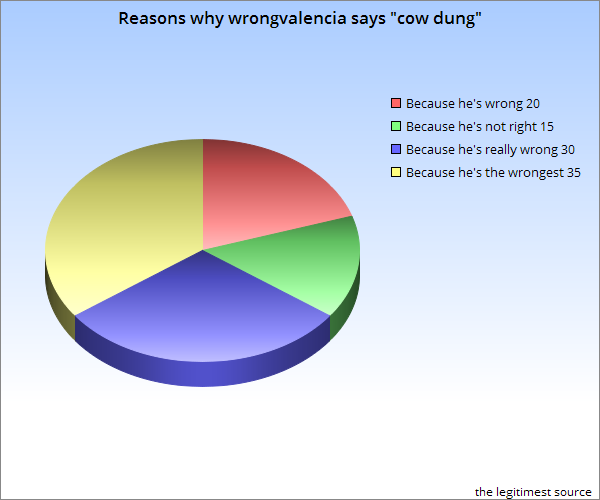

You clearly wrote more than 50 words. And yes the probability goes over 100 but the chart isn't wrong, that's just how wrong you can get.

Another non-system wars post coming from cow dung.

What!? I tried to use your chart language to see if it works better than English and now you cry foul? It's a chart, wrongvalencia, deal with it! My charts don't lie, unlike yours.

@drlostrib: You're right. Luckily I don't read his charts

You don't have any counter arguments.

Techreport/Beyond3D FLOPS benchmark >>>>>>>>>>>>>> YOU.

Another non-system wars post coming from cow dung.

Uh oh. The charts are not looking good wrongy

Another non-system wars post coming from cow dung.

Better than a lot of shit on the PS4. lol

Not really. It was so bad that Microsoft and Remedy parted ways.

More indisputable proof you're wrong

Wrong, Remedy modified Quantum Break for X1X. The outsourcing contract was just for the Quantum Break project, nothing more, nothing less.

Another non-system wars post coming from cow dung/mowgly1/PinkAnimal/dakur.

On PC I'm offered the choice to turn off the upscaling, which makes the game run like total crap (even though my hardware more than suffices to handle it).

Or leave the upscaling on and make everything look really blurry.

At least on Xbox One I could understand it, because consoles are underpowered. But after how great Alan Wake and other Remedy games ran on PC, I was kinda expecting better from them.

The PC version is total whack.

Judging from medium settings, max settings for QB seem to be wasteful...

If the game is going to run as poorly as it has then 4k is a pipe dream for the Xbox One X.

Even with the lowest settings set on PC at 4k res my 1080ti couldn't hold 60fps. Although most of the time the gpu usage was hovering around 70-78%.

I can't get this game to fully utilize my GPU.

Also at max settings at 4k without upscaling (so native 4k) my fps is in the low 20s.

If I want to play at ultra settings smoothly at 4k I am forced to use the upscaling setting, which is not real 4k. And even then I have to cap the fps at 30, otherwise it is not a smooth experience with vsync enabled. If I disable vsync it's a lot smoother with the fps cap above 30fps, but then I get lots of screen tearing.

Quantum Break is one of the most unoptimized games I have ever come across.

@RyviusARC: Steam DX11 or the winstore one? I haven't bothered reinstalling the game and only got the winstore one

QB flopped hard.

Like The Order, Killzone SF and Driveclub? At least QB scored higher than these games.

Run it on medium settings.

Intel Core i7 6700K 4.00 GHz

16 GB DDR4 RAM

Palit GeForce GTX 980 Ti Super Jetstream 6GB

MSI Z170A PC Mate Mainboard

Windows 10 Pro 64bit

Didn't you read my post?

Even at the lowest settings my 1080ti can't hold 60fps at 4k native res. The game wouldn't fully utilize my GPU.

Believe it or not, I actually played this through to completion. I won't say it was bad (the TV portions definitely felt like a low-rent CW show, though)...

It was just very forgettable. I could really only tell you one or two story beats without looking up a playthrough.

It was a looker, though.

So, according to DF, QB is 1440p checkerboard on XboneX

True 4k

According to DF, ROTR on X1X is native 4K while ProOfShit4 has 4K CB.

I played this through twice. Don't feel a desire to play it again anytime soon in any resolution. Liked the story and mix with the show. LOL at the hate from people here. These same people swear by acclaim by others with other games but ignore the fact this game is positively received by the industry. Cherry picking for the win. JAPANIMEEEEEEE GOOOOOOOOOO!!!!

Wrongvalencia ends up with another shitty wrong prediction. Who knew he was talking out of his ass? I'm not surprised at all.
