@tormentos said:

@ronvalencia said:

@boxrekt: NAVI is not GCN.

AMD claims NAVI is designed for scalability.

7950 and 7870 have the same geometry input, rasterization (raster engine) and ROPs hardware. The 7950 has the advantage at higher resolutions.

https://www.techpowerup.com/review/amd-hd-7850-hd-7870/26.html

AMD can't afford to repeat Vega's scaling debacle when competing against multi-SKU Turing, let alone Ampere.

Based on the built-in benchmark of a raw, two-week-old Gears 5 port at PC Ultra settings, the XSX GPU delivers RTX 2080 level results, hence its RDNA 2 TFLOPS are scaling.

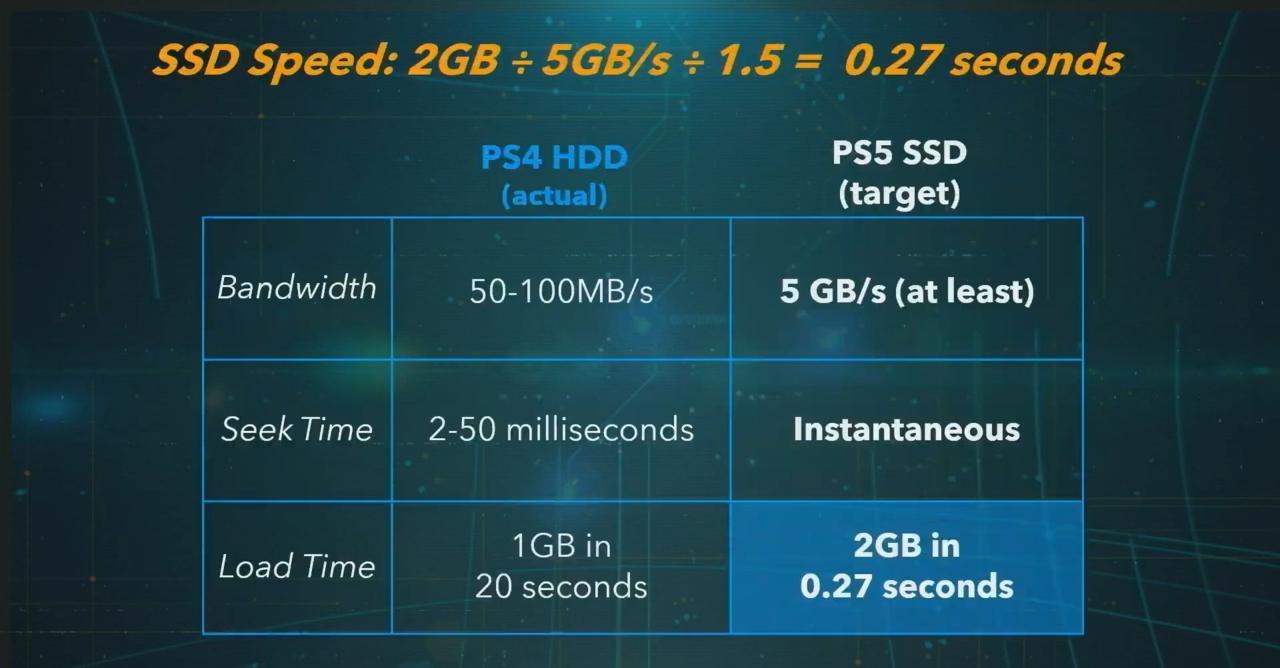

The PS5 vs XSX comparison is like an overclocked RTX 2070 at 2230 MHz (10.28 TFLOPS) with 448 GB/s bandwidth (best case, with the CPU's memory access rates throttled by PS4 style mitigation) against an MSI RTX 2080 Super Gaming X Trio with 12.155 TFLOPS and 496 GB/s (PC style brute force, where the CPU has PC style memory access rates).

And the PS5 and Xbox are not Turing based or from Nvidia.

Nor can the 3.5 GB of slower memory be used like the Killzone SF example, because Sony is not MS and Killzone is not from MS either.

So for someone who constantly uses examples that don't represent the hardware in question, I say you look sad stating Navi isn't GCN.

Actually, RDNA's move towards wave32 compute length is influenced by NVIDIA CUDA's warp32 compute length (aka wave32 under Shader Model 6).

Many NVIDIA Gameworks shader programs are designed around CUDA shader size, hence gimped on GCN's wave64. This is one of many reasons why you see GPUs like RX Vega 56 with 10.54 TFLOPS getting rivaled by GTX 1070 with ~6.5 TFLOPS. API abstraction has limits and this is one of them.
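One way to picture the wave64 penalty is lane occupancy. The sketch below is a simplified model, not a measurement: it assumes a shader dispatches work in fixed-size groups and that any lanes left over in the last wave sit idle. The function name `lane_utilization` and the group sizes are made up for illustration, and real GPUs have other factors (occupancy, caches, scheduling) that this ignores.

```python
import math


def lane_utilization(active_threads: int, wave_width: int) -> float:
    """Fraction of SIMD lanes doing useful work for one thread group.

    Hypothetical model: threads are packed into waves of `wave_width`
    lanes and unused lanes in the final wave are wasted.
    """
    if active_threads <= 0 or wave_width <= 0:
        raise ValueError("thread count and wave width must be positive")
    waves_needed = math.ceil(active_threads / wave_width)
    return active_threads / (waves_needed * wave_width)


if __name__ == "__main__":
    # Thread-group sizes chosen for illustration only.
    for group_size in (32, 64, 96):
        for width in (32, 64):
            util = lane_utilization(group_size, width)
            print(f"group={group_size:3d} wave{width}: {util:.0%} of lanes busy")
```

Under this model a 32-thread group fills a wave32 completely but leaves half of a wave64 idle, while a 64-thread group simply becomes two full wave32s.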

AMD needs to be involved in the game's development process to create a game-ready driver with shader replacements.

NVIDIA's wave32 can easily support GCN's wave64, but not the other way around.

In GCN based game consoles, GCN wave64 shader consideration is built into the console market, but gaming PC is the largest single gaming platform and it takes the combined game console market to beat it.

Under RDNA v1, the move towards wave32 includes a 7 clock cycle instruction retirement latency instead of 8 cycles with GCN wave64, which is about a 12.5 percent perf/watt improvement.

Vega GCN has a 12 clock cycle instruction retirement latency, hence RDNA v1 in GCN BC mode has a 33% perf/watt improvement. This is partly how AMD builds its 50% perf/watt improvement on the same 7nm process as Radeon VII.
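The percentages above are plain relative reductions in cycle count. The sketch below only reproduces that arithmetic using the cycle figures quoted in this post. It assumes the 33% figure compares Vega's 12 cycles against the 8 cycle wave64 figure, which is my reading of the numbers rather than something stated outright, and `cycle_reduction` is just an illustrative helper name.

```python
def cycle_reduction(old_cycles: float, new_cycles: float) -> float:
    """Relative reduction in retirement latency, as a fraction of the old value."""
    if old_cycles <= 0:
        raise ValueError("old_cycles must be positive")
    return (old_cycles - new_cycles) / old_cycles


if __name__ == "__main__":
    # Cycle counts are the ones quoted in the post, not measured values.
    print(f"8 -> 7 cycles:  {cycle_reduction(8, 7):.1%}")
    # Assumption: the 33% claim is Vega's 12 cycles vs the 8 cycle figure.
    print(f"12 -> 8 cycles: {cycle_reduction(12, 8):.1%}")
```

Treating a latency reduction as an equal perf/watt gain is the post's simplification, not something the code proves.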

RDNA v2 has further improvements, with AMD claiming another 50% perf/watt improvement over RDNA v1, e.g. RDNA 2 being designed for higher clock speeds and possibly lower latency.

The old Raja Koduri regime that relies on many wave64s to hide pipeline latency is over.

Many years ago, these major TeraScale and GCN compute issues were in the back of my mind when I compared them against NVIDIA CUDA.

NVIDIA's Maxwell v2 was the "Core 2 Duo" moment for AMD. Maxwell v1 was the "Core Duo" warning to AMD.

I don't need MS when discussing gaming hardware.

NVIDIA is not Intel, hence it's harder to pull off a "Ryzen" style disruption for AMD.

TFLOPS comparisons assume 0 latency, since instruction retirement clock cycles are not factored in, hence they are only useful for comparisons within the same design family.
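For reference, the peak FP32 figures thrown around in this thread come from a formula with no latency term at all: shader count times 2 FLOPs per clock (one fused multiply-add) times clock speed. A minimal sketch follows. The shader counts (2304 for the RTX 2070, 3584 for RX Vega 56) and the 1471 MHz Vega 56 boost clock are my assumptions from public spec sheets, not numbers given in the post.

```python
def peak_tflops(shaders: int, clock_mhz: float, flops_per_clock: int = 2) -> float:
    """Theoretical peak FP32 TFLOPS; assumes every lane retires an FMA each clock."""
    return shaders * flops_per_clock * clock_mhz * 1e6 / 1e12


if __name__ == "__main__":
    # Assumed shader counts and clocks (see the note above).
    print(f"2304 shaders @ 2230 MHz: {peak_tflops(2304, 2230):.2f} TFLOPS")
    print(f"3584 shaders @ 1471 MHz: {peak_tflops(3584, 1471):.2f} TFLOPS")
```

Nothing in the formula knows how many cycles an instruction takes to retire or how full the waves are, which is why the number only ranks GPUs sensibly inside one architecture.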

Summary

- AMD has tackled wave compute length format issues.

- AMD has to tackle the instruction clock cycle retirement latency issue.

- Renewed competition is a win for consumers.
