New CPU and GPU news is expected.

Phil Spencer is in the house as well!

A Look Into Next Gen: AMD CES Keynote Thread

I've pretty much decided to go AMD with my next card anyway. This is encouraging. So sick of Nvidia and their bullshit. The problem is that historically they have genuinely offered excellent hardware, so they've deserved first place based on the numbers alone... and so I, like many, go with them. But they suck nuts as a company, and these days you can get something tolerably competitive from AMD in the mid-high range where I like to be. So why give Nvidia my money? Let them earn it back, I say.

Sounds like this thing will still require 285 watts just like Vega 64 to get the performance being shown...

This thing is fail

Navi is the savior we needed

Don't know about fail, as it's a potent card for the price range that goes toe to toe with the RTX 2080, but I'm thinking the wattage will be high compared to RTX since AMD didn't mention it, while they were quick to make a note of it in the case of Ryzen. It's crazy when you think about it because it's on 7nm and RTX is essentially 16nm with improvements (I know Nvidia/TSMC calls it 12nm).

Though good to see the card has double the bandwidth and RAM.

Hmm. So is it basically a Vega 60 on 7nm and they clocked the nards off it, or did they do more? On the one hand, I'm thinking of finally biting the bullet on a 4K screen (Asus have made a monitor that's nigh on perfect for me. I swear they are stalking my posting history :P). But I don't think my 4GB RX 580 is going to cut the mustard with 4K. Hmm... still might hold off.

@neutrinoworks: Is wattage that much of a problem though? Good quality high wattage PSUs are fairly cheap these days.

So, another electron-guzzling miniature frying pan. And it's $700.

Meanwhile, I just found an RTX 2070 that's very attractively priced and that I know for sure will fit in my ITX case. I'm only holding off for now because it's from a brand (Zotac) I know nothing about...

Do better, AMD, or I'm gonna buy it.

@neutrinoworks: Is wattage that much of a problem though? Good quality high wattage PSUs are fairly cheap these days.

Less power also means less heat and potentially more overclocking headroom.

And easier to keep noise levels down

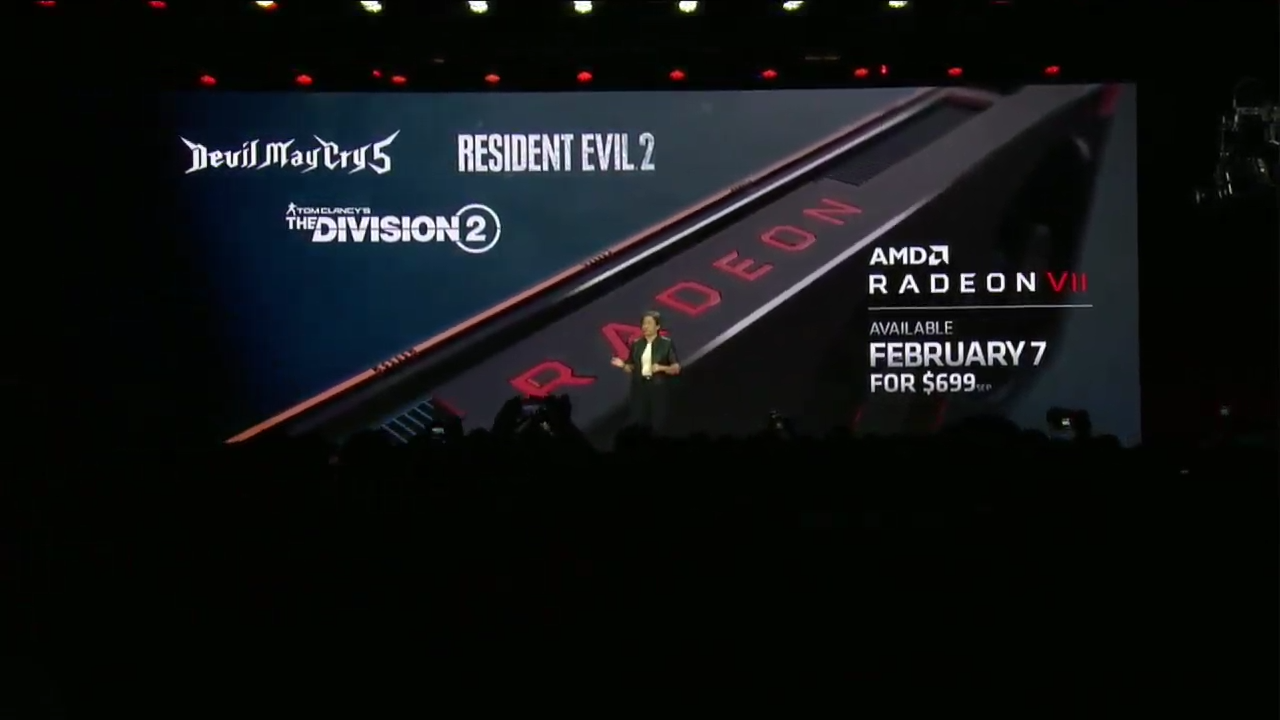

Honestly, this is even more disappointing than the RTX lineup; the main issue with the RTX cards is the price. This Radeon VII is on the hyped-up 7nm and yet it uses more wattage than the RTX 2080 and is priced at $699? If AMD had done that for $499, that would have been a total shot across Nvidia's bow. But all in all, it's priced at $699 because of the 16GB of VRAM and the fact that it's better for video editing and "content creation", and when you look at it that way, the price is okay-ish. Everybody should have known AMD weren't going to match the 2080 Ti in performance, unfortunately. Does it have HDMI 2.1 though? I still can't find the info, but I'm assuming no, which is total BS if it doesn't!

All that said and done, the AMD Radeon VII is really not bad for a compute card, but the problem is that the Vega architecture is just not optimized for gaming. AMD said OpenCL performance is improved by 62%, which is absolutely massive since it will put it way ahead of the RTX 2080 Ti in a lot of OpenCL benchmarks.

At this point, it's best to wait for tech YouTubers' benchmarks before making a final decision.

This is underwhelming. If it had been released for $499.99, then AMD would have been onto something. This is interesting now as next gen consoles are in the works; I see a bigger impact on the CPU side of things instead of the GPU to keep costs down on the consoles.

Same performance as the 2080 and the same price? Welp, another card that doesn't pique my interest. Sticking with my good ol' 1070. If it's basically the same as the 2080 and priced the same, what's the point? Unless you really, really want to jump ship to Team Red, if I were upgrading I'd rather just get the 2080.

Anyone who didn't buy those overpriced RTX cards and waited is being rewarded for their patience.

Or they could've waited for a better mid-range RTX card like the latest RTX 2060? I admit, I was really impressed by what I saw from the benchmarks, and for only $350, not too shabby.

@davillain-: The main attraction is the content creator application of the card. 16GB of RAM comes in handy when rendering. I would like to see the performance for Unity's GPU-accelerated lightmapping since it runs on Radeon Rays.

I'm sure the Radeon VII will do fine on its own. This announcement is a lot to take in, but the 16GB is the most interesting part.

@davillain-: If it beats RTX2080 in games while being priced roughly the same, that's damn well done by AMD. Nvidia needs competition.

Totally. I'm sick of Nvidia taking all the fun away without competition. Intel better come through with their own GPU in the future.

@neutrinoworks: Is wattage that much of a problem though? Good quality high wattage PSUs are fairly cheap these days.

Less power also means less heat and potentially more overclocking headroom.

And easier to keep noise levels down

That makes sense. Personally I don't see those as detracting factors, but I can see why they would be for others. Overclocking doesn't even get you much of an increase in fps, heat is manageable with proper cooling (which isn't that expensive to get relative to your other PC parts), and I wear headphones so PC noise doesn't bother me.

That extra headroom is also why a 16-core part might be possible.

@techhog89: That would definitely be interesting to see :). Also looking forward to Navi and how all of this will influence next gen consoles. I'm still on board with ray tracing though and I hope AMD adopts it in the coming years.

So, another electron-guzzling miniature frying pan. And it's $700.

Meanwhile, I just found an RTX 2070 that's very attractively priced and that I know for sure will fit in my ITX case. I'm only holding off for now because it's from a brand (Zotac) I know nothing about...

Do better, AMD, or I'm gonna buy it.

Zotac's definitely a good brand. Not as good as EVGA, but their cards are good. Plus, they have a 5-year extended warranty, if that helps.

Maybe AMD will release a cut-down version with 12GB or 8GB of VRAM? Surely that would help with the price and make it more competitive against the 2080, since there are some 2080s going for $699 on Newegg right now.

But then again, AMD drivers are surely better now than Nvidia's 'cause of FineWine, and Radeon VII will for sure beat the 2080 in the future.

Good news, but I'm waiting for news on Intel's new GPU. It's going to crush Nvidia, and I'll support Intel to do it.

@goldenelementxl: Rivaling RTX 2080 with VII is good enough. NVIDIA needs competition.

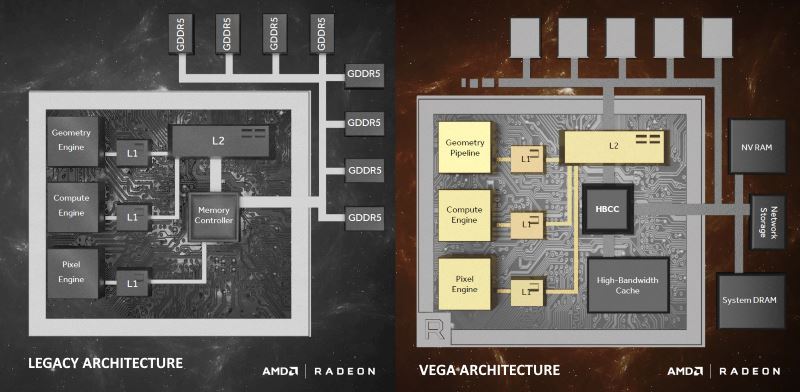

According to AnandTech, the VII comes with 128 ROPs, so in ROP read-write-bound situations the VII would behave differently from Vega 64.

The game benchmarks AMD showed at CES 2019 lean heavily on the compute shader/TMU read-write path. Battlefield V's 3D engine is well known for its software-based, compute-driven tiled rendering.

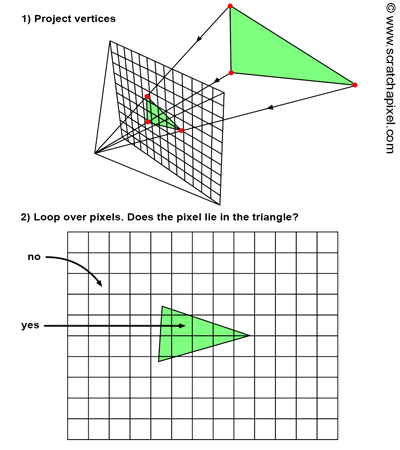

The other bottleneck is rasterization, i.e. the fixed-function hardware for the following concepts.

Good news, but I'm waiting for news on Intel's new GPU. It's going to crush Nvidia, and I'll support Intel to do it.

It depends on whether Raja "Mr TFLOPS" Koduri learns from past mistakes, i.e. that a TFLOPS figure doesn't capture classic GPU hardware performance.
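For anyone wondering why the TFLOPS number says so little about the rest of the chip, here is a rough sketch of how the headline figure is usually derived. It assumes a GCN-style layout of 64 shader lanes per CU and one fused multiply-add (2 FLOPs) per lane per clock. The function name and both example cards are made up for illustration, not official specs.

```python
def peak_fp32_tflops(compute_units: int, clock_mhz: float, lanes_per_cu: int = 64) -> float:
    """Theoretical peak FP32 throughput in TFLOPS.

    Assumes each lane retires one fused multiply-add (2 FLOPs) per clock.
    """
    flops_per_clock = compute_units * lanes_per_cu * 2
    return flops_per_clock * clock_mhz / 1_000_000


# Hypothetical parts: same shader array and clock, different ROP counts.
card_a = {"cus": 64, "clock_mhz": 1500, "rops": 64}
card_b = {"cus": 64, "clock_mhz": 1500, "rops": 128}

tflops_a = peak_fp32_tflops(card_a["cus"], card_a["clock_mhz"])
tflops_b = peak_fp32_tflops(card_b["cus"], card_b["clock_mhz"])

# The ROP count never enters the formula, so both cards report the same TFLOPS.
print(tflops_a, tflops_b)
```

Two cards can tie on paper here and still behave very differently once ROPs, rasterizers, or memory bandwidth become the limit.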

I would like to see a lower-cost VII SKU with 56 CUs at 1800 MHz and 8GB of VRAM.

The Vega 56 already exists.

So much for Navi.

Anyways... $699? Really? Yeesh.

Don't get me wrong, it's probably an amazing card. But am I willing to part with my R9 Fury for this? I dunno. $699 is a steep price for any card.

The Vega 56 already exists.

Not with an 1800 MHz clock speed and 128 ROPs. A higher clock speed also speeds up the existing rasterization hardware.
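To put a rough number on that: theoretical peak pixel fill rate is just ROPs times clock, assuming one pixel per ROP per clock. The configurations below are hypothetical round numbers picked for illustration, not measured or official specs.

```python
def peak_pixel_fill_rate(rops: int, clock_mhz: float) -> float:
    """Theoretical peak pixel fill rate in Gpixels/s (one pixel per ROP per clock)."""
    return rops * clock_mhz / 1000


# Hypothetical configurations, chosen only to show how the two factors scale.
baseline = peak_pixel_fill_rate(rops=64, clock_mhz=1500)
higher_clock = peak_pixel_fill_rate(rops=64, clock_mhz=1800)
more_rops_too = peak_pixel_fill_rate(rops=128, clock_mhz=1800)

print(baseline, higher_clock, more_rops_too)
```

With these made-up numbers the clock bump alone is worth 20%, and doubling the ROPs on top doubles it again. Real games rarely hit the theoretical peak, since memory bandwidth and the rest of the pipeline get in the way.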

So, the price and performance of a 2080 with significantly higher power consumption and without the tensor and ray tracing cores? Meh.

VII's AI instructions are integrated with the main compute units like Tegra X1's SMs.

Which means that if it uses them for AI then they are unavailable for anything else.
