AMD's decision to work with VESA (the standards body for computer graphics) to develop a competitor to Nvidia's G-Sync technology might well turn out to be the right one in the long run, but it hasn't exactly been a speedy launch. Almost a whole year after the first G-Sync displays arrived--during which time Nvidia built up a following for its own variable refresh rate tech--it's only now that the first Freesync monitors are hitting the market. Once again, AMD is left playing catch up, and with all that extra time to develop Freesync, I'd have hoped for a slightly smoother experience from the off. But it's early days yet, and Freesync is very good for the most part. Plus, thanks to its reliance on VESA standards, there's a far better chance of it making its way into all monitors, TVs, and notebooks.

What is Freesync?

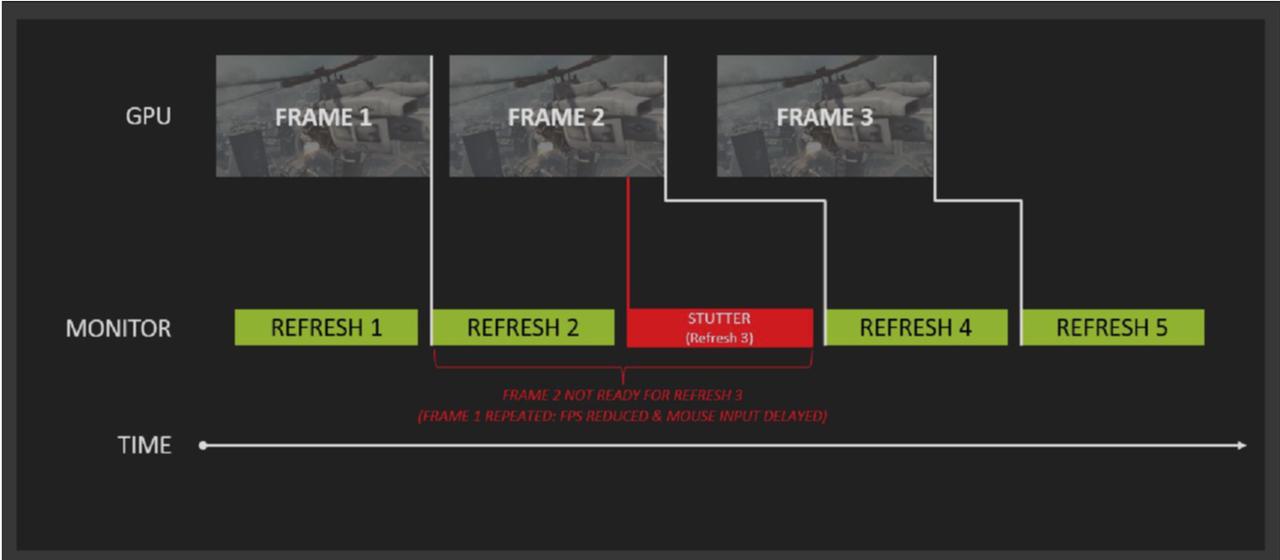

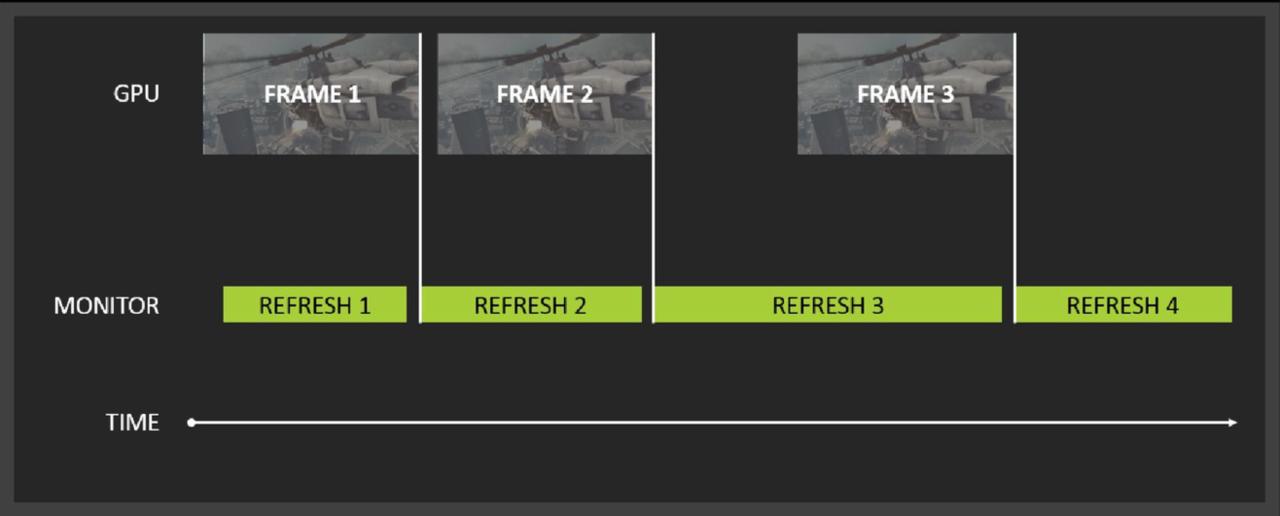

For the uninitiated, Freesync is AMD's solution to problems that some are more susceptible to than others: screen tearing, stuttering, and input lag. All are the result of how GPUs render frames from games or other media, and how monitors display them. Let's take a regular, 1080p, 60Hz monitor for example. The 60Hz part means the monitor displays an image (in this case, a single frame from a game) 60 times a second. In an ideal world, your GPU would feed the display exactly 60 frames every second, and you'd see a smooth image. Unfortunately, GPUs and games don't work that way. The problem is that GPU performance is highly variable, depending on the type of scene it has to render. An obvious example would be a 2D platformer like Super Meat Boy versus a 3D shooter like Battlefield. But even within a game like Battlefield, there's a large variance in frame rates, due to the different degrees of complexity in its indoor and outdoor scenes.

Most people playing modern games will see frame rates from around 30fps to 100fps, depending on hardware and what settings a game is running at. That large variance in frame rate presents a problem for a 60Hz monitor. If the frame rate from the GPU is higher than 60fps, a new frame is sent to the monitor at any point in its refresh cycle, even if it's currently drawing a frame. This results in a situation where the monitor displays half of the old frame it was in the process of displaying, and half of the new frame that the GPU is sending it. This sharp line across the screen where the two frames don't line up is screen tearing, and it can be very distracting. One solution to the problem is to use a display that can handle a higher frame rate. Many gaming-orientated displays work up to 144Hz, but even this more frequent screen refreshing only makes the problem less noticeable; it doesn't remove it entirely.

The other common solution to the problem is V-Sync. When enabled, V-Sync forces a monitor to display images on the screen at fixed intervals, most commonly at 60Hz. While this gets rid of the screen tearing, it can introduce stuttering and input lag. Since the GPU now has to wait for the monitor to be ready for the next frame, the image you see on screen will almost never be the most up to date one that reflects your controller input. If the frame rate of the game drops lower than the refresh rate of the monitor, it has to re-draw the same frame again to make up the difference, which causes stuttering. What Freesync (and Nvidia's competitor G-Sync) does is directly control a monitor's refresh rate via the GPU. This way, the monitor only displays a frame when the GPU is ready, rather than at a fixed interval. The result, in theory, is a super-smooth, lag-free image, regardless of frame rate.
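To make the difference concrete, here is a rough Python sketch of when frames reach the screen. It is a toy model, not how any driver is actually written: it assumes a GPU producing a steady 50fps and a fixed 60Hz display, and the function names are made up for illustration.

```python
import math

def vsync_present_times(ready_ms, refresh_hz=60):
    """With V-Sync, a frame is shown at the first fixed refresh tick at or after it is ready."""
    shown = []
    for t in ready_ms:
        tick = math.ceil(t * refresh_hz / 1000)  # index of the next refresh tick
        shown.append(tick * 1000 / refresh_hz)   # time of that tick in milliseconds
    return shown

def variable_refresh_present_times(ready_ms):
    """Idealised variable refresh: the monitor refreshes the moment the frame is ready."""
    return [float(t) for t in ready_ms]

# Hypothetical workload: a frame completes every 20ms (50fps).
ready = [20, 40, 60, 80, 100, 120]

vsync_waits = [round(shown - t, 1)
               for shown, t in zip(vsync_present_times(ready), ready)]
vrr_waits = [round(shown - t, 1)
             for shown, t in zip(variable_refresh_present_times(ready), ready)]

print("V-Sync wait per frame (ms):", vsync_waits)
print("Variable refresh wait per frame (ms):", vrr_waits)
```

In this model the V-Sync waits change from frame to frame, and the 60Hz tick at roughly 116.7ms has no new frame to show, so the previous one is repeated. The variable refresh waits are all zero, which only holds while the frame rate stays inside the monitor's supported range.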

The Tech

Freesync is actually just AMD's branding for AdaptiveSync, a portion of the DisplayPort standard that enables a variable refresh rate by expanding the vBlank timings of a display; AMD has simply expanded on the standard with drivers and GPUs that support it. Notably, AdaptiveSync is an optional portion of DisplayPort 1.2a, so while it's technically a standard, monitor makers don't necessarily have to implement it--and that doesn't always gel with AMD's message that Freesync is a "No licensing. No proprietary hardware. No incremental hardware costs" solution. While the underlying idea behind AdaptiveSync is technically free, because it's optional, there's still a cost associated with implementing it; display scaler and control chip makers still have to work to support the new standard. That said, now that the tech has been developed, the cost to monitor makers is likely to fall over time.

Nvidia's solution was to build the scaler hardware and sell it directly to monitor makers, with the required G-Sync module adding around $60 to the cost. Naturally, AMD claims that without the cost of the scaler, Freesync monitors are cheaper than the G-Sync equivalent. That's certainly the case when the prices are taken at face value, but as shown in the monitor roundup below, direct apples-to-apples comparisons aren't entirely possible. You also have to factor in the potential cost of a new GPU to use Freesync, thanks to the limited selection that the technology works with. While G-Sync works with pretty much every Nvidia GPU dating back to the 600-series, only AMD's more recent R9 290X, R9 290, R9 285, R7 260X and R7 260 GPUs work with Freesync's variable refresh rates in games.

The biggest difference between the two technologies is how they handle frame rates that are above or below the monitor's refresh rate window. For example, the catchily titled LG 34UM67 ultrawide monitor--one of the first Freesync monitors on the market--sports a supported dynamic refresh range of 48-75Hz. If your GPU puts out a frame rate between those two values, you get all the sweet variable refresh rate smoothness. If your GPU's performance falls outside of that range, you have two options: play with V-Sync enabled and put up with the associated stutter, judder, and input lag, or play with V-Sync off and deal with screen tearing. With G-Sync, there's no option, with games defaulting to V-Sync when performance falls outside of a monitor's range. While that does result in a generally smoother experience, having the choice between the two is a definite win for Freesync, particularly for those sensitive to input lag.
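The behaviour described above boils down to a small decision table. The Python sketch below simply restates it; the function name, its parameters, and the string labels are invented for illustration and don't correspond to any real driver setting or API.

```python
def display_behaviour(fps, range_min_hz, range_max_hz, tech, vsync_enabled=True):
    """Return how frames are presented, following the article's description.

    tech is "freesync" or "gsync" (hypothetical labels for this sketch).
    """
    if range_min_hz <= fps <= range_max_hz:
        return "variable refresh"
    if tech == "gsync":
        # As described above, G-Sync gives no choice outside the range.
        return "v-sync"
    # Freesync leaves the choice to the player.
    return "v-sync" if vsync_enabled else "tearing"

# LG 34UM67: 48-75Hz dynamic refresh range
print(display_behaviour(60, 48, 75, "freesync"))
print(display_behaviour(40, 48, 75, "freesync", vsync_enabled=False))
print(display_behaviour(90, 48, 75, "freesync", vsync_enabled=True))
```

The G-Sync branch ignores `vsync_enabled` on purpose, since that is the one place where the two technologies differ in this simplified picture.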

What's particularly interesting is what happens when the frame rate drops below the monitor's refresh rate range. The folks over at PC Perspective have done a great analysis of this for both G-Sync and Freesync, and it's well worth checking out the article for a very detailed look at the two technologies. But the takeaway is that in a G-Sync monitor, if the frame rate drops below the monitor's range, say to 25fps, the display actually refreshes at 50Hz. In this case the G-Sync module inserts an extra frame in order to avoid the flickering that's associated with lower frame rates. PC Perspective even looked at frame rates as low as 14fps, where the module quadrupled the frame rate up to 56Hz. This is a clever bit of visual trickery, but it does require a local frame buffer in the G-Sync module in order to function.
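The arithmetic behind those numbers is simple frame repetition: show each frame a whole number of times so the panel's refresh rate lands back in a comfortable range. The Python sketch below is only an illustration. The rule Nvidia's module actually uses to pick a multiplier isn't described here, so `floor_hz` is an assumed tuning parameter and both function names are hypothetical.

```python
import math

def smallest_multiplier(fps, floor_hz):
    """Smallest whole number of refreshes per frame that reaches floor_hz or above."""
    return max(1, math.ceil(floor_hz / fps))

def multiplied_refresh(fps, multiplier):
    """Panel refresh rate when every frame is shown `multiplier` times."""
    return fps * multiplier

# 25fps on a panel with an assumed 30Hz floor: each frame is shown twice.
m = smallest_multiplier(25, 30)
print(m, multiplied_refresh(25, m))

# The measured 14fps case used four refreshes per frame.
print(multiplied_refresh(14, 4))
```

Note that with a 30Hz floor, three refreshes per frame (42Hz) would already be enough at 14fps, so the measured 56Hz suggests the module aims somewhat higher than the bare minimum.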

Freesync monitors don't feature such trickery. In the case of the LG 34UM67, its lowest supported refresh rate is 48Hz. If you enable V-Sync, the game treats the monitor like a fixed 48Hz display, locking the frame rate to 48fps. Others, like Acer's XG270HU, drop to a lower 40Hz refresh rate. At these lower refresh rates, with V-Sync on, things like judder are much more pronounced. It's certainly something I noticed when playing on the Freesync monitors, particularly when viewed side-by-side against the Asus ROG G-Sync monitor.

Monitor Mixup

Speaking of monitors, AMD claims that one of Freesync's biggest selling points is that compatible monitors are cheaper than the G-Sync equivalent. The trouble is, direct comparisons at this point are difficult, due to the vast differences in specs. Certainly, the entry point for a 144Hz 1440p monitor is lower for Freesync than G-Sync, and there are more options available in terms of aspect ratios and panel types. There's also the fact that, unlike G-Sync, Freesync monitors have more than just a single DisplayPort input. If you're spending upwards of $400 on a monitor, being able to use it with HDMI devices like consoles and TV boxes, or even with an older DVI-equipped PC, is a real boon.

| | LG 29UM67/34UM67 | Acer XG270HU | BenQ XL2730Z | Asus ROG Swift PG278Q | AOC G2460PG | Acer Predator 4K2K XB280HK |
| --- | --- | --- | --- | --- | --- | --- |
| Variable Refresh Tech | Freesync | Freesync | Freesync | G-Sync | G-Sync | G-Sync |
| Size | 29"/34" | 27" | 27" | 27" | 24" | 28" |
| Resolution | 2560x1080 (21:9) | 2560x1440 | 2560x1440 | 2560x1440 | 1920x1080 | 3840x2160 |
| Refresh Rate | 48-75Hz | 40-144Hz | 40-144Hz | 30-144Hz | 144Hz | 60Hz |
| Response Time | 5ms | 1ms | 1ms | 1ms | 1ms | 1ms |
| Panel Type | IPS | TN | TN | TN | TN | TN |
| Inputs | DisplayPort, HDMI, DVI | DisplayPort, HDMI, DVI | DisplayPort, HDMI, DVI, USB | DisplayPort, USB | DisplayPort, USB | DisplayPort, USB |
| Price | $449/$649 | $499 | $599 | $780 | $399 | $759 |

The initial launch batch of Freesync monitors consists of the Acer XG270HU, a 1440p 27" 144Hz TN panel; the BenQ XL2730Z, another 1440p 27" 144Hz TN panel; and the LG 29UM67 and 34UM67, a pair of 21:9 2560x1080 pixel ultrawide IPS monitors that sport a 75Hz refresh rate. 4K 60Hz panels from Samsung, as well as a 144Hz 1080p panel from Viewsonic, are on the way, but aren't available at launch. The Acer XG270HU is set to retail for $499, which compares extremely favorably to the similarly equipped Asus ROG Swift PG278Q G-Sync monitor that retails for around $780. To beat the Acer's price for G-Sync, you have to step down to AOC's 1080p display, which goes for around $400.

However, there are some clear tradeoffs for the price. For starters, the Acer feels considerably cheaper than the Asus, with a non-height-adjustable stand, no USB hub, and a flimsy, glossy black plastic finish. And, while it's definitely a matter of taste, I'm not a fan of the less than subtle orange highlights that scream "gaming monitor." Why hardware manufacturers continue to slather gaming hardware in garish colours is beyond me. There's also the matter of the panel's 40-144Hz variable refresh rate area, which doesn't go quite as low as the ROG Swift's 30Hz. It's worth noting that while AMD is pitching 9-240Hz refresh rates for Freesync, they're only the possible extremes; it's up to individual monitor makers to implement them.

However, the Acer's TN panel itself is very good, and certainly matches the quality of the ROG Swift. Some corners have been cut to make the price, but in terms of actually using it as a gaming monitor, you'd be hard pressed to tell the difference between the two, particularly as both sport a fast 1ms response time. Colours and viewing angles are decent too, although if you go off axis too much you'll definitely notice some TN colour-shift.

There are no such problems with the LG 29UM67 and 34UM67, which sport some seriously nice 21:9 IPS panels. The resolution isn't high at just 2560x1080, nor are the 5ms response time and 48-75Hz refresh rate as competitive, but the colours are far richer, and the viewing angles much wider. They also have a much more premium feel and finish, even if you're limited to just two steps for height adjustment. That 75Hz refresh rate is also one of the highest out there for an IPS panel, at least until Acer finally launches its 144Hz 1440p IPS monitor. At $449 and $649, they're also competitively priced, coming in cheaper than their non-Freesync equivalents.

There aren't currently any 21:9 G-Sync monitors to compare the LGs to, with Acer's 34" 144Hz G-SYNC ultrawide not arriving until later this year. It will, however, sport a whopping 3440x1440 resolution. Still, this early on in Freesync's life, that you can choose a 21:9 monitor is impressive. Early pricing is promising too, and I suspect once the 1080p monitors hit the market, they're going to come in under the $400 starting price for G-Sync.

Is Freesync Worth it?

The question is, if you already have a nice monitor, is it worth upgrading to a Freesync one? For the most part, I'd say it is. Setup is as easy as ticking a box in Catalyst Control Center, and there's a substantial improvement in the look and feel of games without any noticeable performance impact. Taking the Acer XG270HU as an example, if you don't dip below its lowest 40Hz refresh rate, games look absolutely fantastic. It's surprising just how much of a difference removing V-Sync judder and screen tearing makes, and without having to worry about maintaining a 60fps minimum, you can crank the settings much higher than you otherwise would.

That said, thanks to how Freesync works, at lower frame rates there's a more noticeable judder when the panel is working at 40Hz, amplifying the effects of V-Sync on or off. There's also some ghosting on the Freesync monitors I tested (you can see a little of it in the video above) that doesn't affect a G-Sync screen. Indeed, Nvidia's on the offensive at the moment, mentioning that very fact. Still, it's not a total deal breaker by any means, and for the most part, games will be running far above that threshold anyway.

The smaller variable refresh rate window of the ultrawide LG panels makes them a less compelling offering for pro-gamers or those really wanting the lowest input lag and highest frame rates without tearing. In terms of the gaming experience, however, it's absolutely the monitor I'd go for. LG's IPS panel produces substantially better colours than the Acer, and the wide viewing angles make it a great choice for pulling double-duty as a movie-watching screen. Most impressive, and surprising, was just how much of a difference the 21:9 aspect ratio made. The ultrawide format works brilliantly for games, with the screen giving you a much wider field of view, and a cinematic feel that you just don't get with a 16:9 monitor. The only real downside to the LG for me is the resolution. As soon as there's a 3440x1440 version (seriously, make it happen LG), I'm all in with a 21:9 Freesync monitor.

However, there are some fringe cases where, like G-Sync, Freesync is less compelling. For instance, if you're already regularly pushing mega high frame rates to a 144Hz monitor, the benefits are less noticeable. The higher refresh rates tend to gloss over many screen tearing issues, even if they don't get rid of them completely. Variable frame rates also won't work miracles if you're having trouble running games at above 30fps, and in those cases, a GPU upgrade should be at the top of your list.

Freesync or G-Sync?

As for whether you should go Freesync or G-Sync, the answer is less clear. If you're already rocking a GPU from a particular brand, the choice is obvious. But if you're planning a GPU upgrade and wondering which is the better option, I'd say there's little to call between the two technologies. Both work extremely well at removing the problems associated with V-Sync on or off, and both are easy to set up. While I did encounter an issue where Freesync wouldn't work with Bioshock Infinite, it did work with every other game I tried it with. Hopefully a driver update will fix the issue, and enable the promised compatibility with Crossfire setups and dual-GPU cards like the R9 295X2. There's also the issue of what happens to games that run below a monitor's minimum refresh rate, and there Nvidia's solution is the superior one.

In the long run, though, it's tough to see where Nvidia is going to go with G-Sync. Yes, it has the superior technology and performance, but proprietary technologies, however well they work, generally tend to lose out to open standards. Plus, as AdaptiveSync matures and AMD's drivers improve, Freesync's little niggles like minimum refresh rates and ghosting could be solved. With monitor makers not having to pay a premium for proprietary modules, and with laptops particularly likely to have the technology baked in for power-saving reasons (lowering the refresh rate for static images), Freesync is the more attractive proposition, even if Nvidia has a wider slice of the GPU market. More monitor features and a lower cost of entry make it even better.

Perhaps Nvidia will come up with something to make G-Sync more attractive in the face of increased competition--the company has certainly done so in the past. Or maybe it'll eventually cave and embrace the AdaptiveSync standard. Regardless of the future though, if you're currently rocking an AMD GPU and want to jump in on variable refresh rate technology, there's no longer any need to consider switching to Nvidia. Freesync is a great bit of tech that costs less, makes your games buttery smooth, and may prove to be the more sensible investment in the long run.