If a game runs at 2-3 fps on an ATI HD 5400 card, what is the expected frame rate for the same game and settings on a mid-range video card today?

Low to mid range PC hardware comparison

This topic is locked from further discussion.

Are you trying to break the world record of worst thread ever posted on sw?

deeliman

Just asking a question in a thread with so many PC experts

If it's getting 2-3 fps, then it's not a low-end gaming card, it's a business-grade card, as in what they use to run Windows, a web browser and MS Office.

Low end gaming standard is what AMD brings with their APUs imo.

Gazillion times better.

If a game runs in 2-3 fps in a ATI HD5400 card, what is the expected frame rate for the same settings/game in a mid range video card today ?

loosingENDS

It depends on your CPU.

If it's a 2008-era dual core at around 3 GHz with 8 GB of DDR2-800 RAM, then roughly 30-40 fps with a GTX 770 or AMD Radeon HD 7970 in most games at 1080p.

If it's a 2008-era quad core (2.8 GHz or so), then 40-60 fps with the same GTX 770 or AMD Radeon HD 7970 in most games at 1080p.

If a game runs in 2-3 fps in a ATI HD5400 card, what is the expected frame rate for the same settings/game in a mid range video card today ?

loosingENDS

List the parameters under which the 2-3 fps occurs, such as:

Game

Resolution

Detail Levels (including Lowest-Max, levels of AA)

Machine specs (both yours and test machine)

If a game runs in 2-3 fps in a ATI HD5400 card, what is the expected frame rate for the same settings/game in a mid range video card today ?

loosingENDS

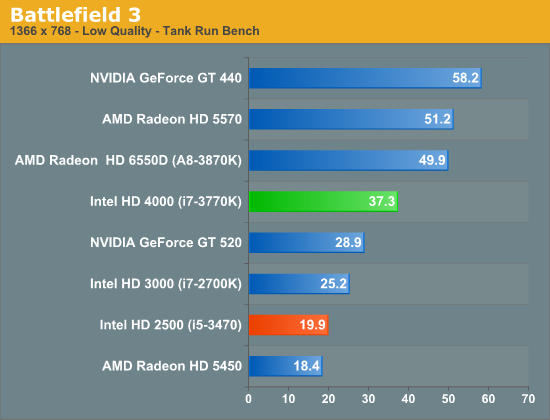

A card slower than Intel HD 4000.

http://www.anandtech.com/show/5871/intel-core-i5-3470-review-hd-2500-graphics-tested/3

A card slower than Intel HD 4000.

If a game runs in 2-3 fps in a ATI HD5400 card, what is the expected frame rate for the same settings/game in a mid range video card today ?

loosingENDS

Am I the only one more bothered by ron's benchies/links spam than by loosey? Like, what in the actual f*ck is wrong with this dude? Is he even a human, alien or bot?

am i the only one more bothered by ron benchies/links spam than loosey? like what in the actual f*ck is wrong with this dude? i he even a human, alien or bot?

silversix_

I dunno. I can usually follow ron's posts. Even if I get stumped by his posts sometimes, I eventually catch up. :lol:

English isn't ron's first language. He can't read sarcasm and takes everything said literally.

am i the only one more bothered by ron benchies/links spam than loosey? like what in the actual f*ck is wrong with this dude? i he even a human, alien or bot?

silversix_

Are you trying to break the world record of worst thread ever posted on sw?

deeliman

He certainly has the record for best anyway

That Witcher 2 thread he made a while back (last year?) was incredible

Mid range parts have gotten pretty strong. My 7870 XT at 1200 MHz (no voltage adjustment) is in the mid to high 3 teraflop range (7970 levels) and I paid less than $200 for it 6 months ago (mid range cards nowadays can play almost every game at very high base settings @ 1920x1200).

My card's on the lower end of mid range and it performs almost twice as fast as the consoles' GPU (not only that, but we still have 20 nm cards with a brand new architecture due next year).

Pretty sure he holds that title already.

[QUOTE="clyde46"]

[QUOTE="deeliman"]

Are you trying to break the world record of worst thread ever posted on sw?

indzman

nope. Sniper4321 is still king :P

Are you forgetting that loosingends tried to claim Witcher 2 on 360 had better graphics than it did maxed out on a PC?

[QUOTE="loosingENDS"]

If a game runs in 2-3 fps in a ATI HD5400 card, what is the expected frame rate for the same settings/game in a mid range video card today ?

jun_aka_pekto

List the parameters where the 2-3fps occur such:

Game

Resolution

Detail Levels (including Lowest-Max, levels of AA)

Machine specs (both yours and test machine)

Game: My RPG developed in Unity

Resolution: 1080p, getting about 3-4 times better fps in 720p

Detail Level: Max, all cool effects on (color grading, depth of field, glow effects, but no camera motion blur), grass rendered far into the distance, medium thickness

I am working on an ATI HD 5400, an old and very basic DX11 card, getting 2.5-3 fps in the worst case. Other maps may get 30-40 fps, but I want to know what my more detailed ones will look like on modern mid range cards (or new consoles) and how much to cut back in combat so it is as fluid as possible.
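For a rough sense of what the 1080p versus 720p numbers above imply, here is a small Python sketch. It only compares pixel counts. The 3-4x figure comes from the post; the helper name and the idea that frame time scales with pixels drawn are assumptions for illustration, not measurements.

```python
def pixel_count(width, height):
    """Number of pixels drawn per frame at a given resolution."""
    return width * height


full_hd = pixel_count(1920, 1080)   # 1080p
hd = pixel_count(1280, 720)         # 720p

# How many times more pixels 1080p draws than 720p.
pixel_ratio = full_hd / hd          # 2.25

# Speedup reported in the post when dropping from 1080p to 720p.
reported_speedup = (3.0, 4.0)

# Assumption: if frame time scaled only with pixel count, the speedup
# would be about pixel_ratio. Anything above 1.0 here is "extra" gain.
excess = tuple(s / pixel_ratio for s in reported_speedup)

print(f"1080p draws {pixel_ratio:.2f}x the pixels of 720p")
print(f"speedup beyond pixel scaling: {excess[0]:.2f}x to {excess[1]:.2f}x")
```

The reported speedup is larger than the 2.25x drop in pixel count, which hints that something besides raw pixel throughput (possibly memory or bandwidth pressure from the full-screen effects at 1080p) is also limiting the card. That reading is a guess and would need profiling to confirm.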

Are you forgetting that loosingends tried to claim Witcher 2 on 360 had better graphics than it did maxed out on a PC?.

Snugenz

Which is a fact: in some areas Witcher 2 on 360 had actual lights and shadows rather than the silly PC bloom with no proper lighting/shadows.

See the Digital Foundry comparison for pics and proof

[QUOTE="jun_aka_pekto"]

[QUOTE="loosingENDS"]

If a game runs in 2-3 fps in a ATI HD5400 card, what is the expected frame rate for the same settings/game in a mid range video card today ?

loosingENDS

List the parameters where the 2-3fps occur such:

Game

Resolution

Detail Levels (including Lowest-Max, levels of AA)

Machine specs (both yours and test machine)

Game: My RPG developed in Unity

Resolution: 1080p, getting about 3-4x times better fps in 720p

Detail Level: Max, all cool effects on (color grading, depth of field, glow effects, but no camera motion blur), grass far far away, medium thickness

I am working on a 5400ATI, old and very basic DX11 card, getting 2.5-3 fps in the worst case, other maps may get 30-40 fps, but i want to know what my more detailed ones will look like in modern mod range cards (or new consoles) and how much to cut back in combat so it is as fluid as possible.

Put it this way: your 5400 GPU is more than 10x slower than today's mid-range cards (or the console equivalent, i.e. the PS4 GPU). You can get an overclocked 2 GB 7850 with two free games for $145 ($125 after rebate). The 7850 has 1100% more bandwidth, and its pixel and texel rates are also nearly 1000% faster than a 5450's....

[QUOTE="Snugenz"]

Are you forgetting that loosingends tried to claim Witcher 2 on 360 had better graphics than it did maxed out on a PC?.

loosingENDS

Which is a fact, in some areas Witcher 2 on 360 had actual lights and shadows than the silly PC bloom and no proper lighting/shadows

See the Digital Foundry comparisson for pics and proof

Comparing vanilla Witcher 2 vs the 360 Enhanced Edition.... even so, the vanilla PC version looked miles better than the 360 version

Put it this way your 5400 gpu is more then 10x slower then today's mid ranged (or console equivalent ie PS4 gpu ) You can get a overclocked 2gb 7850 with two free games for $145 ($125 after rebate). 7850 has 1100% more bandwidth, and pixel and texel rates are also nearly 1000% faster then a 5450....

04dcarraher

That is great :)

I do plan to get a proper video card to work on the final refinement of the game. I will get something close to PS4/Xbox One hardware in order to make sure I get good frame rates on those systems.
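As a back-of-envelope check on the "more than 10x" figure quoted above, the sketch below scales the reported worst-case 2.5-3 fps linearly. It assumes the scene is entirely GPU-bound and that performance scales with the quoted factor, which real games rarely do exactly; `scaled_fps` is a hypothetical helper, not part of any tool.

```python
def scaled_fps(measured_fps, speedup):
    """Naive estimate: assumes a fully GPU-bound scene that scales linearly."""
    return measured_fps * speedup


worst_case = (2.5, 3.0)  # fps reported on the HD 5400-series card
speedup = 10.0           # "more than 10x" from the reply, used as a lower bound

# Estimated frame rate range on a card about 10x faster.
estimate = tuple(scaled_fps(f, speedup) for f in worst_case)

# Same estimate expressed as time per frame in milliseconds.
frame_time_ms = tuple(1000.0 / f for f in estimate)

print(f"estimated fps: {estimate[0]:.0f} to {estimate[1]:.0f}")
print(f"frame time: {frame_time_ms[0]:.1f} ms down to {frame_time_ms[1]:.1f} ms")
```

Under those assumptions the worst-case map lands at around 25-30 fps, so some cutting back in combat would likely still be needed for a steady 30 fps. A CPU limit would lower the result further.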

[QUOTE="loosingENDS"]

[QUOTE="Snugenz"]

Are you forgetting that loosingends tried to claim Witcher 2 on 360 had better graphics than it did maxed out on a PC?.

04dcarraher

Which is a fact, in some areas Witcher 2 on 360 had actual lights and shadows than the silly PC bloom and no proper lighting/shadows

See the Digital Foundry comparisson for pics and proof

Comparing Vanilla Witcher 2 vs 360 Enhanced Edition.... even still Pc vanilla version looked miles better then the 360 version

[img]http://images.eurogamer.net/2012/articles//a/1/4/7/5/1/4/2/360_lighting2.png[/img]

[img]http://images.eurogamer.net/2012/articles//a/1/4/7/5/1/4/2/PC_lighting2.png[/img]

[img]http://images.eurogamer.net/2012/articles//a/1/4/7/5/1/4/2/360_lighting1.png[/img]

[img]http://images.eurogamer.net/2012/articles//a/1/4/7/5/1/4/2/PC_lighting1.png[/img]

http://www.eurogamer.net/articles/digitalfoundry-the-witcher-2-tech-analysis

In some areas Witcher 2 on 360 had actual lights and shadows rather than the silly PC bloom with no proper lighting/shadows.

See the Digital Foundry comparison for pics and proof. In the pics above, 360 is way ahead in lighting/shadowing and HDR.

[QUOTE="jun_aka_pekto"]

[QUOTE="loosingENDS"]

If a game runs in 2-3 fps in a ATI HD5400 card, what is the expected frame rate for the same settings/game in a mid range video card today ?

loosingENDS

List the parameters where the 2-3fps occur such:

Game

Resolution

Detail Levels (including Lowest-Max, levels of AA)

Machine specs (both yours and test machine)

Game: My RPG developed in Unity

Resolution: 1080p, getting about 3-4x times better fps in 720p

Detail Level: Max, all cool effects on (color grading, depth of field, glow effects, but no camera motion blur), grass far far away, medium thickness

I am working on a 5400ATI, old and very basic DX11 card, getting 2.5-3 fps in the worst case, other maps may get 30-40 fps, but i want to know what my more detailed ones will look like in modern mod range cards (or new consoles) and how much to cut back in combat so it is as fluid as possible.

You should get quite a good jump with mid-range cards today. I have an HD 5770 I bought back in 2009 and it's a much better card than the HD 5400. When I replaced the 5770 with my current GTX 560 Ti, I thought the performance jump was huge. That's while keeping my Phenom II X3 720BE CPU.

My rough guess would be equivalent framerates (upper 20's *28fps* to upper 30's outdoors) on say...... Crysis:

The HD 5770 would be 1440x900, DX9, Detail on High (2nd highest), 2xAA

I get somewhat better framerates than the above with the following:

GTX 560 Ti at 1080p, DX10, Detail on Very High, 4xAA

The mid-range cards nowadays would slap my GTX 560 Ti silly. I'm fairly confident they'll be an order of magnitude or more better than the HD 5400. I can't say much about TW2 since I'm not big on RPG games.

Besides the GPU, I would guess you plan on buying a new CPU as well.

[QUOTE="Snugenz"]

Are you forgetting that loosingends tried to claim Witcher 2 on 360 had better graphics than it did maxed out on a PC?.

loosingENDS

Which is a fact, in some areas Witcher 2 on 360 had actual lights and shadows than the silly PC bloom and no proper lighting/shadows

See the Digital Foundry comparisson for pics and proof

I rest my case.

[QUOTE="jun_aka_pekto"]

[QUOTE="loosingENDS"]

If a game runs in 2-3 fps in a ATI HD5400 card, what is the expected frame rate for the same settings/game in a mid range video card today ?

loosingENDS

List the parameters where the 2-3fps occur such:

Game

Resolution

Detail Levels (including Lowest-Max, levels of AA)

Machine specs (both yours and test machine)

Game: My RPG developed in Unity

Resolution: 1080p, getting about 3-4x times better fps in 720p

Detail Level: Max, all cool effects on (color grading, depth of field, glow effects, but no camera motion blur), grass far far away, medium thickness

I am working on a 5400ATI, old and very basic DX11 card, getting 2.5-3 fps in the worst case, other maps may get 30-40 fps, but i want to know what my more detailed ones will look like in modern mod range cards (or new consoles) and how much to cut back in combat so it is as fluid as possible.

Intel HD 4000's market share is most likely larger than that of AMD's Radeon HD cards with 80 stream processors.

[QUOTE="loosingENDS"]

[QUOTE="jun_aka_pekto"]

List the parameters where the 2-3fps occur such:

Game

Resolution

Detail Levels (including Lowest-Max, levels of AA)

Machine specs (both yours and test machine)

jun_aka_pekto

Game: My RPG developed in Unity

Resolution: 1080p, getting about 3-4x times better fps in 720p

Detail Level: Max, all cool effects on (color grading, depth of field, glow effects, but no camera motion blur), grass far far away, medium thickness

I am working on a 5400ATI, old and very basic DX11 card, getting 2.5-3 fps in the worst case, other maps may get 30-40 fps, but i want to know what my more detailed ones will look like in modern mod range cards (or new consoles) and how much to cut back in combat so it is as fluid as possible.

You should get quite a good jump with mid-range cards today. I have an HD 5770 I bought back in 2009 and it's a much better card than the HD 5400. When I replaced the 5770 with my current GTX 560 Ti, I thought the performance jump was huge. That's while keeping my Phenom II X3 720BE CPU.

My rough guess would be equivalent framerates (upper 20's *28fps* to upper 30's outdoors) on say...... Crysis:

The HD 5770 would be 1440x900, DX9, Detail on High (2nd highest), 2xAA

I get somewhat better framerates than the above with the following:

GTX 560 Ti at 1080p, DX10, Detail on Very High, 4xAA

The mid-range cards nowadays would slap silly my GTX 560 Ti. I'm fairly confident they'll be several magnitudes better than the HD 5400. I can't say much about TW2 since I'm not big on RPG games.

Besides the GPU, I wuold guess you plan on buying a new CPU as well.

I plan to start with a GPU and see how it goes. The thing is that the slower the system I optimize for, the better the chances the game will run well on PS4/Xbox One.

Also, the GPU is by far the biggest problem I guess, since it is the most entry-level GPU you could get some years ago.

I have a dual core CPU, an Intel Core 2 Duo at 3 GHz, and I am not sure if I would have to change it. What CPU would get closer to Xbox One/PS4 specs, for example?

[QUOTE="04dcarraher"]

[QUOTE="loosingENDS"]

Which is a fact, in some areas Witcher 2 on 360 had actual lights and shadows than the silly PC bloom and no proper lighting/shadows

See the Digital Foundry comparisson for pics and proof

loosingENDS

Comparing Vanilla Witcher 2 vs 360 Enhanced Edition.... even still Pc vanilla version looked miles better then the 360 version

[img]http://images.eurogamer.net/2012/articles//a/1/4/7/5/1/4/2/360_lighting2.png[/img]

[img]http://images.eurogamer.net/2012/articles//a/1/4/7/5/1/4/2/PC_lighting2.png[/img]

[img]http://images.eurogamer.net/2012/articles//a/1/4/7/5/1/4/2/360_lighting1.png[/img]

[img]http://images.eurogamer.net/2012/articles//a/1/4/7/5/1/4/2/PC_lighting1.png[/img]

http://www.eurogamer.net/articles/digitalfoundry-the-witcher-2-tech-analysis

In some areas Witcher 2 on 360 had actual lights and shadows than the silly PC bloom and no proper lighting/shadows

See the Digital Foundry comparisson for pics and proof, in the pics above 360 is way ahead in lighting/shadowing and HDR

The only difference is that on 360 they changed the light sources a little bit, so light comes from different angles and that's why it looks different on the 360. Though in no way does the 360 version look better than the PC version, that's just extremely silly.

[QUOTE="loosingENDS"]

[QUOTE="04dcarraher"]

Comparing Vanilla Witcher 2 vs 360 Enhanced Edition.... even still Pc vanilla version looked miles better then the 360 version

SchnabbleTab

http://www.eurogamer.net/articles/digitalfoundry-the-witcher-2-tech-analysis

In some areas Witcher 2 on 360 had actual lights and shadows than the silly PC bloom and no proper lighting/shadows

See the Digital Foundry comparisson for pics and proof, in the pics above 360 is way ahead in lighting/shadowing and HDR

The only difference is that on 360 they changed the light sources a little bit, so light comes from different angles and that's why it looks different on the 360. Though in no way does the 360 version look better than the PC version, that's just extremly silly.

The difference is that there is no real light source in the PC one, so it does not have proper HDR and shadows either. The lighting is bloomy last-gen lighting from before games could have real lighting.

I wonder how PC people that champion next-gen lighting and graphics can say PC looks better in the pics I posted.

The hypocrisy is devastating

[QUOTE="SchnabbleTab"]

[QUOTE="loosingENDS"]

http://www.eurogamer.net/articles/digitalfoundry-the-witcher-2-tech-analysis

In some areas Witcher 2 on 360 had actual lights and shadows than the silly PC bloom and no proper lighting/shadows

See the Digital Foundry comparisson for pics and proof, in the pics above 360 is way ahead in lighting/shadowing and HDR

loosingENDS

The only difference is that on 360 they changed the light sources a little bit, so light comes from different angles and that's why it looks different on the 360. Though in no way does the 360 version look better than the PC version, that's just extremly silly.

The difference is that there is no real light source on PC one, thus they do not have proper HDR and shadows either, the lighting is a bloomy last gen lighting before games could have real lighting

I wonder how PC people that champion next gen lightingand graphics can say PC looks better in the pics i posted

The hypocricy is devastating

so next gen lighting... :lol:

[QUOTE="loosingENDS"]

[QUOTE="SchnabbleTab"]

The only difference is that on 360 they changed the light sources a little bit, so light comes from different angles and that's why it looks different on the 360. Though in no way does the 360 version look better than the PC version, that's just extremly silly.

MK-Professor

The difference is that there is no real light source on PC one, thus they do not have proper HDR and shadows either, the lighting is a bloomy last gen lighting before games could have real lighting

I wonder how PC people that champion next gen lightingand graphics can say PC looks better in the pics i posted

The hypocricy is devastating

so next gen lighting... :lol:

The fact that you don't comment on the pics I posted and try to cover the facts I stated by posting .... other pics only proves my point 100%

The fact remains: in the pics I posted, Witcher 2 lighting is vastly better and more next gen on 360 and practically a last-gen joke on PC
